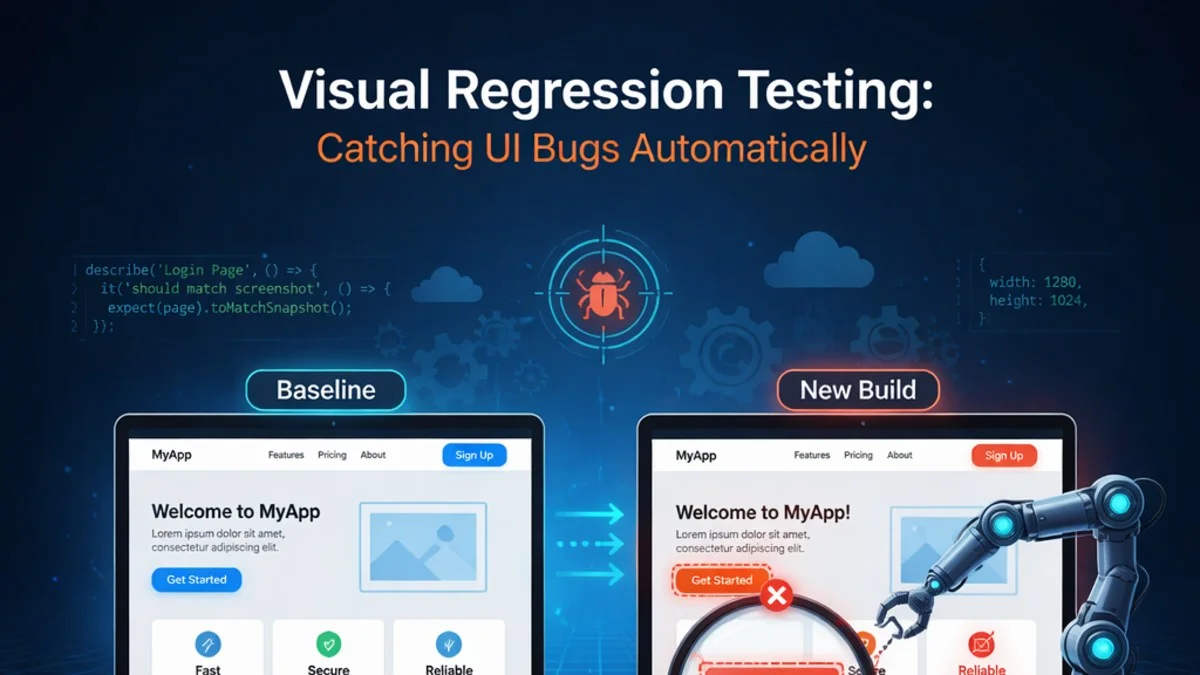

The Problem Visual Regression Testing Solves

Your team ships a CSS refactor. The intent is to clean up some technical debt in your stylesheet. The tests pass. The PR is reviewed and merged. Two days later, a customer emails to say the invoice table is completely broken on their screen—columns overlapping, text cut off, buttons invisible against the background.

The bug was introduced by a specificity change that only affected tables with more than 10 rows inside a certain layout wrapper. No unit test catches that. No integration test catches that. The only way to catch it is to look at the UI.

Visual regression testing automates that looking.

How Visual Regression Testing Works

The mechanics are straightforward:

- Capture screenshots of your UI in a known-good state (the baseline)

- After each change, capture screenshots again

- Compare the new screenshots against the baseline pixel by pixel

- Flag differences for human review

The complexity is in managing false positives (dynamic content, animations, font rendering differences) and in establishing a workflow that makes reviewing differences fast and trustworthy.
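To make the comparison step concrete, here is a minimal sketch of a pixel-by-pixel diff over raw RGBA buffers. The function names diffRatio and needsReview, the per-channel tolerance, and the buffer layout are illustrative assumptions; real tools use more refined color-distance metrics than this.

```js
// Compare two same-sized RGBA buffers and return the fraction of pixels that differ.
// `tolerance` is a hypothetical per-channel allowance (0-255) for rendering noise.
function diffRatio(baseline, current, tolerance = 0) {
  if (baseline.length !== current.length || baseline.length % 4 !== 0) {
    throw new Error('Buffers must be RGBA data of identical dimensions');
  }
  const totalPixels = baseline.length / 4;
  if (totalPixels === 0) return 0;

  let changed = 0;
  for (let i = 0; i < baseline.length; i += 4) {
    // A pixel counts as changed if any of its four channels moves past the tolerance.
    for (let c = 0; c < 4; c++) {
      if (Math.abs(baseline[i + c] - current[i + c]) > tolerance) {
        changed++;
        break;
      }
    }
  }
  return changed / totalPixels;
}

// Flag the screenshot for human review when too many pixels changed.
function needsReview(baseline, current, maxDiffPixelRatio = 0.02) {
  return diffRatio(baseline, current) > maxDiffPixelRatio;
}

// Usage: a 2x2 image where one pixel changes from white to red.
const white = [255, 255, 255, 255];
const red = [255, 0, 0, 255];
const before = Uint8Array.from([...white, ...white, ...white, ...white]);
const after = Uint8Array.from([...white, ...red, ...white, ...white]);

console.log(diffRatio(before, after)); // 0.25
console.log(needsReview(before, after)); // true
```

The ratio threshold plays the same role as a "maximum share of changed pixels" setting: small amounts of noise pass, while a layout shift that touches a quarter of the image does not.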

Percy: The Easiest Way to Get Started

Percy (by BrowserStack) is one of the most widely used visual regression services. It integrates with your existing E2E or component tests and handles rendering and screenshot comparison in the cloud.

Integrate Percy with Playwright:

    // Percy's documentation imports percySnapshot as the package's default export
    import percySnapshot from '@percy/playwright';
    import { test } from '@playwright/test';

    // Relative URLs assume baseURL is set in your Playwright config
    test('invoice list renders correctly', async ({ page }) => {
      await page.goto('/admin/invoices');

      // Wait for data to load
      await page.waitForSelector('[data-testid="invoice-table"]');

      // Capture a snapshot
      await percySnapshot(page, 'Invoice List - Default State');
    });

    test('invoice list shows empty state', async ({ page }) => {
      // Assumes the session is already logged in as a client with no invoices
      await page.goto('/portal/invoices');
      await page.waitForSelector('[data-testid="empty-state"]');
      await percySnapshot(page, 'Invoice List - Empty State');
    });

    test('invoice detail with overdue badge', async ({ page }) => {
      await page.goto('/admin/invoices/42');
      await page.waitForSelector('[data-testid="invoice-detail"]');
      await percySnapshot(page, 'Invoice Detail - Overdue');
    });

Run with Percy:

    PERCY_TOKEN=your_token npx percy exec -- npx playwright test

Percy uploads a DOM snapshot of each page to its service, renders it in its own browsers, compares the result to the baseline, and posts the outcome to your PR. Reviewers see a side-by-side diff of every visual change.

Playwright Visual Comparisons (Self-Hosted)

If you'd rather not use a third-party service, Playwright has built-in screenshot comparison:

    import { test, expect } from '@playwright/test';

    test('dashboard renders correctly', async ({ page }) => {
      await page.goto('/admin/dashboard');
      await page.waitForLoadState('networkidle');

      // First run: no baseline exists yet, so Playwright writes one and reports it as missing
      // Subsequent runs: compares against the baseline
      await expect(page).toHaveScreenshot('dashboard.png', {
        maxDiffPixelRatio: 0.02, // Allow up to 2% of pixels to differ
        threshold: 0.1, // Per-pixel color tolerance (0 = strict, 1 = lax)
      });
    });

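By default, Playwright stores baseline screenshots in a directory named after the test file (for example, dashboard.spec.ts-snapshots) and appends the browser and platform to each file name. A different location, such as a __screenshots__ directory, can be set with the snapshotPathTemplate config option. Commit the baselines to version control. Because rendering differs across operating systems, generate them in the same environment your CI uses. When a screenshot changes, Playwright fails the test and generates a diff image.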

To update baselines after an intentional change:

    npx playwright test --update-snapshots

This approach requires no external service but needs more configuration to handle dynamic content.
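Part of that configuration can live in the Playwright config file so individual tests don't repeat it. The following is a sketch, not a recommended setup: the tolerance values simply mirror the dashboard example, and the path template is one possible layout. It leaves out the browser and platform, so it assumes a single browser project and a single operating system for generating baselines.

```js
// playwright.config.js (sketch; values mirror the dashboard example above)
import { defineConfig } from '@playwright/test';

export default defineConfig({
  // Optional: keep every baseline under one __screenshots__ folder.
  // Assumption: one browser project and one OS, since neither appears in the path.
  snapshotPathTemplate: '{testDir}/__screenshots__/{testFilePath}/{arg}{ext}',

  expect: {
    // Defaults applied to every toHaveScreenshot() call unless a test overrides them.
    toHaveScreenshot: {
      maxDiffPixelRatio: 0.02,
      threshold: 0.1,
    },
  },
});
```

With defaults in one place, a test only needs to pass options when a particular page genuinely needs a looser or stricter tolerance.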

Handling Dynamic Content

The biggest challenge in visual regression testing is dynamic content: dates, random data, loading states, animations. These change between runs and produce false positives.

Strategies to handle dynamic content:

Mask dynamic regions:

    await expect(page).toHaveScreenshot('invoice-list.png', {
      mask: [
        page.locator('[data-testid="current-date"]'),
        page.locator('[data-testid="last-login"]'),
      ],
    });

Masked regions are covered with a solid-color box (pink by default, configurable with the maskColor option) in both the baseline and the new screenshot, eliminating false positives from time-based content.
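To see why masking removes the noise, consider what it does at the pixel level. This is a simplified sketch on raw RGBA buffers, not how any particular tool implements it; applyMask, buffersEqual, and the region shape are hypothetical names chosen for illustration.

```js
// Return a copy of an RGBA buffer with one rectangle painted a solid color.
// Magenta is an arbitrary choice here; any fixed color works.
function applyMask(pixels, imageWidth, rect, color = [255, 0, 255, 255]) {
  const masked = Uint8Array.from(pixels);
  for (let y = rect.y; y < rect.y + rect.height; y++) {
    for (let x = rect.x; x < rect.x + rect.width; x++) {
      const offset = (y * imageWidth + x) * 4;
      masked.set(color, offset);
    }
  }
  return masked;
}

function buffersEqual(a, b) {
  return a.length === b.length && a.every((value, i) => value === b[i]);
}

// Two 2x1 screenshots that differ only in the right-hand pixel (say, a timestamp).
const monday = Uint8Array.from([10, 10, 10, 255, 200, 0, 0, 255]);
const tuesday = Uint8Array.from([10, 10, 10, 255, 0, 200, 0, 255]);
const timestampRegion = { x: 1, y: 0, width: 1, height: 1 };

console.log(buffersEqual(monday, tuesday)); // false
console.log(
  buffersEqual(
    applyMask(monday, 2, timestampRegion),
    applyMask(tuesday, 2, timestampRegion)
  )
); // true
```

The trade-off is that anything inside a masked region is no longer checked at all, so masks are best kept as small as the dynamic content they cover.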

Freeze time in your application:

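    // Override Date in the browser to freeze time.
    // Register this before page.goto() so it runs ahead of the app's own scripts.
    await page.addInitScript(() => {
      const RealDate = Date;
      const frozenTime = new RealDate('2026-01-15T12:00:00Z').getTime();

      window.Date = class extends RealDate {
        constructor(...args) {
          // new Date() returns the frozen instant; explicit arguments behave normally
          if (args.length === 0) super(frozenTime);
          else super(...args);
        }

        static now() {
          return frozenTime;
        }
      };
    });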
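As an alternative to overriding Date by hand, Playwright 1.45 and later include a clock API that can pin the time for you. The sketch below wraps it in a small helper; freezeTime is a hypothetical name, and the validation step is an addition for illustration rather than anything Playwright requires.

```js
// Freeze the browser clock using Playwright's clock API (available from v1.45).
// `freezeTime` is a hypothetical helper name; `page` is a Playwright Page.
async function freezeTime(page, isoTimestamp) {
  const fixed = new Date(isoTimestamp);
  if (Number.isNaN(fixed.getTime())) {
    throw new Error(`Invalid timestamp: ${isoTimestamp}`);
  }
  // Date.now() and new Date() in the page now return this instant; timers keep running.
  await page.clock.setFixedTime(fixed);
}

// Usage inside a test, before navigating:
//   await freezeTime(page, '2026-01-15T12:00:00Z');
//   await page.goto('/admin/invoices');
```

Failing fast on a malformed timestamp avoids a subtle problem: an invalid date would otherwise surface only as confusing screenshot diffs.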

Use stable test data: Seed your test database with fixed data rather than factory-generated data for visual tests. Visual tests should always render the same data.
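Where hand-written fixtures are impractical, one option is to keep the factory but drive it from a fixed seed, so every run produces identical records. This is a swapped-in variation on the advice above, not a replacement for it. The generator is a small seeded PRNG in the style of mulberry32, and buildInvoices and its field names are assumptions made for illustration.

```js
// Small seeded PRNG (mulberry32 style): the same seed always yields the same sequence.
function mulberry32(seed) {
  let state = seed >>> 0;
  return function next() {
    state = (state + 0x6d2b79f5) | 0;
    let t = Math.imul(state ^ (state >>> 15), state | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

const STATUSES = ['paid', 'overdue', 'draft'];

// Build `count` invoices from a seed; field names are illustrative assumptions.
function buildInvoices(seed, count) {
  const random = mulberry32(seed);
  return Array.from({ length: count }, (_, i) => ({
    id: i + 1,
    status: STATUSES[Math.floor(random() * STATUSES.length)],
    amountCents: Math.floor(random() * 500000),
  }));
}

// Two separate "runs" with the same seed render the same table.
const runA = buildInvoices(42, 12);
const runB = buildInvoices(42, 12);
console.log(JSON.stringify(runA) === JSON.stringify(runB)); // true
```

Avoid Math.random() and the current time anywhere in the seeding path, since either one reintroduces the run-to-run variation this is meant to remove.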

Disable animations:

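    // Call after page.goto(): the injected style tag applies only to the current document.
    // toHaveScreenshot() already disables CSS animations by default, so this mainly helps other capture paths.
    await page.addStyleTag({
      content: `
        *, *::before, *::after {
          animation-duration: 0s !important;
          transition-duration: 0s !important;
        }
      `,
    });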

Component-Level Visual Testing with Storybook

Page-level screenshots are coarse. A single page might contain dozens of components, and a failed screenshot doesn't tell you which component caused the regression.

Component-level visual testing (using Storybook + Chromatic or Storybook + Percy) tests individual components in isolation:

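    // InvoiceStatusBadge.stories.js
    // Import path is an assumption; adjust it to where the component lives
    import { InvoiceStatusBadge } from './InvoiceStatusBadge';

    export default {
      title: 'Components/InvoiceStatusBadge',
      component: InvoiceStatusBadge,
    };

    // One story per visual state of the badge
    export const Paid = {
      args: { status: 'paid' },
    };

    export const Overdue = {
      args: { status: 'overdue' },
    };

    export const Draft = {
      args: { status: 'draft' },
    };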

Chromatic (Storybook's visual testing service) automatically screenshots every story and compares them to baselines. When a designer changes the badge styling, Chromatic shows exactly which states changed.

This approach scales much better than page-level tests: you get coverage of every component in every state, not just the states that appear on pages you happen to test.

Responsive Testing

Visual regressions often appear only at specific viewport sizes. Cover the viewports your users actually use:

    import { test, expect } from '@playwright/test';

    const VIEWPORTS = [
      { name: 'mobile', width: 390, height: 844 },
      { name: 'tablet', width: 768, height: 1024 },
      { name: 'desktop', width: 1440, height: 900 },
    ];

    for (const { name, width, height } of VIEWPORTS) {
      test(`invoice list - ${name}`, async ({ page }) => {
        // Resize before navigating so the page lays out at the target width
        await page.setViewportSize({ width, height });
        await page.goto('/admin/invoices');
        await page.waitForSelector('[data-testid="invoice-table"]');
        await expect(page).toHaveScreenshot(`invoice-list-${name}.png`);
      });
    }

Multiplying your test count by three viewport sizes is manageable; the screenshots are fast to capture and the comparisons are automatic.

Setting Up Review Workflows

Visual regression testing only works if the review process is fast. When screenshots change, someone needs to look at the diff and decide: intentional change (approve and update baseline) or regression (reject and fix).

Good tooling makes this a 30-second decision per change. Percy and Chromatic both have excellent diff UIs that show before/after with highlighted differences. The review workflow should be:

- PR is opened

- Visual tests run and screenshots are compared

- Changed screenshots appear in the PR review

- Reviewer approves intentional visual changes

- PR merges only when all visual changes are approved

Make visual approval a required PR check for pages and components that receive significant visual work.
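The merge rule in that workflow can be expressed as a small decision function. This is a sketch of the logic only: the review state names ('unchanged', 'approved', 'pending', 'rejected') and the returned shape are hypothetical, and services such as Percy and Chromatic report their own statuses.

```js
// Decide the status of a "visual review" PR check from per-snapshot review states.
// The state names ('unchanged', 'approved', 'pending', 'rejected') are hypothetical.
function visualCheckStatus(snapshots) {
  const rejected = snapshots.filter((s) => s.review === 'rejected');
  const pending = snapshots.filter((s) => s.review === 'pending');

  // A rejected change is a regression: fail the check until it is fixed.
  if (rejected.length > 0) {
    return { state: 'failure', blocking: rejected.map((s) => s.name) };
  }
  // Unreviewed changes keep the check pending, which blocks the merge.
  if (pending.length > 0) {
    return { state: 'pending', blocking: pending.map((s) => s.name) };
  }
  return { state: 'success', blocking: [] };
}

const result = visualCheckStatus([
  { name: 'Invoice List - Default State', review: 'approved' },
  { name: 'Invoice Detail - Overdue', review: 'pending' },
  { name: 'Invoice List - Empty State', review: 'unchanged' },
]);

console.log(result.state); // pending
console.log(result.blocking); // [ 'Invoice Detail - Overdue' ]
```

Listing the blocking snapshots by name keeps the decision fast, because the reviewer can go straight to the diffs that still need attention.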

What to Snapshot and What to Skip

Don't try to snapshot everything. Focus on:

High-value snapshots:

- Key user-facing pages (dashboard, invoice view, project overview)

- Component states that are hard to test otherwise (hover, error, empty)

- Responsive layouts that have broken before

- Print stylesheets for PDF exports

Skip or be careful with:

- Pages with lots of dynamic content (high noise)

- Admin forms with many fields (high maintenance)

- Pages that change frequently by design

Practical Takeaways

- Visual regression testing catches CSS bugs that unit and integration tests completely miss

- Percy is the easiest starting point; Playwright's built-in comparisons work well for self-hosted setups

- Mask or freeze dynamic content to eliminate false positives

- Component-level testing with Storybook + Chromatic scales better than page-level tests for large UIs

- Test at multiple viewport sizes; regressions often appear only on specific screen widths

- Keep the review workflow fast; visual testing only adds value if approvals happen quickly
