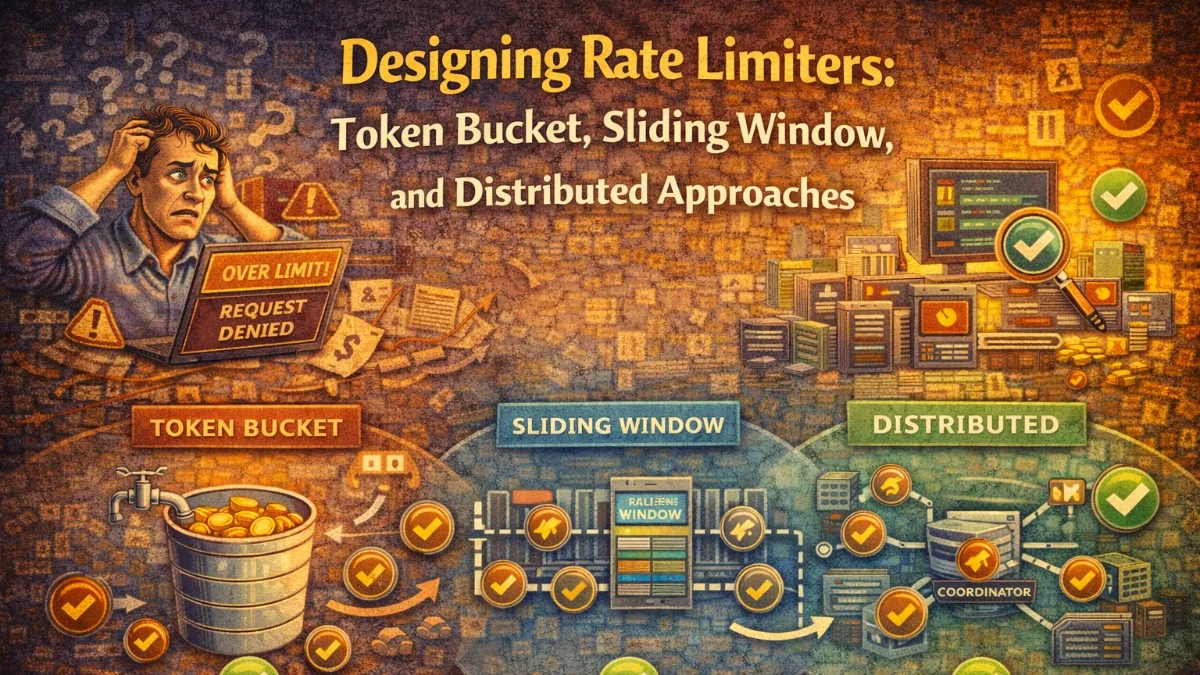

Rate limiting is one of those features that looks simple until you implement it seriously. A naive counter in Redis seems to work fine until you discover the fixed window boundary attack. You switch to sliding windows and realize the memory cost is prohibitive at scale. You reach for a token bucket and find that it requires careful clock synchronization in a distributed cluster.

This post covers the three main algorithms, their tradeoffs, and how to implement rate limiting correctly when your application runs on multiple servers.

Why Rate Limiting Matters

Rate limiting serves several purposes:

- Availability: Prevent a single user or bot from consuming all available resources.

- Cost control: Downstream API calls, database queries, and compute have real costs.

- Fairness: Ensure all users get reasonable access rather than one aggressive client starving others.

- Security: Slow down brute-force attacks against authentication endpoints.

- Business enforcement: Enforce usage tiers in a paid API product.

The algorithm you choose depends on which of these goals matters most for a given endpoint.

Algorithm 1: Fixed Window Counter

The simplest approach: count requests in fixed time windows (e.g., per minute). Reset the counter at the start of each window.

Window: 09:00:00 - 09:01:00

Requests allowed: 100

Counter: 73

Status: ALLOWED

Implementation

class FixedWindowRateLimiter

{

public function __construct(private readonly Redis $redis) {}

public function isAllowed(string $key, int $limit, int $windowSeconds): bool

{

$windowKey = $key . ':' . floor(time() / $windowSeconds);

$count = $this->redis->incr($windowKey);

if ($count === 1) {

// First request in this window, set expiry

$this->redis->expire($windowKey, $windowSeconds * 2);

}

return $count <= $limit;

}

}

The Boundary Attack Problem

Fixed windows have a critical flaw. If your limit is 100 requests per minute, a client can send 100 requests at 09:00:59 and 100 more at 09:01:01. That's 200 requests in 2 seconds, doubling the effective rate limit at every window boundary.

This makes fixed windows unsuitable for strict rate limiting on endpoints where abuse is likely.

When to Use It

Fixed windows are appropriate when:

- You need simple, low-overhead counting

- The boundary attack is not a meaningful threat (e.g., internal tooling)

- You want predictable reset times (users can see exactly when their limit resets)

Algorithm 2: Sliding Window Log

Instead of counting per window, record the timestamp of every request. To check the limit, count timestamps within the last N seconds.

class SlidingWindowLogRateLimiter

{

public function __construct(private readonly Redis $redis) {}

public function isAllowed(string $key, int $limit, int $windowSeconds): bool

{

$now = microtime(true);

$windowStart = $now - $windowSeconds;

$this->redis->zremrangebyscore($key, '-inf', $windowStart);

$count = $this->redis->zcard($key);

if ($count < $limit) {

$this->redis->zadd($key, $now, $now . '-' . uniqid());

$this->redis->expire($key, $windowSeconds + 1);

return true;

}

return false;

}

}

This uses a Redis sorted set where the score is the request timestamp. Expired entries are pruned before each check.

The Memory Problem

Sliding window log is accurate but memory-intensive. Each allowed request stores a timestamp entry. For a 1,000 req/min limit with 10,000 active users, you're storing up to 10 million entries in Redis at any given time.

For high-traffic systems or generous limits, this becomes impractical.

When to Use It

- Low-volume endpoints where accuracy is critical

- Security-sensitive endpoints (authentication, password reset)

- When you need sub-second precision

Algorithm 3: Sliding Window Counter

A hybrid that approximates the sliding window without storing individual timestamps. It maintains counters for the current and previous windows and weights them by how much of the previous window overlaps with the current sliding window.

Previous window count: 80 (full minute, ending 30 seconds ago)

Current window count: 40 (current minute, 30 seconds elapsed)

Overlap fraction: 0.5 (50% of the previous window is in our 60s window)

Weighted count = (80 * 0.5) + 40 = 80

class SlidingWindowCounterRateLimiter

{

public function __construct(private readonly Redis $redis) {}

public function isAllowed(string $key, int $limit, int $windowSeconds): bool

{

$now = time();

$currentWindowStart = floor($now / $windowSeconds) * $windowSeconds;

$previousWindowStart = $currentWindowStart - $windowSeconds;

$currentKey = $key . ':' . $currentWindowStart;

$previousKey = $key . ':' . $previousWindowStart;

[$currentCount, $previousCount] = $this->redis->mget([$currentKey, $previousKey]);

$currentCount = (int) ($currentCount ?? 0);

$previousCount = (int) ($previousCount ?? 0);

// How far through the current window are we?

$elapsed = $now - $currentWindowStart;

$previousWeight = 1 - ($elapsed / $windowSeconds);

$weightedCount = ($previousCount * $previousWeight) + $currentCount;

if ($weightedCount < $limit) {

$this->redis->incr($currentKey);

$this->redis->expire($currentKey, $windowSeconds * 2);

return true;

}

return false;

}

}

This approach uses O(1) memory per user per window (just two counters) and is accurate to within about 0.1% in most scenarios, which is more than sufficient for rate limiting.

Cloudflare published a study showing they use this algorithm to rate limit at the edge with acceptable accuracy and minimal memory overhead.

Algorithm 4: Token Bucket

The token bucket algorithm models each client as having a bucket that fills with tokens at a fixed rate. Each request consumes one token. If the bucket is empty, the request is rejected.

Key parameters:

- Capacity: Maximum tokens (burst allowance)

- Refill rate: Tokens added per second

class TokenBucketRateLimiter

{

public function __construct(private readonly Redis $redis) {}

public function isAllowed(

string $key,

int $capacity,

float $refillRate, // tokens per second

): bool {

$now = microtime(true);

$bucketKey = 'bucket:' . $key;

// Atomic Lua script to avoid race conditions

$script = <<<'LUA'

local key = KEYS[1]

local capacity = tonumber(ARGV[1])

local refillRate = tonumber(ARGV[2])

local now = tonumber(ARGV[3])

local bucket = redis.call('HMGET', key, 'tokens', 'last_refill')

local tokens = tonumber(bucket[1]) or capacity

local lastRefill = tonumber(bucket[2]) or now

-- Calculate tokens to add since last refill

local elapsed = now - lastRefill

local tokensToAdd = elapsed * refillRate

tokens = math.min(capacity, tokens + tokensToAdd)

if tokens >= 1 then

tokens = tokens - 1

redis.call('HMSET', key, 'tokens', tokens, 'last_refill', now)

redis.call('EXPIRE', key, 3600)

return 1

else

redis.call('HMSET', key, 'tokens', tokens, 'last_refill', now)

redis.call('EXPIRE', key, 3600)

return 0

end

LUA;

$result = $this->redis->eval(

$script,

[$bucketKey, $capacity, $refillRate, $now],

1

);

return $result === 1;

}

}

The Lua script is critical here. It ensures the read-modify-write of the bucket is atomic, preventing race conditions in concurrent environments.

Token Bucket Advantages

- Burst allowance: A client that hasn't made requests for a while can burst up to

capacityrequests. - Smooth refill: Tokens accumulate continuously, not in discrete windows.

- Intuitive parameters: Easy to reason about sustained vs. burst rates.

This makes token bucket ideal for APIs where you want to allow reasonable bursts (e.g., a mobile app syncing data after reconnecting) but still enforce an average rate.

Algorithm 5: Leaky Bucket

Leaky bucket processes requests at a fixed rate, queuing excess requests rather than rejecting them. Think of it as a queue with a constant drain rate.

Leaky bucket is less common for HTTP rate limiting because it introduces latency for queued requests. It's more appropriate for internal queuing systems or when you want to smooth out bursty traffic rather than reject it.

Distributed Rate Limiting

When your application runs on multiple servers, a local in-memory counter on each server is useless. Server A might allow 100 requests while server B allows another 100, giving the client 200 total.

You need a shared state store. Redis is the standard choice.

Race Conditions

The naive Redis approach has a race condition:

Server A reads count: 99

Server B reads count: 99

Server A increments to 100 (allowed)

Server B increments to 100 (allowed, but this is the 101st request!)

Solve this with Redis atomic operations. For simple counters, INCR is atomic. For complex operations (like token bucket), use Lua scripts which execute atomically on the Redis server.

Redis Cluster Considerations

If you're using Redis Cluster, all keys for a single rate limit check must land on the same shard. Use hash tags to force colocation:

// Without hash tag: may land on different shards

$currentKey = "ratelimit:user:123:current";

$previousKey = "ratelimit:user:123:previous";

// With hash tag: both guaranteed on same shard

$currentKey = "{ratelimit:user:123}:current";

$previousKey = "{ratelimit:user:123}:previous";

The curly braces tell Redis Cluster that only the content inside them determines the hash slot.

Multi-Layer Rate Limiting

For production systems, apply rate limits at multiple layers:

Request

↓

[CDN / Edge] ← IP-based rate limiting (very coarse, blocks floods)

↓

[Load Balancer] ← Connection-rate limiting

↓

[API Gateway] ← API key based, per-endpoint limits

↓

[Application] ← User-level, business-logic aware limits

Each layer catches a different class of abuse. The CDN handles volumetric attacks before they reach your servers. The application layer enforces business rules.

Response Headers

Always return rate limit information in response headers so clients can implement intelligent backoff:

X-RateLimit-Limit: 1000

X-RateLimit-Remaining: 742

X-RateLimit-Reset: 1743930000

Retry-After: 47

The Retry-After header (returned on 429 responses) tells clients exactly when they can retry. Clients that respect this header will automatically recover without hammering your retry logic.

public function handle(Request $request, Closure $next): Response

{

$key = 'api:' . $request->user()->id;

$result = $this->limiter->check($key, limit: 1000, windowSeconds: 3600);

if (!$result->allowed) {

return response()->json(['error' => 'Rate limit exceeded'], 429)

->withHeaders([

'X-RateLimit-Limit' => 1000,

'X-RateLimit-Remaining' => 0,

'X-RateLimit-Reset' => $result->resetAt,

'Retry-After' => $result->retryAfter,

]);

}

return $next($request)->withHeaders([

'X-RateLimit-Limit' => 1000,

'X-RateLimit-Remaining' => $result->remaining,

'X-RateLimit-Reset' => $result->resetAt,

]);

}

Choosing the Right Algorithm

| Algorithm | Memory | Accuracy | Burst Support | Best For |

|---|---|---|---|---|

| Fixed Window | O(1) | Low (boundary attack) | Yes (doubles at boundary) | Internal tools, simple cases |

| Sliding Window Log | O(requests) | Exact | No | Low-volume, security-critical |

| Sliding Window Counter | O(1) | ~99.9% | Partial | General-purpose APIs |

| Token Bucket | O(1) | Exact | Yes (controlled) | APIs needing burst tolerance |

For most production APIs, sliding window counter or token bucket is the right choice. Sliding window counter is simpler to implement correctly. Token bucket is better when you want to explicitly model and communicate burst allowances.

Tackling complex architecture decisions? We help teams build systems that last. scopeforged.com