Performance testing validates that applications meet speed, scalability, and stability requirements under expected and stress conditions. Without performance testing, you discover problems in production—the worst time to learn your system can't handle Black Friday traffic or that a memory leak crashes servers after a week.

Performance testing isn't just about finding slow code. It's about understanding system behavior under load, identifying bottlenecks before they cause outages, and gaining confidence that deployments won't degrade user experience.

Types of Performance Tests

Load testing measures system behavior under expected load. How does the application perform with 1,000 concurrent users doing typical operations? Load tests verify that the system meets performance requirements under normal conditions.

Stress testing pushes beyond expected load to find breaking points. At what load does response time become unacceptable? When do errors start appearing? Stress tests reveal system limits and failure modes.
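To answer those questions from a stepped stress run, you can scan per-stage summaries for the first load level that breaks your limits. This is an illustrative sketch. It assumes you have already reduced each stage to a hypothetical `{ vus, p95Ms, errorRate }` record, which is not a k6 output format. The default limits mirror the 500 ms p95 and 1% error-rate thresholds used in the k6 examples, and the stage numbers are made up:

```javascript
// Find the first load level at which a stepped stress test breaks the limits.
// Each stage summary is a hypothetical record: { vus, p95Ms, errorRate }.
function findBreakingPoint(stages, { maxP95Ms = 500, maxErrorRate = 0.01 } = {}) {
  // Examine stages in order of increasing load (copy first so input is untouched).
  const ordered = [...stages].sort((a, b) => a.vus - b.vus);
  for (const stage of ordered) {
    // A "p(95)<500" style limit fails once p95 reaches the limit.
    const tooSlow = stage.p95Ms >= maxP95Ms;
    const tooManyErrors = stage.errorRate >= maxErrorRate;
    if (tooSlow || tooManyErrors) {
      return { vus: stage.vus, reason: tooSlow ? 'latency' : 'errors' };
    }
  }
  return null; // Every tested level stayed within the limits.
}

// Illustrative per-stage summaries from a 100 -> 400 VU ramp.
const stages = [
  { vus: 100, p95Ms: 180, errorRate: 0.0 },
  { vus: 200, p95Ms: 260, errorRate: 0.001 },
  { vus: 300, p95Ms: 620, errorRate: 0.004 },
  { vus: 400, p95Ms: 1900, errorRate: 0.08 },
];

console.log(findBreakingPoint(stages)); // { vus: 300, reason: 'latency' }
```

Reporting *why* a stage failed matters. A system whose latency degrades before errors appear usually has a different bottleneck from one that starts rejecting requests while still fast.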

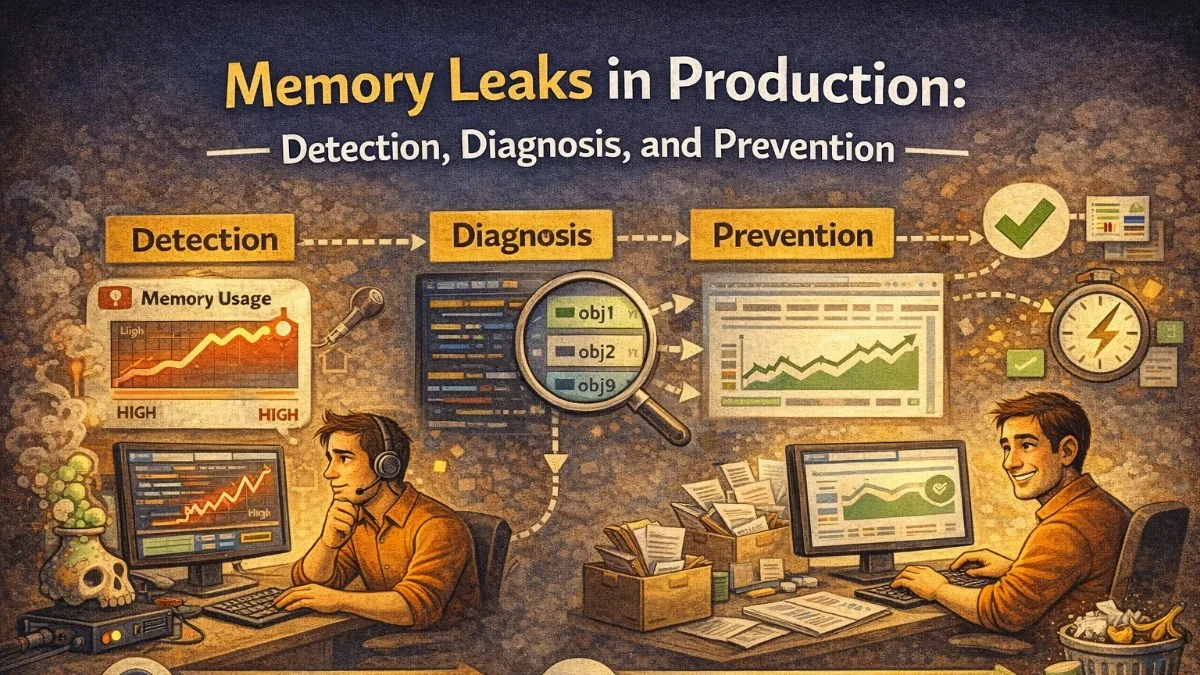

Endurance testing (soak testing) runs sustained load over extended periods. Memory leaks, connection pool exhaustion, and resource accumulation might not appear in short tests but emerge over hours or days.
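A common way to spot a slow leak in soak-test data is to fit a straight line to periodic memory samples and look at the slope. Below is a minimal least-squares sketch. It assumes hypothetical `{ t, bytes }` samples with `t` in hours. The 10 MiB/hour limit is an illustrative value that you would tune per service:

```javascript
// Estimate memory growth from soak-test samples with an ordinary least-squares fit.
// samples: [{ t: hoursSinceStart, bytes: memoryInUse }, ...]
function memoryGrowthPerHour(samples) {
  const n = samples.length;
  if (n < 2) throw new Error('need at least two samples');
  const meanT = samples.reduce((s, p) => s + p.t, 0) / n;
  const meanB = samples.reduce((s, p) => s + p.bytes, 0) / n;
  let num = 0;
  let den = 0;
  for (const { t, bytes } of samples) {
    num += (t - meanT) * (bytes - meanB);
    den += (t - meanT) ** 2;
  }
  if (den === 0) throw new Error('samples need distinct timestamps');
  return num / den; // slope in bytes per hour
}

// Flag a probable leak when memory keeps climbing faster than the allowed rate.
function looksLikeLeak(samples, maxBytesPerHour = 10 * 1024 * 1024) {
  return memoryGrowthPerHour(samples) > maxBytesPerHour;
}

const MiB = 1024 * 1024;
const steady = [0, 6, 12, 18, 24].map((t) => ({ t, bytes: 512 * MiB }));
const leaking = [0, 6, 12, 18, 24].map((t) => ({ t, bytes: (512 + 50 * t) * MiB }));

console.log(looksLikeLeak(steady));  // false
console.log(looksLikeLeak(leaking)); // true (about 50 MiB per hour)
```

Fit the line only after caches and pools have filled. Early growth from warm-up can otherwise look like a leak in a short window.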

Spike testing measures response to sudden load changes. Can the system handle a traffic surge from a marketing campaign? Does it recover gracefully when the spike subsides?

```javascript
// k6 load test script
import http from 'k6/http';
import { check, sleep } from 'k6';

export const options = {
  scenarios: {
    // Load test: ramp to expected traffic
    load_test: {
      executor: 'ramping-vus',
      startVUs: 0,
      stages: [
        { duration: '2m', target: 100 }, // Ramp up
        { duration: '5m', target: 100 }, // Sustain
        { duration: '2m', target: 0 },   // Ramp down
      ],
    },
    // Stress test: find breaking point
    stress_test: {
      executor: 'ramping-vus',
      startVUs: 0,
      stages: [
        { duration: '2m', target: 100 },
        { duration: '5m', target: 200 },
        { duration: '5m', target: 300 },
        { duration: '5m', target: 400 },
        { duration: '2m', target: 0 },
      ],
      startTime: '10m', // Run after the 9-minute load test finishes
    },
  },
  thresholds: {
    // Scope pass/fail criteria to the load test. The stress test is expected
    // to exceed them once it passes the breaking point.
    'http_req_duration{scenario:load_test}': ['p(95)<500'], // 95% under 500ms
    'http_req_failed{scenario:load_test}': ['rate<0.01'],   // <1% error rate
  },
};

export default function () {
  const response = http.get('https://api.example.com/products');

  check(response, {
    'status is 200': (r) => r.status === 200,
    'response time OK': (r) => r.timings.duration < 500,
  });

  sleep(1); // Think time between requests
}
```
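The same `stages` format can describe the other two test types. The functions below are hypothetical helpers and are not part of k6. Each returns a plain array of `{ duration, target }` objects that you could assign to a `ramping-vus` scenario's `stages`. The durations and VU counts are placeholders to adapt to your own traffic:

```javascript
// Build k6-style `stages` arrays ({ duration, target }) for spike and soak profiles.
// Durations use k6's duration-string format, e.g. '10s', '5m', '8h'.

// Spike: hold a baseline, jump sharply to a peak, then drop back and watch recovery.
function spikeStages(baselineVUs, peakVUs) {
  return [
    { duration: '2m', target: baselineVUs },  // ramp to normal traffic
    { duration: '3m', target: baselineVUs },  // establish a baseline
    { duration: '10s', target: peakVUs },     // sudden surge
    { duration: '3m', target: peakVUs },      // hold the spike
    { duration: '10s', target: baselineVUs }, // surge ends abruptly
    { duration: '5m', target: baselineVUs },  // does latency return to baseline?
    { duration: '1m', target: 0 },
  ];
}

// Soak: a moderate, steady load held for hours to surface leaks and exhaustion.
function soakStages(vus, hours) {
  return [
    { duration: '5m', target: vus },
    { duration: `${hours}h`, target: vus },
    { duration: '5m', target: 0 },
  ];
}

console.log(spikeStages(100, 1000).map((s) => s.target).join(' -> '));
// 100 -> 100 -> 1000 -> 1000 -> 100 -> 100 -> 0
console.log(soakStages(80, 8)[1]); // { duration: '8h', target: 80 }
```

The 10-second transitions distinguish a spike from a gradual stress ramp. The five-minute hold after the spike is where you check whether latency and error rates return to their baseline.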

Realistic Test Scenarios

Tests should reflect real user behavior. Single-endpoint tests miss the complexity of actual usage patterns.

```javascript
// Realistic user journey
import http from 'k6/http';
import { check, group, sleep } from 'k6';

const BASE_URL = 'https://api.example.com';
const JSON_HEADERS = { 'Content-Type': 'application/json' };

export default function () {
  // Simulate a complete user journey
  let productId;

  group('Browse Products', function () {
    const products = http.get(`${BASE_URL}/products`);
    check(products, { 'products loaded': (r) => r.status === 200 });
    sleep(2); // User browses

    // Carry the product the user actually viewed into the cart step
    productId = JSON.parse(products.body).data[0].id;

    const details = http.get(`${BASE_URL}/products/${productId}`);
    check(details, { 'details loaded': (r) => r.status === 200 });
    sleep(3); // User reads details
  });

  group('Add to Cart', function () {
    const response = http.post(`${BASE_URL}/cart/items`, JSON.stringify({
      product_id: productId,
      quantity: 2,
    }), { headers: JSON_HEADERS });

    check(response, { 'added to cart': (r) => r.status === 201 });
    sleep(1);
  });

  group('Checkout', function () {
    const cart = http.get(`${BASE_URL}/cart`);
    check(cart, { 'cart loaded': (r) => r.status === 200 });
    sleep(2);

    const order = http.post(`${BASE_URL}/orders`, JSON.stringify({
      payment_method: 'card',
      shipping_address: { /* ... */ },
    }), {
      headers: JSON_HEADERS,
      tags: { name: 'checkout' }, // Lets thresholds target this request by name
    });

    check(order, { 'order created': (r) => r.status === 201 });
  });
}
```

Use production traffic patterns to inform test design. Analyze logs to understand user journeys, popular endpoints, and traffic distribution.

```php
<?php

use App\Models\AccessLog; // Assumes an Eloquent model over the request access log

// Analyze production traffic for test design
class TrafficAnalyzer
{
    public function getEndpointDistribution(): array
    {
        $logs = AccessLog::query()
            ->where('created_at', '>', now()->subDays(7))
            ->selectRaw('path, COUNT(*) as count')
            ->groupBy('path')
            ->orderByDesc('count')
            ->get();

        $total = $logs->sum('count');

        if ($total <= 0) {
            return []; // No traffic in the window; avoid dividing by zero
        }

        return $logs->map(fn ($log) => [
            'path' => $log->path,
            'percentage' => round($log->count / $total * 100, 2),
        ])->toArray();
    }
}
```
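A distribution like the one `getEndpointDistribution()` returns can then drive how often the load script requests each path. This is a minimal sketch of weighted random selection in plain JavaScript. It assumes the same `{ path, percentage }` shape as the PHP method's output, and `makeEndpointPicker` is a hypothetical helper. The random source can be injected so that the selection can be tested deterministically:

```javascript
// Pick an endpoint in proportion to its share of production traffic.
// distribution: [{ path: '/products', percentage: 60 }, ...]; percentages need not sum to exactly 100.
function makeEndpointPicker(distribution, random = Math.random) {
  const total = distribution.reduce((sum, e) => sum + e.percentage, 0);
  if (total <= 0) throw new Error('distribution is empty');

  // Precompute cumulative weights once so each pick is a short scan.
  let running = 0;
  const cumulative = distribution.map((e) => {
    running += e.percentage;
    return { path: e.path, upTo: running };
  });

  return function pick() {
    const r = random() * total; // uniform in [0, total)
    const hit = cumulative.find((e) => r < e.upTo);
    // Fall back to the last entry in case float rounding leaves r just past the end.
    return hit ? hit.path : cumulative[cumulative.length - 1].path;
  };
}

const pick = makeEndpointPicker([
  { path: '/products', percentage: 60 },
  { path: '/search', percentage: 30 },
  { path: '/cart', percentage: 10 },
]);

console.log(pick()); // '/products' roughly 60% of the time
```

In a load script, each iteration would call `pick()` and request the returned path. Over many iterations the request mix then approximates the production mix, not a single hot endpoint.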

Test Environment

Performance test environments should mirror production as closely as possible. Differences in hardware, network, data volume, or configuration produce misleading results.

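```yaml
# Performance test environment infrastructure
apiVersion: apps/v1
kind: Deployment
metadata:
  name: api
  namespace: performance-test
spec:
  replicas: 3 # Match production replica count
  selector:
    matchLabels:
      app: api # apps/v1 Deployments require a selector matching the pod labels
  template:
    metadata:
      labels:
        app: api
    spec:
      containers:
        - name: api
          image: myapp/api:latest # Prefer pinning the exact tag under test; :latest can drift between runs
          resources:
            requests:
              cpu: "2" # Match production resources
              memory: "4Gi"
            limits:
              cpu: "4"
              memory: "8Gi"
```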

Test data matters. An empty database performs differently from one with millions of records: query plans, index selectivity, and cache hit rates all change with data volume. Seed test environments with representative data volumes.

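```php
<?php

use App\Models\Order;
use App\Models\Product;
use App\Models\User;
use Illuminate\Database\Seeder;
use Illuminate\Support\Facades\DB;

// Generate realistic test data
class PerformanceTestSeeder extends Seeder
{
    public function run(): void
    {
        // Match production data volumes; insert in batches to bound memory use
        $this->inBatches(100_000, fn (int $n) => User::factory()->count($n)->create());
        $this->inBatches(50_000, fn (int $n) => Product::factory()->count($n)->create());
        $this->inBatches(1_000_000, fn (int $n) => Order::factory()->count($n)->create());

        // Refresh query-planner statistics (PostgreSQL; MySQL uses ANALYZE TABLE <name>)
        DB::statement('ANALYZE');
    }

    private function inBatches(int $total, callable $create, int $batchSize = 10_000): void
    {
        for ($done = 0; $done < $total; $done += $batchSize) {
            $create(min($batchSize, $total - $done));
        }
    }
}
```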

Metrics and Thresholds

Define clear success criteria before testing. Without thresholds, results are just numbers without meaning.

```javascript
export const options = {
  thresholds: {
    // Response time thresholds
    http_req_duration: [
      'p(50)<200',  // Median under 200ms
      'p(95)<500',  // 95th percentile under 500ms
      'p(99)<1000', // 99th percentile under 1s
    ],

    // Error rate threshold
    http_req_failed: ['rate<0.001'], // Less than 0.1% errors

    // Throughput threshold
    http_reqs: ['rate>100'], // At least 100 requests/second

    // Tag-filtered thresholds for individual operations. These only match requests
    // tagged explicitly, e.g. http.get(url, { tags: { name: 'search' } });
    // by default the `name` tag is the request URL.
    'http_req_duration{name:checkout}': ['p(95)<1000'],
    'http_req_duration{name:search}': ['p(95)<300'],
  },
};
```
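Percentile thresholds are easy to misread, so computing one by hand can help. The sketch below calculates a percentile by linear interpolation between the nearest ranks and then evaluates a `p(95)<500`-style limit against raw durations. k6's internal percentile calculation may interpolate differently. Treat this as an illustration of the concept, not a reimplementation of k6. The durations are made up:

```javascript
// Percentile with linear interpolation between the two nearest ranks.
function percentile(values, p) {
  if (values.length === 0) throw new Error('no samples');
  const sorted = [...values].sort((a, b) => a - b);
  const rank = (p / 100) * (sorted.length - 1); // fractional index into sorted samples
  const lower = Math.floor(rank);
  const upper = Math.ceil(rank);
  return sorted[lower] + (sorted[upper] - sorted[lower]) * (rank - lower);
}

// Evaluate a threshold such as p(95)<500 against durations in milliseconds.
function passes(durationsMs, p, limitMs) {
  return percentile(durationsMs, p) < limitMs;
}

// 100 illustrative requests: 94 fast ones and 6 slow outliers.
const durations = [...Array(94).fill(120), ...Array(6).fill(900)];

console.log(percentile(durations, 50));  // 120
console.log(passes(durations, 95, 500)); // false: the slowest 6% pull p95 up to 900 ms
```

The median looks healthy here even though more than one request in twenty is very slow. This is why the thresholds above constrain p95 and p99 as well as p50.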

Track both client-side and server-side metrics. Load testing tools measure from outside; application monitoring reveals internal bottlenecks.

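```php
<?php

use Illuminate\Http\Request;
use Symfony\Component\HttpFoundation\Response;

// Capture server-side metrics during load tests
class PerformanceMetricsMiddleware
{
    public function handle(Request $request, Closure $next): Response
    {
        $start = hrtime(true); // Monotonic clock, nanoseconds
        $memoryBefore = memory_get_usage();

        $response = $next($request);

        $duration = (hrtime(true) - $start) / 1e6; // Milliseconds
        $memoryUsed = memory_get_usage() - $memoryBefore;

        // Record to a time-series database. `Metrics` stands in for whatever
        // metrics client the application uses (e.g. a Prometheus or StatsD wrapper).
        Metrics::histogram('http_request_duration_ms', $duration, [
            'method' => $request->method(),
            // Fall back to the route's URI pattern so unnamed routes stay low-cardinality
            'route' => $request->route()?->getName() ?? $request->route()?->uri() ?? 'unknown',
            'status' => $response->getStatusCode(),
        ]);

        Metrics::gauge('http_request_memory_bytes', $memoryUsed);

        return $response;
    }
}
```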
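Time-series systems such as Prometheus typically store a histogram as cumulative bucket counters rather than as individual samples. Because the counters can be summed across servers, percentiles can be estimated fleet-wide. The sketch below is a simplified, hypothetical version of that idea with illustrative bucket bounds. It does not assume that the `Metrics` client above works this way:

```javascript
// A minimal cumulative-bucket histogram: each bucket counts observations
// less than or equal to its upper bound, as Prometheus "le" buckets do.
class Histogram {
  constructor(bounds) {
    this.bounds = [...bounds].sort((a, b) => a - b);
    this.counts = new Array(this.bounds.length).fill(0);
    this.count = 0; // total observations (the implicit +Inf bucket)
    this.sum = 0;
  }

  observe(value) {
    this.count += 1;
    this.sum += value;
    this.bounds.forEach((bound, i) => {
      if (value <= bound) this.counts[i] += 1;
    });
  }

  // Fraction of observations at or below a bucket bound, e.g. "share of requests <= 500 ms".
  shareAtOrBelow(bound) {
    const i = this.bounds.indexOf(bound);
    if (i === -1) throw new Error(`no bucket with bound ${bound}`);
    return this.count === 0 ? 0 : this.counts[i] / this.count;
  }
}

const h = new Histogram([50, 100, 250, 500, 1000]);
[30, 80, 120, 240, 480, 700, 1500].forEach((ms) => h.observe(ms));

console.log(h.counts);              // [ 1, 2, 4, 5, 6 ]
console.log(h.shareAtOrBelow(500)); // 0.7142857142857143 (5 of 7 requests)
```

The trade-off is resolution. Bucket bounds fix how precisely you can locate a percentile, so place bounds near your thresholds (here, 500 ms).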

Analyzing Results

Raw numbers need context. Compare results across test runs, correlate with monitoring data, and investigate anomalies.

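```php
<?php

// Performance test result analysis
class PerformanceAnalyzer
{
    /**
     * @param array $lowerIsWorse Metrics where a drop is a regression (e.g. throughput)
     */
    public function compareRuns(
        array $currentRun,
        array $baselineRun,
        array $lowerIsWorse = ['http_reqs'],
        float $tolerance = 0.10
    ): array {
        $comparison = [];

        foreach ($currentRun['metrics'] as $metric => $value) {
            $baseline = $baselineRun['metrics'][$metric] ?? null;
            $hasBaseline = $baseline !== null && $baseline != 0;

            // Latency and error metrics regress when they rise; throughput regresses when it falls
            $regression = false;
            if ($hasBaseline) {
                $regression = in_array($metric, $lowerIsWorse, true)
                    ? $value < $baseline * (1 - $tolerance)
                    : $value > $baseline * (1 + $tolerance);
            }

            $comparison[$metric] = [
                'current' => $value,
                'baseline' => $baseline,
                'change' => $hasBaseline ? (($value - $baseline) / $baseline) * 100 : null,
                'regression' => $regression, // >10% worse by default
            ];
        }

        $regressions = array_filter($comparison, fn ($m) => $m['regression']);

        return [
            'comparison' => $comparison,
            'regressions' => $regressions,
            'passed' => empty($regressions),
        ];
    }
}
```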

Investigate outliers. A few slow requests might indicate specific code paths or data patterns that struggle under load.
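One simple way to pull outliers out of a run, without assuming any particular latency distribution, is to use Tukey's fences: flag any request more than 1.5 interquartile ranges above the third quartile. This sketch assumes per-request records with a hypothetical `{ route, ms }` shape. Grouping the flagged requests by route shows whether slowness concentrates on particular code paths. The sample data is made up:

```javascript
// Quantile by linear interpolation on an already-sorted array.
function quantile(sorted, q) {
  const rank = q * (sorted.length - 1);
  const lo = Math.floor(rank);
  const hi = Math.ceil(rank);
  return sorted[lo] + (sorted[hi] - sorted[lo]) * (rank - lo);
}

// Flag requests slower than Q3 + k * IQR (Tukey's upper fence) and count them per route.
function slowOutliersByRoute(requests, k = 1.5) {
  const sorted = requests.map((r) => r.ms).sort((a, b) => a - b);
  const q1 = quantile(sorted, 0.25);
  const q3 = quantile(sorted, 0.75);
  const fence = q3 + k * (q3 - q1);

  const byRoute = {};
  for (const r of requests) {
    if (r.ms > fence) byRoute[r.route] = (byRoute[r.route] || 0) + 1;
  }
  return { fence, byRoute };
}

const requests = [
  ...Array.from({ length: 40 }, (_, i) => ({ route: 'products.index', ms: 100 + i })),
  ...Array.from({ length: 10 }, (_, i) => ({ route: 'search', ms: 120 + i })),
  { route: 'orders.store', ms: 2400 },
  { route: 'orders.store', ms: 2100 },
];

console.log(slowOutliersByRoute(requests).byRoute); // { 'orders.store': 2 }
```

Quartiles ignore the extreme tail, so a handful of very slow requests cannot drag the fence upward the way they would drag a mean.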

CI/CD Integration

Automate performance testing to catch regressions before deployment.

```yaml
# GitHub Actions performance test
name: Performance Test

on:
  pull_request:
    branches: [main]

jobs:
  performance:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4

      # Assumes cluster credentials (kubeconfig) are configured in an earlier step
      - name: Deploy test environment
        run: kubectl apply -f k8s/performance-test/

      - name: Wait for deployment
        run: kubectl rollout status deployment/api -n performance-test --timeout=5m

      # k6 exits non-zero when a threshold fails, which fails this step.
      # --summary-export writes the k6-summary.json read by the later steps;
      # the InfluxDB URL must be reachable from the runner.
      - name: Run k6 tests
        uses: grafana/k6-action@v0.3.1
        with:
          filename: tests/performance/load-test.js
          flags: --summary-export=k6-summary.json --out influxdb=http://influxdb:8086/k6

      - name: Check thresholds
        run: python scripts/check-thresholds.py k6-summary.json

      - name: Upload results
        if: always() # Keep results even when thresholds fail
        uses: actions/upload-artifact@v4
        with:
          name: performance-results
          path: k6-summary.json
```

Common Pitfalls

Testing from a single location misses network latency variations. Distributed load testing reveals realistic global performance.

Ignoring warm-up skews results. JIT compilation, connection pools, and caches need warm-up before measuring steady-state performance.

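```javascript
export const options = {
  scenarios: {
    warmup: {
      executor: 'constant-vus',
      vus: 10,
      duration: '2m',
      tags: { phase: 'warmup' },
    },
    test: {
      executor: 'constant-vus',
      vus: 100,
      duration: '10m',
      startTime: '2m', // Start after warmup
      tags: { phase: 'test' },
    },
  },
  thresholds: {
    // Judge only the measurement phase; warm-up samples are excluded by tag
    'http_req_duration{phase:test}': ['p(95)<500'],
  },
};
```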
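The `phase` tags above let k6 separate warm-up from measurement. If you post-process raw results yourself, the same principle applies: discard everything recorded before the warm-up ended. This is a minimal sketch. It assumes hypothetical samples with a millisecond `timestamp` and a `value` in milliseconds, and it does not model any specific k6 output format. The numbers are illustrative:

```javascript
// Keep only samples recorded after the warm-up window.
function steadyStateSamples(samples, testStartMs, warmupMs) {
  const cutoff = testStartMs + warmupMs;
  return samples.filter((s) => s.timestamp >= cutoff);
}

function mean(values) {
  return values.reduce((sum, v) => sum + v, 0) / values.length;
}

const start = 0;
const samples = [
  // Cold start: empty caches and pools make the first requests slow.
  { timestamp: 10_000, value: 900 },
  { timestamp: 60_000, value: 400 },
  // After the 2-minute warm-up, latency settles.
  { timestamp: 130_000, value: 150 },
  { timestamp: 200_000, value: 140 },
  { timestamp: 300_000, value: 160 },
];

const steady = steadyStateSamples(samples, start, 2 * 60 * 1000);
console.log(mean(samples.map((s) => s.value))); // 350: skewed by cold-start requests
console.log(mean(steady.map((s) => s.value)));  // 150: steady-state latency
```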

Testing only happy paths misses error handling costs. Include scenarios that trigger validation errors, authentication failures, and edge cases.

Conclusion

Performance testing is an investment in reliability. Load tests verify expected performance. Stress tests find limits. Endurance tests catch slow leaks. Spike tests validate elasticity.

Design realistic scenarios based on production traffic patterns. Mirror production environments as closely as possible. Define clear thresholds before testing. Integrate performance testing into CI/CD to catch regressions early.

The goal isn't perfect performance—it's understood performance. Know your system's capabilities and limits before production reveals them painfully.