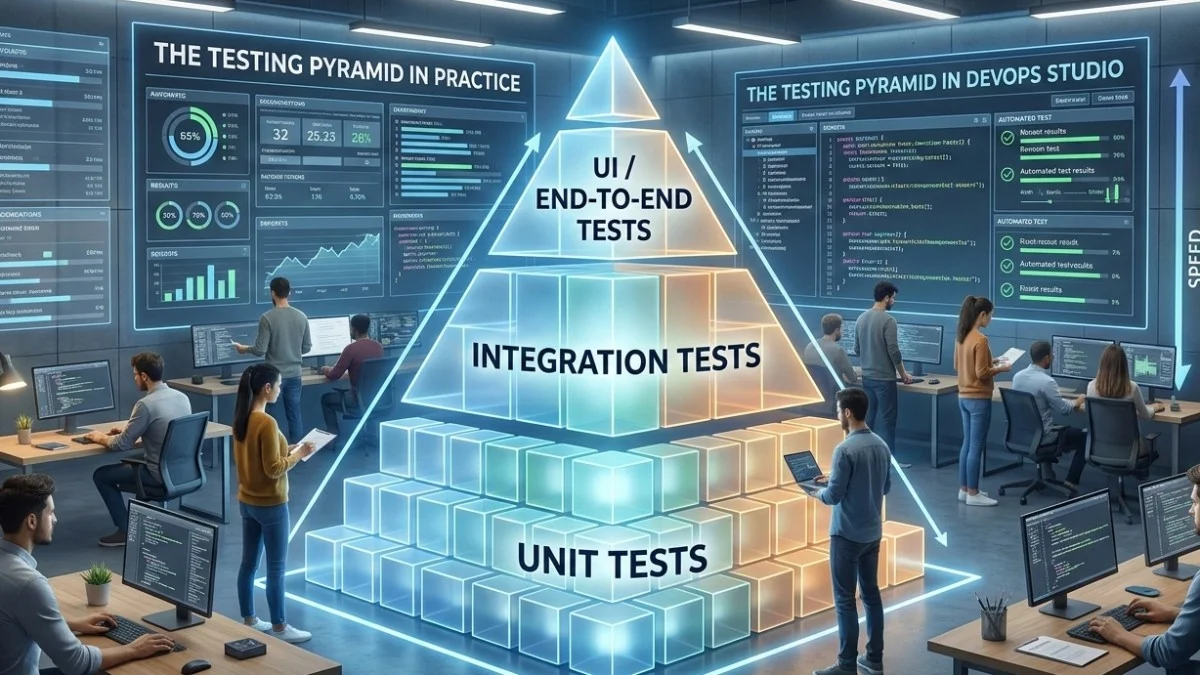

Why the Testing Pyramid?

The testing pyramid is one of software engineering's most referenced concepts and one of its most misapplied. Mike Cohn introduced it in 2009, and teams have been arguing about it ever since. The premise is simple: you should have more unit tests than integration tests, and more integration tests than end-to-end tests.

But how many is "more"? And what counts as each type? Let's get specific.

The Three Layers Defined

Before counting tests, you need agreement on what each layer means.

Unit tests test a single class or function in isolation. Dependencies are replaced with mocks or stubs. They run in milliseconds. A unit test should never touch a database, filesystem, or network.

Integration tests test multiple components working together. This includes database queries, file operations, and service interactions within your own codebase. They run in seconds.

End-to-end tests simulate a real user interacting with your system through the UI or API surface. They run in minutes and require a fully running system.
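To make "in isolation" concrete, here is a minimal sketch of a unit under test whose only dependency is swapped for a hand-written stub. Every name in it (`ExchangeRateProvider`, `FixedRateProvider`, `PriceConverter`) is hypothetical and exists only for illustration; a mocking library would work just as well.

```php
<?php
declare(strict_types=1);

// Hypothetical dependency: in production this might call a remote rates API.
interface ExchangeRateProvider
{
    public function rateFor(string $from, string $to): float;
}

// Hand-written stub: returns a fixed rate and never touches the network.
final class FixedRateProvider implements ExchangeRateProvider
{
    public function __construct(private float $rate) {}

    public function rateFor(string $from, string $to): float
    {
        return $this->rate;
    }
}

// The unit under test: pure logic plus one injected dependency.
final class PriceConverter
{
    public function __construct(private ExchangeRateProvider $rates) {}

    public function convert(float $amount, string $from, string $to): float
    {
        return round($amount * $this->rates->rateFor($from, $to), 2);
    }
}

// With the stub in place, the result depends only on PriceConverter's own logic.
$converter = new PriceConverter(new FixedRateProvider(0.25));
$converted = $converter->convert(200.0, 'USD', 'EUR'); // 200.0 * 0.25
```

Because the stub is deterministic, a failure here can only mean the conversion logic itself is wrong, which is exactly the precision a unit test is supposed to buy you.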

Actual Ratios That Work

Here's the uncomfortable truth: there is no universal ratio. The right distribution depends on your architecture. That said, here are starting points based on codebase type.

CRUD-heavy applications (most web apps):

- 40% unit tests

- 50% integration tests

- 10% E2E tests

Why more integration? Because most of the logic is in database interactions and service orchestration. A unit test that mocks the database doesn't tell you much in a CRUD app.

Domain-rich applications (complex business rules, workflows, calculations):

- 60% unit tests

- 30% integration tests

- 10% E2E tests

When logic lives in domain objects and services, unit tests provide high value. They're fast, pinpoint failures precisely, and directly document business rules.

API-first services (backends serving multiple clients):

- 30% unit tests

- 60% integration tests

- 10% E2E tests (often replaced by contract tests)

Here, integration tests against the API surface ensure backward compatibility. Contract testing often replaces E2E entirely.
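If you want to see where your own suite sits relative to one of these starting points, the arithmetic is simple. The sketch below assumes you already have a test count per layer (the counts shown are made up) and that raw test counts are an acceptable proxy for distribution; `layerShares` and `driftFromTarget` are illustrative helper names, not part of any framework.

```php
<?php
declare(strict_types=1);

/**
 * Convert raw test counts per layer into whole-number percentages.
 *
 * @param array<string, int> $counts
 * @return array<string, int>
 */
function layerShares(array $counts): array
{
    $total = array_sum($counts);
    if ($total === 0) {
        return array_map(fn (int $n): int => 0, $counts);
    }

    // array_map keeps the string keys when given a single array.
    return array_map(
        fn (int $n): int => (int) round($n / $total * 100),
        $counts
    );
}

/**
 * Difference between actual and target share, in percentage points.
 *
 * @param array<string, int> $actual
 * @param array<string, int> $target
 * @return array<string, int>
 */
function driftFromTarget(array $actual, array $target): array
{
    $drift = [];
    foreach ($target as $layer => $goal) {
        $drift[$layer] = ($actual[$layer] ?? 0) - $goal;
    }

    return $drift;
}

// Hypothetical counts for a CRUD-heavy app, compared with the 40/50/10 starting point.
$counts = ['unit' => 300, 'integration' => 150, 'e2e' => 50];
$shares = layerShares($counts);
$target = ['unit' => 40, 'integration' => 50, 'e2e' => 10];
$drift  = driftFromTarget($shares, $target);
```

A positive drift means the layer is over-represented relative to the target. Treat the result as a conversation starter rather than a score to optimize.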

The Right Unit Tests to Write

Not all code deserves unit tests. The highest-value unit tests cover:

- Calculation logic: pricing engines, tax calculations, discount stacking

- State machines: order workflows, approval processes

- Validation rules: business rules beyond "field required"

- Data transformations: formatters, parsers, converters

- Edge cases: what happens at zero, at max, with null

Low-value unit tests that teams often waste time on:

- Testing that Laravel's ORM saves a record (it does; trust the framework)

- Testing getters and setters with no logic

- Testing third-party library behavior

Here's a high-value unit test for an invoice calculator:

    use PHPUnit\Framework\TestCase;

    class InvoiceTotalCalculatorTest extends TestCase
    {
        public function test_applies_volume_discount_above_threshold(): void
        {
            $calculator = new InvoiceTotalCalculator();
            $items = [
                new LineItem(quantity: 100, unit_price: 10.00),
            ];

            $result = $calculator->calculate($items, discount_threshold: 500.00);

            // 100 * 10 = 1000, above the 500 threshold, so the 10% volume discount applies
            $this->assertEquals(900.00, $result->total);
            $this->assertEquals(100.00, $result->discount_applied);
        }

        public function test_no_discount_below_threshold(): void
        {
            $calculator = new InvoiceTotalCalculator();
            $items = [
                new LineItem(quantity: 10, unit_price: 10.00),
            ];

            $result = $calculator->calculate($items, discount_threshold: 500.00);

            // 10 * 10 = 100, below the threshold, so no discount
            $this->assertEquals(100.00, $result->total);
            $this->assertEquals(0.00, $result->discount_applied);
        }
    }

These tests verify real logic. The threshold and the discount rate are meaningful decisions worth testing, and a third case at exactly 500.00 would pin down the boundary behavior as well.
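The article doesn't show the calculator itself, so the following is only a guess at what might sit behind those tests, written to make the boundary question visible. The `InvoiceTotal` result class, the flat 10% rate, and especially the inclusive `>=` comparison are assumptions; your real rules may differ, which is precisely why the exact-threshold case deserves its own test.

```php
<?php
declare(strict_types=1);

final class LineItem
{
    public function __construct(
        public int $quantity,
        public float $unit_price,
    ) {}
}

// Hypothetical result object exposing the two fields the tests read.
final class InvoiceTotal
{
    public function __construct(
        public float $total,
        public float $discount_applied,
    ) {}
}

final class InvoiceTotalCalculator
{
    // Assumption: a flat 10% rate, taken from the comment in the test.
    private const VOLUME_DISCOUNT_RATE = 0.10;

    /** @param LineItem[] $items */
    public function calculate(array $items, float $discount_threshold): InvoiceTotal
    {
        $subtotal = 0.0;
        foreach ($items as $item) {
            $subtotal += $item->quantity * $item->unit_price;
        }

        // Assumption: the threshold is inclusive, so a subtotal equal to it qualifies.
        $discount = $subtotal >= $discount_threshold
            ? round($subtotal * self::VOLUME_DISCOUNT_RATE, 2)
            : 0.0;

        return new InvoiceTotal($subtotal - $discount, $discount);
    }
}

$calculator = new InvoiceTotalCalculator();

// Exactly at the threshold: 50 * 10 = 500.
$atThreshold = $calculator->calculate(
    [new LineItem(quantity: 50, unit_price: 10.00)],
    discount_threshold: 500.00
);

// One unit short of the threshold: 49 * 10 = 490.
$justBelow = $calculator->calculate(
    [new LineItem(quantity: 49, unit_price: 10.00)],
    discount_threshold: 500.00
);
```

If someone later changes `>=` to `>`, only the at-threshold case would catch it. For real money handling you would likely use integer cents or a decimal library rather than floats; floats are kept here to match the test above.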

The Right Integration Tests to Write

Integration tests verify components interact correctly. In a Laravel application, the highest-value integration tests are:

Repository and query tests: Does your eager loading work? Are your scopes returning the right records? Does pagination work correctly?

    use Illuminate\Foundation\Testing\RefreshDatabase;
    use Tests\TestCase;

    class ProjectRepositoryTest extends TestCase
    {
        use RefreshDatabase;

        public function test_eager_loads_milestones_and_client(): void
        {
            $project = Project::factory()
                // Name the relationship explicitly: by default the factory
                // would guess "projectMilestones" from the model class name.
                ->has(ProjectMilestone::factory()->count(3), 'milestones')
                ->for(Client::factory())
                ->create();

            $loaded = (new ProjectRepository())->findWithRelations($project->id);

            $this->assertTrue($loaded->relationLoaded('milestones'));
            $this->assertTrue($loaded->relationLoaded('client'));
            $this->assertCount(3, $loaded->milestones);
        }
    }

API endpoint tests: Do your controllers return the right shapes? Do authorization rules apply? Do validation errors return the right status codes?

    public function test_returns_403_when_client_accesses_other_clients_project(): void
    {
        $client = User::factory()->client()->create();
        $otherProject = Project::factory()->create(); // belongs to different client

        $response = $this->actingAs($client)
            ->getJson("/api/projects/{$otherProject->id}");

        $response->assertStatus(403);
    }

The Right E2E Tests to Write

E2E tests are expensive: slow to run, brittle to maintain, hard to debug. Reserve them for workflows that:

- Cross multiple subsystems (auth + billing + notifications)

- Involve JavaScript-driven behavior that HTTP tests can't catch

- Are catastrophic if broken (signup, payment, data export)

A good E2E test suite for a SaaS might include:

- User signs up and receives confirmation email

- User creates a project and invites a team member

- Team member accepts invite and sees the project

- Admin views the project in the admin panel

- Invoice is generated and sent

- Client pays the invoice

That's 6 tests covering the most critical user journeys. Not 600.

Identifying Gaps in Your Pyramid

Run your test suite with coverage analysis:

    XDEBUG_MODE=coverage php artisan test --coverage-html=coverage-report

Then look for:

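Uncovered branches: Not just lines, but logical branches. An if/else might have the if covered but the else never executed. Note that PHPUnit reports line coverage by default; branch and path coverage needs Xdebug and PHPUnit's `--path-coverage` option (PHPUnit 9.3 and later).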

Missing error paths: Tests often cover happy paths but not failure scenarios. What happens when the payment gateway is down? When a file is too large?

Missing boundary tests: Code at boundaries often has bugs. Test at zero, at maximum, at the exact threshold.
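Error paths are usually the easiest gap to close once the failing dependency sits behind an interface. Here is a minimal sketch of the "payment gateway is down" case, using a stub that always throws. `PaymentGateway`, `GatewayUnavailable`, `DownGateway`, and `CheckoutService` are hypothetical names, and returning a `pending_retry` status is just one possible policy.

```php
<?php
declare(strict_types=1);

final class GatewayUnavailable extends RuntimeException {}

interface PaymentGateway
{
    /**
     * @return string a transaction id
     * @throws GatewayUnavailable
     */
    public function charge(int $amountInCents): string;
}

// Stub that simulates an outage on every call.
final class DownGateway implements PaymentGateway
{
    public function charge(int $amountInCents): string
    {
        throw new GatewayUnavailable('connection timed out');
    }
}

final class CheckoutService
{
    public function __construct(private PaymentGateway $gateway) {}

    /** @return array{status: string, transaction_id: ?string} */
    public function pay(int $amountInCents): array
    {
        // Boundary: zero and negative amounts are rejected up front.
        if ($amountInCents <= 0) {
            throw new InvalidArgumentException('Amount must be positive.');
        }

        try {
            $id = $this->gateway->charge($amountInCents);

            return ['status' => 'paid', 'transaction_id' => $id];
        } catch (GatewayUnavailable $e) {
            // Error path: report a retryable state instead of crashing.
            return ['status' => 'pending_retry', 'transaction_id' => null];
        }
    }
}

// Failure scenario: the gateway is down.
$result = (new CheckoutService(new DownGateway()))->pay(1000);

// Boundary scenario: an amount of zero.
$rejectedZero = false;
try {
    (new CheckoutService(new DownGateway()))->pay(0);
} catch (InvalidArgumentException $e) {
    $rejectedZero = true;
}
```

Both the `catch` block and the guard clause are branches a happy-path test never executes, so they are typical of what a coverage report flags.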

The Test Trophy Alternative

Kent C. Dodds popularized the "testing trophy" as an alternative to the pyramid. From top to bottom, it looks like:

- Few E2E tests (top)

- Many integration tests (widest part)

- Some unit tests (narrower, below the integration layer)

- Static analysis (base)

The trophy acknowledges that in many modern applications, integration tests provide the best confidence-to-cost ratio. A test that hits a real database and tests a real query catches more real bugs than a unit test with a mocked repository.

Neither shape is wrong. The pyramid was designed when E2E tests ran in browsers on real networks. When you can run hundreds of database integration tests in 30 seconds with SQLite, the math changes.

Metrics to Track

Instead of aiming for a specific ratio, track these metrics:

Time to run: Your full test suite should run in under 10 minutes in CI. If it's longer, you have a problem regardless of the ratio.

Flakiness rate: Tests that sometimes pass and sometimes fail are worse than no tests. Track and fix flaky tests aggressively.

Confidence score: Would you deploy to production without running tests? If yes, your tests aren't providing value.
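Of these metrics, flakiness rate is the one teams most often leave unmeasured. One way to compute it, sketched below, is to call a test flaky when it has both passed and failed on the same commit, since the code did not change between those runs. That definition, the input shape, and the `flakinessReport` function name are all assumptions; adapt them to whatever your CI system actually exports.

```php
<?php
declare(strict_types=1);

/**
 * Flag tests that both passed and failed on the same commit.
 *
 * @param list<array{test: string, commit: string, passed: bool}> $runs
 * @return array{flaky: list<string>, rate: float}
 */
function flakinessReport(array $runs): array
{
    // $outcomes[test][commit] records which outcomes were seen.
    $outcomes = [];
    foreach ($runs as $run) {
        $key = $run['passed'] ? 'pass' : 'fail';
        $outcomes[$run['test']][$run['commit']][$key] = true;
    }

    $flaky = [];
    foreach ($outcomes as $test => $byCommit) {
        foreach ($byCommit as $seen) {
            if (isset($seen['pass'], $seen['fail'])) {
                $flaky[] = (string) $test;
                break; // one inconsistent commit is enough
            }
        }
    }

    $total = count($outcomes);

    return [
        'flaky' => $flaky,
        // Share of distinct tests that were flagged as flaky.
        'rate'  => $total === 0 ? 0.0 : count($flaky) / (float) $total,
    ];
}

// Hypothetical CI history: four distinct tests across two commits.
$runs = [
    ['test' => 'CheckoutTest::test_pays',      'commit' => 'abc', 'passed' => true],
    ['test' => 'CheckoutTest::test_pays',      'commit' => 'abc', 'passed' => false],
    ['test' => 'InvoiceTest::test_totals',     'commit' => 'abc', 'passed' => true],
    ['test' => 'InvoiceTest::test_totals',     'commit' => 'def', 'passed' => false],
    ['test' => 'ProjectTest::test_creates',    'commit' => 'abc', 'passed' => true],
    ['test' => 'SignupTest::test_sends_email', 'commit' => 'abc', 'passed' => true],
];
$report = flakinessReport($runs);
```

Note that `InvoiceTest::test_totals` is not flagged: it failed on a different commit, which looks like a real regression rather than flakiness.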

Building Your Pyramid Over Time

Don't try to build the perfect pyramid from day one. Start with what provides immediate value:

- Write unit tests for all new business logic

- Write integration tests for all new API endpoints

- Write E2E tests for critical user journeys before launch

- Add regression tests for every bug you fix

- Gradually fill coverage gaps in existing code

The pyramid builds itself if you're disciplined about testing new code. Retrofitting tests into untested legacy code is valuable but should be balanced against new feature development.

Practical Takeaways

- Unit test business logic, not framework glue

- Integration test service interactions and API endpoints

- E2E test only critical user journeys

- Track test suite speed and flakiness, not just coverage percentage

- Your architecture should inform your ratio, not a diagram from 2009
