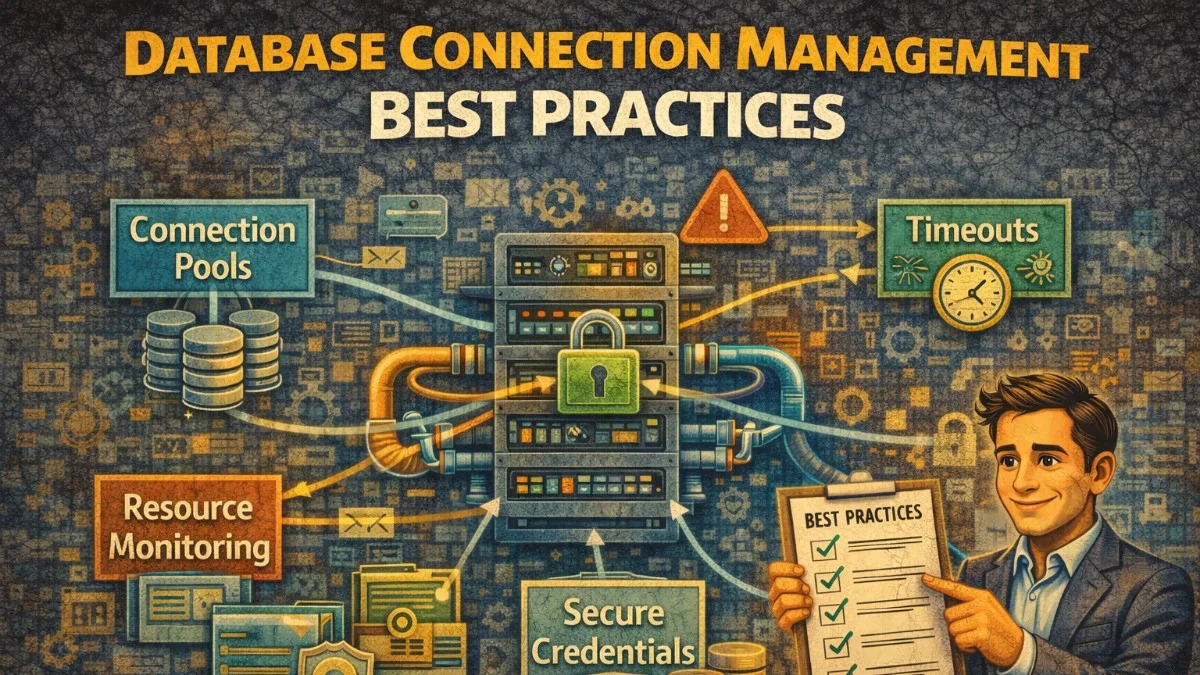

Database connection management affects application performance, reliability, and scalability. Connections are expensive resources; creating them involves network round-trips, authentication, and memory allocation on both client and server. Poor connection management leads to exhausted connection pools, slow responses, and database server overload.

Understanding connection lifecycle, pooling strategies, and common problems helps you build applications that use database resources efficiently. The right approach depends on your application architecture, database system, and traffic patterns.

Connection Lifecycle

A database connection involves multiple steps: TCP handshake, TLS negotiation (if encrypted), authentication, session initialization, and protocol negotiation. This process takes tens of milliseconds, making connection creation expensive for request-response applications.

// Naive approach: new connection per query

// DON'T DO THIS

class SlowRepository

{

public function findUser(int $id): ?User

{

// Creates new connection, runs query, closes connection

// Repeated for every query

$pdo = new PDO($dsn, $user, $password);

$stmt = $pdo->prepare('SELECT * FROM users WHERE id = ?');

$stmt->execute([$id]);

return $stmt->fetch();

// Connection closed when $pdo goes out of scope

}

}

Reusing connections amortizes creation cost across many queries. This is why connection pooling exists.

Connection Pooling

Connection pools maintain a set of open connections ready for use. Instead of creating connections, code borrows from the pool and returns after use. The pool manages connection lifecycle, including creation, validation, and cleanup.

Laravel's database manager handles pooling for web requests:

// Laravel reuses connections within a request

class UserController extends Controller

{

public function show(int $id): Response

{

// These queries share a connection

$user = DB::table('users')->find($id);

$orders = DB::table('orders')->where('user_id', $id)->get();

$stats = DB::table('stats')->where('user_id', $id)->first();

return response()->json(compact('user', 'orders', 'stats'));

}

// Connection returned to pool when request ends

}

For long-running processes like queue workers, connections need explicit management:

class LongRunningJob implements ShouldQueue

{

public function handle(): void

{

foreach ($this->items as $item) {

$this->processItem($item);

// Periodically reconnect to avoid stale connections

if ($this->shouldReconnect()) {

DB::reconnect();

}

}

}

private function shouldReconnect(): bool

{

// Reconnect every 100 iterations or if connection is stale

return $this->iterationCount % 100 === 0

|| !$this->isConnectionHealthy();

}

private function isConnectionHealthy(): bool

{

try {

DB::select('SELECT 1');

return true;

} catch (Exception $e) {

return false;

}

}

}

Pool Configuration

Pool size determines how many concurrent connections exist. Too few connections cause queuing; too many overwhelm the database.

// config/database.php

'mysql' => [

'driver' => 'mysql',

'host' => env('DB_HOST'),

'database' => env('DB_DATABASE'),

'username' => env('DB_USERNAME'),

'password' => env('DB_PASSWORD'),

// PDO connection options

'options' => [

PDO::ATTR_PERSISTENT => false, // Persistent connections

PDO::ATTR_TIMEOUT => 5, // Connection timeout

PDO::ATTR_ERRMODE => PDO::ERRMODE_EXCEPTION,

],

],

For connection poolers like PgBouncer or ProxySQL, configure pool sizes at the pooler:

# pgbouncer.ini

[databases]

myapp = host=localhost port=5432 dbname=myapp

[pgbouncer]

pool_mode = transaction

default_pool_size = 20

max_client_conn = 100

reserve_pool_size = 5

reserve_pool_timeout = 3

Pool modes affect behavior:

- Session pooling assigns connections for the entire client session

- Transaction pooling assigns connections per transaction

- Statement pooling assigns connections per statement

Transaction pooling provides the best connection efficiency but prevents session-level features like prepared statements or temporary tables.

Connection Limits

Databases have maximum connection limits. PostgreSQL defaults to 100. MySQL defaults to 151. Exceeding limits causes connection failures.

// Monitor connection usage

class ConnectionMonitor

{

public function getConnectionStats(): array

{

// PostgreSQL

$stats = DB::select("

SELECT

count(*) as total_connections,

count(*) FILTER (WHERE state = 'active') as active,

count(*) FILTER (WHERE state = 'idle') as idle,

max_conn.setting::int as max_connections

FROM pg_stat_activity

CROSS JOIN pg_settings max_conn

WHERE max_conn.name = 'max_connections'

GROUP BY max_conn.setting

");

return [

'used' => $stats[0]->total_connections,

'active' => $stats[0]->active,

'idle' => $stats[0]->idle,

'max' => $stats[0]->max_connections,

'utilization' => $stats[0]->total_connections / $stats[0]->max_connections,

];

}

}

Scale horizontally with read replicas rather than increasing connection limits. Each application instance consumes connections; multiply instances by pool size to estimate total connection needs.

// Route read queries to replica

class UserRepository

{

public function find(int $id): ?User

{

// Read from replica

return User::on('mysql_read')->find($id);

}

public function save(User $user): void

{

// Write to primary

$user->setConnection('mysql_write');

$user->save();

}

}

Connection Timeouts

Timeouts prevent indefinite waits for unavailable databases. Configure appropriate timeouts at multiple levels.

// PDO connection timeout

'options' => [

PDO::ATTR_TIMEOUT => 5, // Wait max 5 seconds to connect

],

// Query timeout (MySQL)

'options' => [

PDO::MYSQL_ATTR_READ_TIMEOUT => 30,

PDO::MYSQL_ATTR_WRITE_TIMEOUT => 30,

],

Handle timeout exceptions gracefully:

class ResilientRepository

{

public function findWithFallback(int $id): ?User

{

try {

return User::find($id);

} catch (QueryException $e) {

if ($this->isTimeoutException($e)) {

Log::warning('Database timeout, using cache fallback', [

'user_id' => $id,

]);

return Cache::get("user:$id");

}

throw $e;

}

}

private function isTimeoutException(QueryException $e): bool

{

// MySQL timeout error code

return str_contains($e->getMessage(), 'timed out')

|| $e->getCode() === 2006; // MySQL server has gone away

}

}

Connection Health

Connections can become stale due to network issues, server restarts, or idle timeouts. Validate connections before use.

class HealthyConnectionManager

{

public function ensureHealthyConnection(): void

{

try {

// Lightweight query to test connection

DB::select('SELECT 1');

} catch (Exception $e) {

Log::info('Reconnecting due to unhealthy connection');

DB::reconnect();

}

}

}

// In queue worker

class BaseJob implements ShouldQueue

{

public function handle(): void

{

// Ensure healthy connection before processing

app(HealthyConnectionManager::class)->ensureHealthyConnection();

$this->process();

}

}

Connection poolers can perform health checks automatically:

# pgbouncer health check

server_check_query = SELECT 1

server_check_delay = 30

Multiple Database Connections

Applications often connect to multiple databases. Configure each connection appropriately.

// config/database.php

'connections' => [

'primary' => [

'driver' => 'mysql',

'host' => env('DB_PRIMARY_HOST'),

'database' => env('DB_DATABASE'),

// ...

],

'analytics' => [

'driver' => 'mysql',

'host' => env('DB_ANALYTICS_HOST'),

'database' => env('DB_ANALYTICS_DATABASE'),

// Different pool settings for analytics

'options' => [

PDO::ATTR_TIMEOUT => 30, // Analytics queries take longer

],

],

'legacy' => [

'driver' => 'sqlsrv',

'host' => env('LEGACY_DB_HOST'),

// ...

],

],

Use connections explicitly:

// Query specific connection

$reports = DB::connection('analytics')

->table('daily_reports')

->get();

// Model with specific connection

class LegacyCustomer extends Model

{

protected $connection = 'legacy';

protected $table = 'tblCustomers';

}

Debugging Connection Issues

Common connection problems and their symptoms:

Connection exhaustion manifests as "too many connections" errors. Monitor pool utilization and reduce leaks.

// Find connection leaks

class ConnectionDebugger

{

public function logConnectionUsage(): void

{

// PostgreSQL: show connection sources

$connections = DB::select("

SELECT

application_name,

client_addr,

state,

count(*) as count

FROM pg_stat_activity

WHERE datname = current_database()

GROUP BY application_name, client_addr, state

ORDER BY count DESC

");

Log::debug('Connection usage', ['connections' => $connections]);

}

}

Slow connections may indicate network issues or overloaded servers. Log connection times:

DB::listen(function ($query) {

if ($query->time > 1000) {

Log::warning('Slow query detected', [

'sql' => $query->sql,

'time' => $query->time,

'connection' => $query->connectionName,

]);

}

});

Conclusion

Database connection management significantly impacts application performance and reliability. Reuse connections through pooling. Configure pool sizes based on workload. Use connection poolers for high-concurrency applications. Monitor connection usage and set appropriate timeouts.

Good connection management is invisible when working correctly. Poor management causes cascading failures under load. Invest time in proper configuration and monitoring; it pays dividends when traffic spikes or databases struggle.