Most distributed systems are designed to survive the failure of individual components: a server goes down, the load balancer routes around it. But there's a class of failures these designs can't protect against: the correlated failure that takes down everything at once.

A bad database migration. A memory leak triggered by a specific traffic pattern. A dependency returning malformed data that causes cascading crashes across your entire fleet. When these happen in a shared-everything architecture, all your users feel it simultaneously.

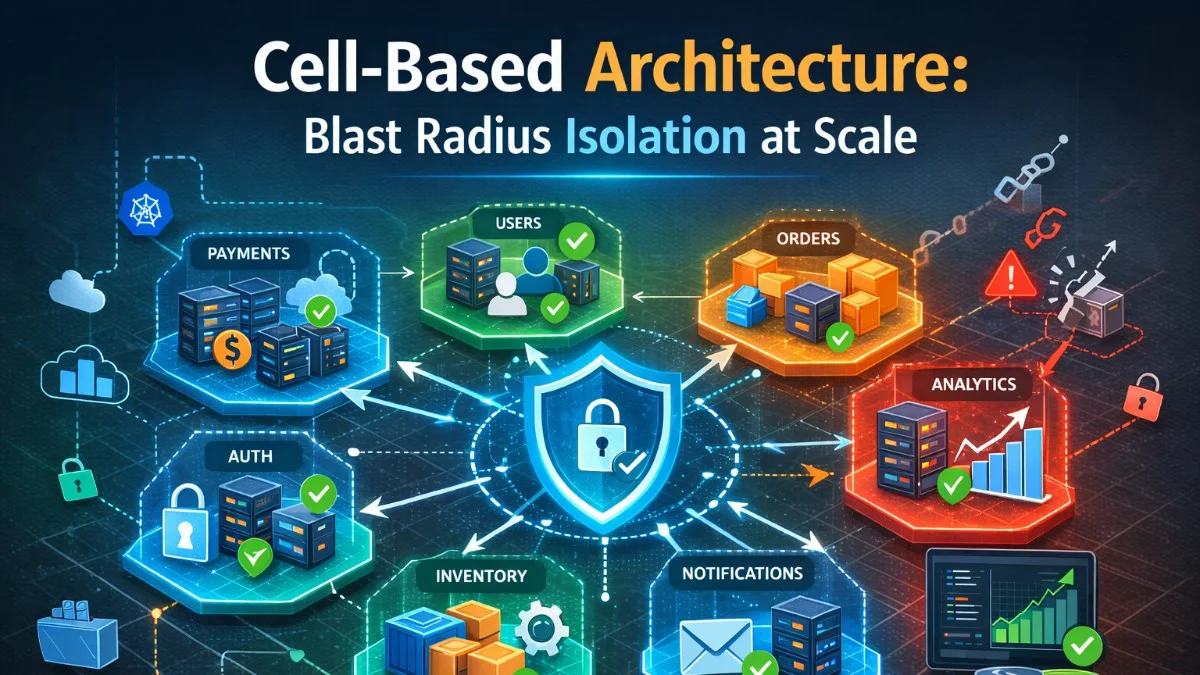

Cell-based architecture limits this. By partitioning your system into independent cells, each with its own compute, storage, and dependencies, you keep most failures confined to a single cell. The users in the other cells never notice.

What Is a Cell?

A cell is a fully independent, self-contained copy of your system's stack. It has its own:

- Application servers / containers

- Database (or database cluster)

- Cache layer

- Message queues

- Internal service dependencies

Cells do not share stateful resources. They may share read-only configuration or public data, but no state that would cause a failure in one cell to propagate to another.

```
                  ┌─────────────────────────────┐
                  │        Global Router        │
                  │ (assigns tenants to cells)  │
                  └──────────────┬──────────────┘
                                 │
           ┌─────────────────────┼─────────────────────┐
           ▼                     ▼                     ▼
    ┌─────────────┐       ┌─────────────┐       ┌─────────────┐
    │   Cell A    │       │   Cell B    │       │   Cell C    │
    │             │       │             │       │             │
    │ App servers │       │ App servers │       │ App servers │
    │ Database    │       │ Database    │       │ Database    │
    │ Cache       │       │ Cache       │       │ Cache       │
    │ Queue       │       │ Queue       │       │ Queue       │
    └─────────────┘       └─────────────┘       └─────────────┘
    Tenants: 1-1000     Tenants: 1001-2000    Tenants: 2001-3000
```

The global router is the only shared component. It knows which tenants belong to which cell and directs their requests accordingly. It is kept intentionally simple and stateless to minimize its own failure surface.

The Blast Radius Problem

Blast radius refers to how much of your system a single failure can impact. In a traditional horizontally scaled architecture, every component can potentially affect every user.

Consider a SaaS application with 10,000 tenants running on a shared database cluster:

- A slow query from one tenant degrades the database for all tenants

- A schema migration gone wrong takes down the database for everyone

- A bug triggered by one tenant's data pattern can cascade to cause OOM crashes across the fleet

- A Redis keyspace collision between tenants causes incorrect cache reads

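With cell-based architecture, each of these scenarios stays inside the cell where it happens: roughly 1/N of your tenants, where N is the number of cells and tenants are spread evenly across them. With 10,000 tenants in 10 cells, a cell-wide failure reaches about 1,000 tenants instead of all 10,000.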

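Amazon Web Services, Slack, DoorDash, and Shopify have all publicly discussed using cell-based architectures for exactly this reason. AWS documents the pattern in its Well-Architected guidance on reducing the scope of impact with cell-based architecture; shuffle sharding, which it also writes about, is a related but distinct isolation technique.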

Cell Assignment Strategies

Tenant-Based Assignment

The most common approach for SaaS: each tenant is permanently assigned to a cell at signup. All their traffic goes to that cell.

```python
class TenantNotFoundError(Exception):
    """Raised when a tenant has no cell assignment."""


class TenantRouter:
    def __init__(self, cell_registry):
        self.cell_registry = cell_registry

    def get_cell(self, tenant_id: str) -> "Cell":
        """Look up which cell this tenant belongs to."""
        assignment = self.cell_registry.get_assignment(tenant_id)
        if not assignment:
            raise TenantNotFoundError(f"No cell assignment for tenant {tenant_id}")
        return assignment.cell

    def assign_new_tenant(self, tenant_id: str) -> "Cell":
        """Assign a new tenant to the cell with the most spare capacity."""
        target_cell = self.select_cell_for_new_tenant()
        self.cell_registry.create_assignment(tenant_id, target_cell)
        return target_cell

    def select_cell_for_new_tenant(self) -> "Cell":
        """Select the cell with the fewest tenants (a simple proxy for load)."""
        cells = self.cell_registry.get_all_cells()
        return min(cells, key=lambda c: c.current_tenant_count)
```

The cell registry is typically stored in a database and heavily cached, so the router can serve nearly all cell lookups from memory instead of querying the database on every request.
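`TenantRouter` assumes a `cell_registry` object with `get_assignment`, `create_assignment`, and `get_all_cells`. A minimal in-memory stand-in makes that interface concrete and is handy in tests. The `Cell`, `Assignment`, and `InMemoryCellRegistry` names are hypothetical; this is a sketch, not a production registry, and it is not thread-safe.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Cell:
    cell_id: str
    endpoint: str
    current_tenant_count: int = 0


@dataclass
class Assignment:
    tenant_id: str
    cell: Cell


class InMemoryCellRegistry:
    """Dict-backed stand-in for the registry TenantRouter expects (not thread-safe)."""

    def __init__(self, cells: list[Cell]):
        self._cells = {cell.cell_id: cell for cell in cells}
        self._assignments: dict[str, Assignment] = {}

    def get_all_cells(self) -> list[Cell]:
        return list(self._cells.values())

    def get_assignment(self, tenant_id: str) -> Assignment | None:
        # None for unknown tenants; TenantRouter.get_cell turns that into an error.
        return self._assignments.get(tenant_id)

    def create_assignment(self, tenant_id: str, cell: Cell) -> None:
        # Assignments are permanent; moving a tenant should go through a migration.
        if tenant_id in self._assignments:
            current = self._assignments[tenant_id].cell.cell_id
            raise ValueError(f"tenant {tenant_id} is already assigned to {current}")
        self._assignments[tenant_id] = Assignment(tenant_id, cell)
        # Keep the count current so least-loaded placement sees the new tenant.
        cell.current_tenant_count += 1
```

Passing an `InMemoryCellRegistry` to `TenantRouter` exercises lookup and least-loaded placement without a database.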

Geographic Assignment

For data-residency requirements or latency optimization, assign tenants to cells based on geography:

```python
# get_region_for_country() and get_cell_load() are assumed to be defined elsewhere.
GEO_CELL_MAP = {
    'EU': ['cell-eu-west-1', 'cell-eu-west-2'],
    'US': ['cell-us-east-1', 'cell-us-west-1'],
    'APAC': ['cell-ap-southeast-1'],
}


def assign_by_geo(tenant_id: str, tenant_country: str) -> str:
    region = get_region_for_country(tenant_country)
    # Regions without their own cells fall back to the US cells
    available_cells = GEO_CELL_MAP.get(region, GEO_CELL_MAP['US'])
    # Pick the cell with the lowest load within the region
    return min(available_cells, key=get_cell_load)
```

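This also simplifies data-residency compliance such as GDPR: EU tenants' data stays in EU cells, provided every EU country maps to the `'EU'` region instead of falling through to the US default.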
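The snippet above relies on two helpers it doesn't define and silently falls back to US cells for unmapped regions. A self-contained variant, with a hypothetical `COUNTRY_TO_REGION` table and a load snapshot passed in as a dict, fails closed instead, so a residency-sensitive tenant is never placed outside its region by accident. It is a sketch of one design choice, not a complete country list.

```python
# Hypothetical country -> region table; a real one must cover every country you serve.
COUNTRY_TO_REGION = {
    'DE': 'EU', 'FR': 'EU', 'IE': 'EU',
    'US': 'US', 'CA': 'US',
    'SG': 'APAC', 'JP': 'APAC',
}

GEO_CELL_MAP = {
    'EU': ['cell-eu-west-1', 'cell-eu-west-2'],
    'US': ['cell-us-east-1', 'cell-us-west-1'],
    'APAC': ['cell-ap-southeast-1'],
}


class UnmappedCountryError(Exception):
    pass


def assign_by_geo(tenant_country: str, cell_load: dict[str, float]) -> str:
    """Pick the least-loaded cell in the tenant's region, failing closed."""
    region = COUNTRY_TO_REGION.get(tenant_country.upper())
    if region is None:
        # Refuse rather than guess: a silent default could break data residency.
        raise UnmappedCountryError(f"No region configured for country {tenant_country!r}")
    candidates = GEO_CELL_MAP[region]
    # Cells missing from the load snapshot are treated as fully loaded.
    return min(candidates, key=lambda cell: cell_load.get(cell, float('inf')))
```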

Hash-Based Assignment

For systems without an explicit tenant concept (e.g., user-level partitioning), a consistent hash ring assigns each user to a cell deterministically:

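```python
import bisect
import hashlib


def _hash(key: str) -> int:
    return int(hashlib.md5(key.encode()).hexdigest(), 16)


def get_cell_for_user(user_id: str, cells: list, vnodes: int = 100) -> str:
    """Deterministically assign user to a cell using a consistent hash ring."""
    # Each cell owns `vnodes` points on the ring. Rebuilt per call for brevity; cache it in practice.
    ring = sorted((_hash(f"{cell}#{i}"), cell) for cell in cells for i in range(vnodes))
    points = [point for point, _ in ring]
    # The user belongs to the first point clockwise from its hash, wrapping around.
    idx = bisect.bisect_right(points, _hash(user_id)) % len(ring)
    return ring[idx][1]
```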

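Consistent hashing means adding a new cell moves only about 1/(N+1) of users (those whose position on the ring now falls to one of the new cell's points), whereas a plain `hash % N` scheme would remap roughly N/(N+1) of them.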
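The difference is easy to measure. This sketch builds the ring once per cell set (the `HashRing` class and the user and cell names are made up for the experiment) and counts how many of 10,000 users change cells when a fifth cell joins four existing ones. With modulo hashing a user stays put only when `h % 4 == h % 5`, which holds for 4 of every 20 hash values, so about 80% should move; the ring should move close to 20%, all of them onto the new cell.

```python
import bisect
import hashlib


def _hash(key: str) -> int:
    return int(hashlib.md5(key.encode()).hexdigest(), 16)


class HashRing:
    """Consistent hash ring with `vnodes` virtual points per cell, built once."""

    def __init__(self, cells: list[str], vnodes: int = 100):
        ring = sorted((_hash(f"{cell}#{i}"), cell) for cell in cells for i in range(vnodes))
        self._points = [point for point, _ in ring]
        self._owners = [cell for _, cell in ring]

    def lookup(self, user_id: str) -> str:
        idx = bisect.bisect_right(self._points, _hash(user_id)) % len(self._points)
        return self._owners[idx]


def modulo_lookup(user_id: str, cells: list[str]) -> str:
    return cells[_hash(user_id) % len(cells)]


users = [f"user-{n}" for n in range(10_000)]
before = ["cell-a", "cell-b", "cell-c", "cell-d"]
after = before + ["cell-e"]

ring_before, ring_after = HashRing(before), HashRing(after)
ring_moved = [u for u in users if ring_before.lookup(u) != ring_after.lookup(u)]
mod_moved = [u for u in users if modulo_lookup(u, before) != modulo_lookup(u, after)]

print(f"modulo hashing moved {len(mod_moved) / len(users):.1%} of users")
print(f"hash ring moved {len(ring_moved) / len(users):.1%} of users")
# On the ring, every user that moved should now belong to the new cell.
print({ring_after.lookup(u) for u in ring_moved})
```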

The Cell Router

The router is the most critical component. It must be:

- Fast: Adds latency to every request. Target under 5ms.

- Highly available: If the router fails, all cells are unreachable.

- Stateless: It holds no authoritative state, only a cached copy of the routing table, so it can scale horizontally without coordination.

A practical implementation uses an in-memory cache of the routing table, refreshed from a database on a short TTL:

```php
<?php

use Illuminate\Http\Request;
use Illuminate\Support\Facades\DB;

// Cell, UnknownTenantException, and MissingTenantException are application classes.
class CellRouter
{
    // In-process cache of tenant_id => Cell. It only survives across requests
    // when the router instance is long-lived (e.g. a singleton in a persistent worker).
    private array $routingTable = [];
    private int $lastRefreshed = 0;
    private const CACHE_TTL_SECONDS = 30;

    public function route(Request $request): string
    {
        $tenantId = $this->extractTenantId($request);
        $cell = $this->lookupCell($tenantId);

        return $cell->getEndpoint();
    }

    private function lookupCell(string $tenantId): Cell
    {
        $this->refreshIfStale();

        if (!isset($this->routingTable[$tenantId])) {
            throw new UnknownTenantException($tenantId);
        }

        return $this->routingTable[$tenantId];
    }

    private function refreshIfStale(): void
    {
        if (time() - $this->lastRefreshed > self::CACHE_TTL_SECONDS) {
            $assignments = DB::table('cell_assignments')
                ->select(['tenant_id', 'cell_id', 'cell_endpoint'])
                ->get();

            // all() returns the Cell objects untouched; toArray() would convert
            // any Arrayable items into plain arrays.
            $this->routingTable = $assignments->keyBy('tenant_id')
                ->map(fn ($row) => new Cell($row->cell_id, $row->cell_endpoint))
                ->all();
            $this->lastRefreshed = time();
        }
    }

    private function extractTenantId(Request $request): string
    {
        // From subdomain: tenant1.app.example.com
        $host = $request->getHost();
        if (preg_match('/^([^.]+)\.app\./', $host, $matches)) {
            return $matches[1];
        }

        // From the authenticated user (JWT or session); nullsafe in case nobody is logged in
        return $request->user()?->tenant_id
            ?? throw new MissingTenantException();
    }
}
```
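With a 30-second TTL, the router above raises `UnknownTenantException` for a tenant assigned after the last refresh until the cache next reloads. One way to close that gap, sketched here in Python with a hypothetical `load_assignments` callable and an injectable clock, is to force a single reload on a cache miss. The forced reload is rate-limited so that requests carrying bogus tenant IDs cannot turn every miss into a database query.

```python
from __future__ import annotations

import time
from typing import Callable


class UnknownTenantError(Exception):
    pass


class CachedRoutingTable:
    """TTL-cached tenant -> endpoint map that reloads once on a cache miss."""

    def __init__(self, load_assignments: Callable[[], dict[str, str]],
                 ttl_seconds: float = 30.0,
                 clock: Callable[[], float] = time.monotonic,
                 miss_refresh_interval: float = 1.0):
        self._load = load_assignments  # hypothetical: reads all assignments from the registry DB
        self._ttl = ttl_seconds
        self._clock = clock
        self._miss_interval = miss_refresh_interval
        self._table: dict[str, str] = {}
        self._loaded_at: float | None = None

    def _refresh(self) -> None:
        self._table = dict(self._load())
        self._loaded_at = self._clock()

    def lookup(self, tenant_id: str) -> str:
        if self._loaded_at is None or self._clock() - self._loaded_at > self._ttl:
            self._refresh()
        endpoint = self._table.get(tenant_id)
        # A tenant created since the last refresh is not cached yet. Reload once,
        # but no more often than miss_refresh_interval.
        if endpoint is None and self._clock() - self._loaded_at >= self._miss_interval:
            self._refresh()
            endpoint = self._table.get(tenant_id)
        if endpoint is None:
            raise UnknownTenantError(tenant_id)
        return endpoint
```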

Tenant Migration Between Cells

You'll eventually need to move a tenant from one cell to another: rebalancing load, decommissioning a cell, or moving a tenant to a higher-tier cell after an upgrade.

The migration process:

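1. Snapshot the tenant's data in the source cell

2. Restore the snapshot into the destination cell

3. Start replicating ongoing writes from source to destination (change data capture, or dual-writes from the application)

4. Let replication continuously replay the backlog into the destination

5. Verify the destination has nearly caught up (lag down to a few seconds)

6. Cut over atomically: pause the tenant's writes, drain the remaining lag, and update the routing table to the destination

7. Stop replication and schedule deletion of the tenant's data from the source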

```python
class TenantMigrationService:
    # Helpers such as create_snapshot, start_replication, and pause_writes_for
    # are assumed to be implemented against your storage and routing layers.

    def migrate(self, tenant_id: str, source_cell: str, dest_cell: str):
        # Phase 1: Seed initial data
        snapshot = self.create_snapshot(tenant_id, source_cell)
        self.restore_snapshot(snapshot, dest_cell)

        # Phase 2: Live catch-up replication of writes made since the snapshot
        replication = self.start_replication(tenant_id, source_cell, dest_cell)
        self.wait_for_replication_lag_under(replication, threshold_seconds=5)

        # Phase 3: Atomic cut-over. With writes paused, drain the last of the lag,
        # then repoint the tenant. Routers that cache assignments must see the
        # change (or the source must reject this tenant's writes) before writes resume.
        with self.pause_writes_for(tenant_id, timeout_seconds=10):
            self.wait_for_replication_lag_under(replication, threshold_seconds=0)
            self.cell_registry.update_assignment(tenant_id, dest_cell)

        # Phase 4: Cleanup
        replication.stop()
        self.schedule_source_data_cleanup(tenant_id, source_cell)
        self.logger.info(f"Migrated {tenant_id} from {source_cell} to {dest_cell}")
```

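The pause-writes window is critical. Because nothing is written to the source after the final catch-up, no data is lost at cut-over, and the pause itself can be short (the code above allows up to 10 seconds). One subtlety: routers that cache the routing table, like the 30-second TTL cache in the router above, must pick up the new assignment before writes resume, so either invalidate those caches at cut-over or have the source cell reject writes for the migrated tenant.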
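To make the ordering concrete, here is a toy in-memory model of the migration phases. Every class and method name is hypothetical, real systems would use database snapshots and change data capture, and the model ignores router caching. It shows why draining the lag while writes are paused leaves the destination with every write, including one that was still unreplicated when the cut-over began.

```python
from __future__ import annotations

from contextlib import contextmanager


class ToyCell:
    """One cell's copy of a single tenant's data plus an ordered change log."""

    def __init__(self, name: str):
        self.name = name
        self.data: dict[str, str] = {}
        self.log: list[tuple[str, str]] = []

    def write(self, key: str, value: str) -> None:
        self.data[key] = value
        self.log.append((key, value))


class ToyMigration:
    """In-memory model of snapshot, catch-up replication, and paused cut-over."""

    def __init__(self, source: ToyCell, dest: ToyCell):
        self.source, self.dest = source, dest
        self.routed_to = source  # stands in for the routing-table entry
        self.replayed = 0        # number of source log entries applied to dest
        self.paused = False

    def write(self, key: str, value: str) -> None:
        # Application writes go wherever the routing table currently points.
        if self.paused:
            raise RuntimeError("writes are paused for cut-over")
        self.routed_to.write(key, value)

    def snapshot_and_restore(self) -> None:
        self.dest.data = dict(self.source.data)
        self.replayed = len(self.source.log)

    def replicate(self) -> None:
        # Replay source changes made since the snapshot or the previous replay.
        for key, value in self.source.log[self.replayed:]:
            self.dest.data[key] = value
        self.replayed = len(self.source.log)

    def lag(self) -> int:
        return len(self.source.log) - self.replayed

    @contextmanager
    def pause_writes(self):
        self.paused = True
        try:
            yield
        finally:
            self.paused = False

    def cut_over(self) -> None:
        with self.pause_writes():
            self.replicate()  # drain the remaining lag while nothing new arrives
            self.routed_to = self.dest
```

For example, a write made after the last catch-up (`seats = 12` below) is still picked up by the final drain inside `cut_over`, and writes after cut-over land in the destination.

```python
src, dst = ToyCell("cell-a"), ToyCell("cell-b")
m = ToyMigration(src, dst)
m.write("plan", "free")
m.snapshot_and_restore()
m.write("plan", "pro")
m.write("seats", "10")
m.replicate()
m.write("seats", "12")
m.cut_over()
m.write("owner", "ana")
print(dst.data)
```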

Cell Health and Failover

Each cell should expose a health endpoint the router can check:

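```php
// GET /cell/health
// checkDatabase(), checkCache(), and checkQueue() return 'ok' or an error description.
public function health(): JsonResponse
{
    $checks = [
        'database' => $this->checkDatabase(),
        'cache' => $this->checkCache(),
        'queue' => $this->checkQueue(),
    ];

    $healthy = collect($checks)->every(fn ($status) => $status === 'ok');

    return response()->json([
        'status' => $healthy ? 'healthy' : 'degraded',
        'cell_id' => config('cell.id'),
        'checks' => $checks,
        // Only count tenants when the database is reachable; otherwise the query
        // would throw and turn a 503 "degraded" response into a 500 error.
        'tenant_count' => $checks['database'] === 'ok' ? Tenant::count() : null,
    ], $healthy ? 200 : 503);
}
```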

If a cell becomes unhealthy, the router can stop assigning new tenants to it. For the tenants already living in that cell, you generally don't auto-failover (that would require data migration under fire); instead, alert on-call and, if needed, initiate a controlled tenant migration.
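A minimal Python sketch of the router side: a tracker counts consecutive failed health probes per cell and excludes a cell from new-tenant placement after a threshold, while existing tenants keep routing to their cell as before. `probe` is an assumed callable (for instance, one that calls `/cell/health` and returns `True` on a 200), and the threshold of 3 is an illustrative default, not a recommendation.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class CellHealthTracker:
    """Tracks consecutive failed health probes per cell (hypothetical helper)."""
    probe: Callable[[str], bool]
    failure_threshold: int = 3
    failures: dict[str, int] = field(default_factory=dict)

    def poll(self, cell_ids: list[str]) -> None:
        for cell_id in cell_ids:
            if self.probe(cell_id):
                self.failures[cell_id] = 0  # one success re-admits the cell
            else:
                self.failures[cell_id] = self.failures.get(cell_id, 0) + 1

    def is_accepting_new_tenants(self, cell_id: str) -> bool:
        return self.failures.get(cell_id, 0) < self.failure_threshold


def select_cell_for_new_tenant(tenant_counts: dict[str, int],
                               tracker: CellHealthTracker) -> str:
    # Only placement skips unhealthy cells; routing for existing tenants is unchanged.
    candidates = [c for c in tenant_counts if tracker.is_accepting_new_tenants(c)]
    if not candidates:
        raise RuntimeError("no healthy cell available for new tenants")
    return min(candidates, key=tenant_counts.__getitem__)
```

Requiring several consecutive failures keeps a single dropped probe from pulling a cell out of rotation.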

Operational Considerations

Deployment Strategy

Roll out new code in stages, starting with a canary cell, so that a bad deployment is caught while it affects only that cell's tenants:

```yaml
# Deployment pipeline stages
stages:
  - name: Deploy to Canary Cell
    cell: cell-canary
    pause_after: 30m
    rollback_if_error_rate_exceeds: 1%

  - name: Deploy to Cell A
    cell: cell-a
    pause_after: 15m

  - name: Deploy to Cells B-Z
    cells: [cell-b, cell-c, ...]
    parallel: true
```
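The gating logic behind a pipeline like this can be sketched as a loop over waves of cells. `deploy`, `error_rate`, and `rollback` are hypothetical hooks into your tooling, and the 1% threshold mirrors the canary stage above. In this sketch, a wave whose error rate crosses the threshold is rolled back and the rollout stops; earlier waves, which already passed their check, are left in place.

```python
from __future__ import annotations

from typing import Callable, Sequence


def rollout(waves: Sequence[Sequence[str]],
            deploy: Callable[[str], None],
            error_rate: Callable[[str], float],
            rollback: Callable[[str], None],
            max_error_rate: float = 0.01) -> list[str]:
    """Deploy wave by wave; return the cells left running the new version."""
    done: list[str] = []
    for wave in waves:
        for cell in wave:
            deploy(cell)
        # A real pipeline would wait out the bake time (pause_after) before checking.
        if any(error_rate(cell) > max_error_rate for cell in wave):
            for cell in wave:
                rollback(cell)  # contain the bad release to this wave
            return done
        done.extend(wave)
    return done
```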

Database Management

Each cell has its own database, which means N times as many databases to manage. Automation is non-negotiable:

- Schema migrations must be applied to all cells, typically via a migration orchestrator

- Backups must cover every cell individually

- Monitoring must aggregate across cells while preserving per-cell visibility
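A migration orchestrator can be as simple as a loop that applies one schema migration cell by cell and halts at the first failure, so a bad migration is confined to one cell just like a bad deploy. This is a sketch: `applied_migrations` and `run` are hypothetical hooks into whatever migration tool each cell uses. Skipping cells that already have the migration makes it safe to re-run the orchestrator after fixing a failure.

```python
from __future__ import annotations

from typing import Callable, Sequence


class MigrationHalted(Exception):
    def __init__(self, cell: str, applied: list[str], cause: Exception):
        super().__init__(f"migration failed on {cell}; newly applied to {applied}")
        self.cell, self.applied, self.cause = cell, applied, cause


def apply_migration_to_cells(cells: Sequence[str],
                             migration_id: str,
                             applied_migrations: Callable[[str], set[str]],
                             run: Callable[[str, str], None]) -> list[str]:
    """Apply one migration to each cell in order; return the cells it was applied to."""
    applied: list[str] = []
    for cell in cells:
        if migration_id in applied_migrations(cell):
            continue  # already done on a previous run
        try:
            run(cell, migration_id)
        except Exception as exc:
            # Stop here so the remaining cells never see the failing migration.
            raise MigrationHalted(cell, list(applied), exc) from exc
        applied.append(cell)
    return applied
```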

Cost Implications

Cell-based architecture is not free. Each cell carries fixed overhead: a database instance, minimum compute, cache allocation. For a system with 10 cells, you're paying roughly 10x the fixed costs of a single-cell architecture.
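To a first approximation, split cost into a fixed per-cell floor $F$ (database instance, minimum compute, cache allocation) and a usage-driven part $V$ that tracks traffic rather than cell count. With $N$ cells:

```latex
\begin{aligned}
C_{\text{shared}} &= F + V \\
C_{\text{cells}}  &= N F + V \\
C_{\text{cells}} - C_{\text{shared}} &= (N - 1)\, F
\end{aligned}
```

With 10 cells the premium is $9F$, which matters most when $F$ is large relative to $V$. This is a simplification: in practice $V$ can grow somewhat too, because each cell needs its own headroom.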

This cost is justified when:

- A multi-tenant outage has significant SLA penalty or customer trust cost

- Compliance requires data isolation between tenants

- You're deploying frequently and need contained blast radius for deployments

- Your largest customers demand dedicated capacity

When to Adopt Cell-Based Architecture

This is a significant architectural investment. Adopt it when:

- You have contractual SLAs with meaningful penalties for broad outages

- A single outage would affect thousands of tenants simultaneously

- You need regulatory data isolation between customer segments

- Your deployment frequency creates meaningful rollout risk

Don't adopt it when:

- You're pre-product-market-fit and optimizing for iteration speed

- Your tenant count is small enough that a shared architecture is easily managed

- Your failure modes are well-understood and not correlated

Start with two or three cells. The operational complexity increases with cell count, so grow the cell count as your scale and requirements justify it.

Summary

Cell-based architecture replaces "all users experience every outage" with "only the users in the affected cell experience this outage." The cost is operational complexity and fixed resource overhead. The benefit is a hard ceiling on the blast radius of any failure.

For systems with strong availability requirements and large tenant populations, it's one of the most effective reliability investments you can make.

Tackling complex architecture decisions? We help teams build systems that last. scopeforged.com