Chaos engineering deliberately introduces failures into systems to discover weaknesses before they cause outages. Rather than waiting for production incidents, you proactively test resilience under controlled conditions.

Why Chaos Engineering?

The Problem with Traditional Testing

Traditional testing validates expected behavior, but production systems fail in unexpected ways. Unit and integration tests prove components work correctly, but they cannot simulate the complex failure modes that occur in distributed systems.

The following list contrasts what traditional testing covers with the reality of production failures. You will notice that the most challenging issues are precisely those that standard tests cannot catch.

Unit tests: Functions work in isolation ✓

Integration tests: Components work together ✓

Load tests: System handles expected load ✓

Production reality:

- Network partitions between services

- Database failover during peak traffic

- Third-party API rate limiting

- Memory leaks under specific conditions

- Cascading failures from a single component

Traditional tests don't catch these.

The Chaos Engineering Approach

Chaos engineering treats production resilience as a hypothesis to be tested. You start with an assumption about how your system should behave under failure, then run controlled experiments to validate that assumption.

This is the scientific method applied to system resilience: form a hypothesis, run an experiment, and either validate the assumption or discover a weakness to fix.

Hypothesis: "Our system can handle the loss of one database replica"

Experiment:

1. Establish steady state (normal metrics)

2. Terminate one replica

3. Observe system behavior

4. Compare to hypothesis

Result: Validated or weakness discovered
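The four steps above can be sketched as a small harness. This is a minimal illustration in Python rather than a production tool: `run_experiment`, `read_error_rate`, `inject`, and `rollback` are hypothetical names, the replica counter is a stand-in for real infrastructure, and the 1% tolerance is an assumed threshold.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ExperimentOutcome:
    baseline: float
    observed: float
    hypothesis_held: bool


def run_experiment(
    read_error_rate: Callable[[], float],  # hypothetical metrics reader
    inject: Callable[[], None],            # hypothetical failure injection
    rollback: Callable[[], None],          # hypothetical cleanup
    max_error_rate: float = 0.01,          # assumed tolerance
) -> ExperimentOutcome:
    # 1. Establish steady state; refuse to start from an unhealthy baseline.
    baseline = read_error_rate()
    if baseline > max_error_rate:
        raise RuntimeError("system is not in steady state; do not inject failure")

    # 2. Inject the failure, 3. observe, and always roll back afterwards.
    inject()
    try:
        observed = read_error_rate()
    finally:
        rollback()

    # 4. Compare the observation to the hypothesis.
    return ExperimentOutcome(baseline, observed, observed <= max_error_rate)


# Simulated system: errors stay low as long as at least two replicas remain.
state = {"replicas": 3}
outcome = run_experiment(
    read_error_rate=lambda: 0.002 if state["replicas"] >= 2 else 0.2,
    inject=lambda: state.update(replicas=state["replicas"] - 1),
    rollback=lambda: state.update(replicas=3),
)
```

Refusing to start from an unhealthy baseline matters: if the system is already degraded, the experiment cannot tell you whether the injected failure caused the problem.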

Core Principles

1. Build Hypothesis Around Steady State

Before you can test resilience, you need to define what normal looks like. Steady state is described by business metrics and system health indicators that should remain within acceptable bounds during experiments.

This class demonstrates how to codify your steady state definition. Each metric has bounds that define acceptable values. If any metric falls outside bounds during an experiment, something has gone wrong.

// Define what "normal" looks like
class SteadyStateDefinition
{
    public function metrics(): array
    {
        return [
            'error_rate'   => ['max' => 0.01],  // at most 1%
            'latency_p99'  => ['max' => 500],   // at most 500 ms
            'throughput'   => ['min' => 1000],  // at least 1000 req/s
            'availability' => ['min' => 0.999], // at least 99.9%
        ];
    }

    public function isHealthy(array $currentMetrics): bool
    {
        foreach ($this->metrics() as $metric => $bounds) {
            // A missing measurement cannot be verified, so treat it as unhealthy.
            if (!isset($currentMetrics[$metric])) {
                return false;
            }
            if (isset($bounds['max']) && $currentMetrics[$metric] > $bounds['max']) {
                return false;
            }
            if (isset($bounds['min']) && $currentMetrics[$metric] < $bounds['min']) {
                return false;
            }
        }

        return true;
    }
}

These thresholds become your success criteria. If metrics stay within bounds during an experiment, your system is resilient to that failure mode.
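The bounds are only as useful as the measurements fed into them. As a rough sketch in Python, the error rate and p99 latency could be derived from raw request samples as shown here. The `summarize` helper and the sample format (latency in milliseconds plus a success flag) are assumptions for illustration; a real system would normally read these values from its monitoring stack.

```python
from typing import Dict, List, Tuple


def summarize(samples: List[Tuple[float, bool]]) -> Dict[str, float]:
    """Derive error rate and nearest-rank p99 latency from (latency_ms, ok) samples."""
    if not samples:
        raise ValueError("no samples: steady state cannot be evaluated")
    latencies = sorted(latency for latency, _ in samples)
    errors = sum(1 for _, ok in samples if not ok)
    # Nearest-rank percentile: the smallest value with at least 99% of the
    # samples at or below it. Integer arithmetic avoids float rounding.
    rank = (99 * len(latencies) + 99) // 100
    return {
        "error_rate": errors / len(samples),
        "latency_p99": latencies[rank - 1],
    }


# 200 requests: two slow outliers and one failed request.
samples = [(120.0, True)] * 197 + [(900.0, True), (950.0, True), (130.0, False)]
metrics = summarize(samples)
```

With these samples the two slow outliers fall in the top 1% and do not move the p99, which is why it helps to track more than one percentile.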

2. Vary Real-World Events

Chaos experiments should simulate actual failure scenarios you might encounter. The more realistic the failure, the more confidence you gain from surviving it.

Common failure scenarios:

- Server crashes

- Network latency/partition

- Disk full

- CPU exhaustion

- Dependency failures

- Clock skew

- DNS failures

3. Run Experiments in Production

Staging environments differ from production in ways that matter. Traffic patterns, data volumes, and infrastructure configurations all affect how failures propagate.

Staging environment:

- Different traffic patterns

- Different data volumes

- Different infrastructure

- Findings may not apply to production

Production experiments:

- Real traffic

- Real data

- Real infrastructure

- Real findings

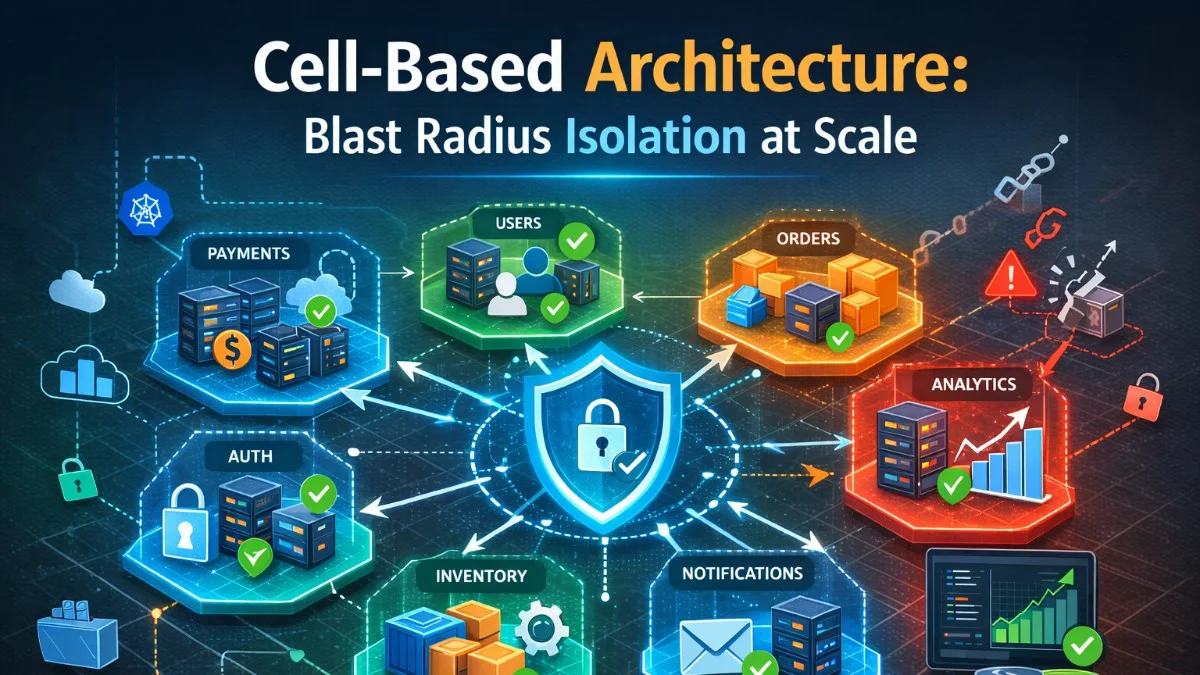

4. Minimize Blast Radius

Production experiments require careful controls. Start small and expand scope only after building confidence. Always have automated abort conditions and rollback procedures.

This configuration class shows how to limit experiment scope. You can target a small percentage of traffic, constrain to a single region, and define automatic abort conditions.

class ChaosExperiment
{
    public function configure(): array
    {
        return [
            // Limit scope
            'target_percentage' => 5,           // Only 5% of traffic
            'target_region'     => 'us-west-1', // Single region

            // Set boundaries
            'max_duration'        => 300,  // 5 minutes max
            'abort_on_error_rate' => 0.05, // Stop if errors > 5%

            // Define rollback
            'auto_rollback'    => true,
            'rollback_trigger' => 'steady_state_violation',
        ];
    }
}

The goal is to learn from controlled experiments, not to cause actual outages.
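One way to honor a target_percentage limit is to hash a stable request attribute into buckets, so the same users stay in or out of the experiment for its whole duration. The Python sketch below is illustrative only: `in_blast_radius` and the choice of a user ID as the key are assumptions, not part of any particular tool.

```python
import hashlib


def in_blast_radius(user_id: str, experiment: str, target_percentage: int) -> bool:
    """Return True if this user falls inside the experiment's traffic slice."""
    # Salting with the experiment name keeps different experiments from
    # always hitting the same users.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # stable bucket in 0..99
    return bucket < target_percentage


users = [f"user-{i}" for i in range(10_000)]
affected = [u for u in users if in_blast_radius(u, "replica-loss", 5)]
```

Because the decision is a pure function of the user and experiment name, every service instance reaches the same answer without shared state, and raising the percentage only adds users rather than reshuffling them.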

Tools

Chaos Monkey (Netflix)

Netflix pioneered chaos engineering with Chaos Monkey, which randomly terminates instances to ensure services can survive individual machine failures. This simple concept led to a suite of tools collectively called the Simian Army.

This illustrative configuration shows the kind of controls to apply when running Chaos Monkey. The format is a simplified sketch rather than Chaos Monkey's actual configuration, which is managed through Spinnaker. You can limit the kill probability and restrict execution to business hours when engineers are available to respond.

# chaos-monkey.yaml
chaos_monkey:
  enabled: true
  probability: 0.1  # 10% chance per day
  schedule:
    start: "09:00"
    end: "17:00"    # Business hours only
  groups:
    - name: production-api
      kill_probability: 0.05
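To make the semantics of such a schedule concrete, here is a small Python sketch of the decision it encodes. It is not Chaos Monkey's implementation: `pick_victim` is a hypothetical function, and treating business hours as weekdays only is an added assumption.

```python
import random
from datetime import datetime, time
from typing import List, Optional


def pick_victim(
    instances: List[str],
    now: datetime,
    kill_probability: float,
    rng: random.Random,
    start: time = time(9, 0),
    end: time = time(17, 0),
) -> Optional[str]:
    """Return an instance to terminate, or None if nothing should die this run."""
    is_weekday = now.weekday() < 5        # Monday=0 .. Friday=4 (assumption)
    in_hours = start <= now.time() < end
    if not (instances and is_weekday and in_hours):
        return None
    if rng.random() >= kill_probability:  # most runs terminate nothing
        return None
    return rng.choice(instances)
```

Passing the random generator in explicitly makes the decision reproducible in tests, which is useful when you need to explain after the fact why a given instance was chosen.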

Gremlin

Gremlin is a commercial platform that provides a wide range of chaos experiments with enterprise controls. It simplifies running experiments without building custom tooling.

These commands demonstrate Gremlin's attack capabilities. You can stress CPU, inject network latency, and target specific hosts to simulate realistic failure scenarios. Subcommand and flag names differ between agent versions, so check them against the Gremlin CLI documentation for your installation before running.

# Install Gremlin agent
curl https://gremlin.com/install.sh | bash

# Run CPU attack
gremlin attack cpu \
  --length 60 \
  --cores 2 \
  --percent 80

# Network latency attack
gremlin attack network latency \
  --length 60 \
  --ms 500 \
  --target-hosts "database.internal"

Litmus (Kubernetes-native)

For Kubernetes environments, Litmus provides chaos experiments as custom resources. Experiments are defined declaratively and can be integrated into GitOps workflows.

This ChaosEngine resource targets pods with a specific label and runs a pod-delete experiment. Litmus handles all the complexity of selecting pods, injecting chaos, and cleaning up.

apiVersion: litmuschaos.io/v1alpha1
kind: ChaosEngine
metadata:
  name: nginx-chaos
spec:
  appinfo:
    appns: 'default'
    applabel: 'app=nginx'
  chaosServiceAccount: litmus-admin
  experiments:
    - name: pod-delete
      spec:
        components:
          env:
            - name: TOTAL_CHAOS_DURATION
              value: '30'
            - name: CHAOS_INTERVAL
              value: '10'
            - name: FORCE
              value: 'false'

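The ChaosEngine targets pods labeled app=nginx in the default namespace. Chaos runs for 30 seconds in total (TOTAL_CHAOS_DURATION), with 10 seconds between successive pod deletions (CHAOS_INTERVAL). Setting FORCE to 'false' requests graceful rather than forced termination.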

Chaos Toolkit

Chaos Toolkit is an open-source framework for declarative chaos experiments. You define experiments in JSON or YAML, including steady state probes and rollback actions.

This experiment tests how your order service handles slow responses from the payment service. It defines steady state, injects latency, waits, and then rolls back the change.

# experiment.yaml
version: 1.0.0
title: "What happens when the payment service is slow?"
description: "Verify order service handles payment latency gracefully"

steady-state-hypothesis:
  title: "Order creation remains functional"
  probes:
    - type: probe
      name: order-creation-works
      tolerance: 200  # expected HTTP status code
      provider:
        type: http
        url: "http://order-service/health"

method:
  - type: action
    name: add-latency-to-payment-service
    provider:
      type: process
      path: "tc"
      arguments: "qdisc add dev eth0 root netem delay 500ms"
    pauses:
      after: 60

rollbacks:
  - type: action
    name: remove-latency
    provider:
      type: process
      path: "tc"
      arguments: "qdisc del dev eth0 root netem"

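Chaos Toolkit checks the steady-state hypothesis before running the method, injects the failure, waits, checks the hypothesis again, and finally runs the rollbacks. If the hypothesis no longer holds after the failure is injected, you have discovered a weakness. Note that a process action runs tc on the machine executing the experiment, so run it on a host that carries the payment service's traffic.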

Common Experiments

Instance Failure

Testing instance failure verifies that your service can survive the loss of individual machines. This is the most basic chaos experiment and should be a starting point for any organization.

This command randomly terminates one instance from your production environment. Run this during business hours when you can respond to any unexpected issues.

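# Terminate one random running instance
# (text output puts all IDs on one tab-separated line, so split it before shuffling;
# otherwise shuf would return every instance ID at once)
aws ec2 terminate-instances --instance-ids "$(
  aws ec2 describe-instances \
    --filters "Name=tag:Environment,Values=production" \
              "Name=instance-state-name,Values=running" \
    --query "Reservations[].Instances[].InstanceId" \
    --output text | tr '\t' '\n' | shuf -n 1
)"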

Network Partition

Network partitions test what happens when services cannot communicate. This reveals hidden dependencies and timeout configurations that might cause cascading failures.

These iptables rules block all traffic to and from a specific subnet. Remember to remove the rules after your experiment completes.

# Block traffic between services

iptables -A INPUT -s 10.0.1.0/24 -j DROP

iptables -A OUTPUT -d 10.0.1.0/24 -j DROP

# After experiment

iptables -D INPUT -s 10.0.1.0/24 -j DROP

iptables -D OUTPUT -d 10.0.1.0/24 -j DROP

Dependency Failure

Simulating dependency failures tests your fallback logic.

This middleware intercepts requests bound for external services and injects configurable latency and failure rates. The configuration is hard-coded here for brevity; loading it from a configuration store or feature-flag service would let you adjust experiments at runtime without redeploying.

class ChaosMiddleware
{
    private array $config = [
        'payment-service'   => ['failure_rate' => 0.1, 'latency_ms' => 0],
        'inventory-service' => ['failure_rate' => 0, 'latency_ms' => 500],
    ];

    public function handle(Request $request, Closure $next)
    {
        $service = $request->header('X-Target-Service');

        if (isset($this->config[$service])) {
            $config = $this->config[$service];

            // Inject latency
            if ($config['latency_ms'] > 0) {
                usleep($config['latency_ms'] * 1000);
            }

            // Inject failures
            if (mt_rand(1, 100) / 100 <= $config['failure_rate']) {
                return response()->json(['error' => 'Service unavailable'], 503);
            }
        }

        return $next($request);
    }
}

This approach lets you test failure handling without actually breaking dependencies.

Resource Exhaustion

Resource exhaustion tests verify that your system degrades gracefully under pressure. These experiments reveal whether your application handles resource constraints or crashes hard.

The stress-ng tool provides various ways to exhaust system resources. Use these commands to test how your application behaves under CPU, memory, and I/O pressure.

# CPU stress

stress-ng --cpu 4 --timeout 60s

# Memory stress

stress-ng --vm 2 --vm-bytes 1G --timeout 60s

# Disk I/O stress

stress-ng --io 4 --timeout 60s

# Fill disk

fallocate -l 10G /tmp/disk-fill-test

Building Resilience

Circuit Breakers

Chaos experiments often reveal the need for circuit breakers. When a dependency fails, circuit breakers prevent cascading failures by failing fast rather than waiting for timeouts.

This payment service wraps external calls in a circuit breaker. When the circuit opens due to failures, it executes the fallback instead of waiting for timeouts.

class PaymentService
{
    private CircuitBreaker $circuitBreaker;
    private PaymentGateway $paymentGateway; // assumed gateway client type

    public function processPayment(Order $order): PaymentResult
    {
        return $this->circuitBreaker->call(
            function () use ($order) {
                return $this->paymentGateway->charge($order);
            },
            function () use ($order) {
                // Fallback when circuit is open
                return $this->queueForLaterProcessing($order);
            }
        );
    }
}
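The CircuitBreaker used above is not shown, so the following Python sketch illustrates one minimal way such a class could behave. It is an assumption about the semantics (consecutive-failure counting, a fixed reset timeout, and a fallback on every failed call), not the API of any specific library.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Opens after consecutive failures; allows trial calls again after a timeout."""

    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock  # injectable so tests do not need to sleep
        self.failures = 0
        self.opened_at: Optional[float] = None

    def is_open(self) -> bool:
        if self.opened_at is None:
            return False
        # Once the timeout has elapsed, calls are allowed through again (half-open).
        return self.clock() - self.opened_at < self.reset_timeout

    def call(self, action: Callable[[], T], fallback: Callable[[], T]) -> T:
        if self.is_open():
            return fallback()  # fail fast without touching the dependency
        try:
            result = action()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open (or re-open) the circuit
            return fallback()
        self.failures = 0  # a success closes the circuit again
        self.opened_at = None
        return result
```

The key property to verify in a chaos experiment is the fail-fast path: while the circuit is open, the dependency should receive no traffic at all, which gives it room to recover.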

Graceful Degradation

Well-designed systems have multiple fallback layers. When the primary approach fails, they degrade to less optimal but still functional alternatives.

This service demonstrates cascading fallbacks. Each step handles a specific failure type and falls back to a less optimal but still functional data source. The fallbacks run as sequential try blocks because a catch block only handles exceptions thrown inside its own try: a CacheException raised from within a sibling catch block would escape uncaught.

class ProductCatalogService
{
    public function getProducts(): Collection
    {
        try {
            return $this->fetchFromPrimaryDatabase();
        } catch (DatabaseException $e) {
            Log::warning('Primary database unavailable, using cache');
        }

        try {
            return $this->fetchFromCache();
        } catch (CacheException $e) {
            Log::warning('Cache unavailable, returning static catalog');
        }

        return $this->getStaticCatalog();
    }
}

Each fallback provides progressively less current data, but the service remains available.
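The same pattern generalizes to any ordered list of data sources. The Python sketch below is one possible shape for it; `first_available` and the three stand-in source functions are hypothetical names invented for this example.

```python
import logging
from typing import Callable, List, Optional, Sequence, Tuple

logger = logging.getLogger("catalog")


def first_available(sources: Sequence[Tuple[str, Callable[[], List[str]]]]) -> List[str]:
    """Try each source in order and return the first result that does not raise."""
    last_error: Optional[Exception] = None
    for name, fetch in sources:
        try:
            return fetch()
        except Exception as exc:
            logger.warning("%s unavailable, falling back: %s", name, exc)
            last_error = exc
    raise RuntimeError("all catalog sources failed") from last_error


# Stand-in sources that simulate a database outage and a cache timeout.
def primary_database() -> List[str]:
    raise ConnectionError("primary database unreachable")


def cache() -> List[str]:
    raise TimeoutError("cache timed out")


def static_catalog() -> List[str]:
    return ["fallback-product"]


products = first_available([
    ("database", primary_database),
    ("cache", cache),
    ("static catalog", static_catalog),
])
```

Keeping the chain as data makes it easy for a chaos experiment to disable one source at a time and confirm that each fallback level actually works.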

Retry with Backoff

Transient failures often resolve themselves. Retry logic with exponential backoff handles these cases without overwhelming recovering services.

This client makes up to three attempts on transient errors, doubling the delay after each failed attempt and adding a small random offset.

class ResilientHttpClient
{
    public function request(string $method, string $url, array $options = []): Response
    {
        $attempts = 0;
        $maxAttempts = 3;
        $baseDelay = 100; // ms

        while (true) {
            try {
                return $this->client->request($method, $url, $options);
            } catch (TransientException $e) {
                $attempts++;

                if ($attempts >= $maxAttempts) {
                    throw $e;
                }

                // 100 ms, then 200 ms, plus up to 100 ms of random jitter
                $delay = $baseDelay * 2 ** ($attempts - 1) + mt_rand(0, 100);
                usleep($delay * 1000);
            }
        }
    }
}

The random jitter prevents thundering herd problems when many clients retry simultaneously.
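A small additive offset still leaves retries clustered around the same instants. A commonly used alternative, sometimes called full jitter, draws each delay uniformly between zero and the exponential ceiling. The Python sketch below shows that variant, which is different from the additive jitter in the client above; `backoff_delays` and the 5-second cap are illustrative assumptions.

```python
import random
from typing import List, Optional


def backoff_delays(retries: int, base_ms: int = 100, cap_ms: int = 5_000,
                   rng: Optional[random.Random] = None) -> List[float]:
    """Delay in ms before each retry: exponential growth, capped, with full jitter."""
    if rng is None:
        rng = random.Random()
    delays = []
    for attempt in range(retries):
        ceiling = min(cap_ms, base_ms * 2 ** attempt)  # 100, 200, 400, ... up to the cap
        delays.append(rng.uniform(0, ceiling))         # anywhere in [0, ceiling]
    return delays


# Seeded generator so the schedule is reproducible in tests.
delays = backoff_delays(retries=8, rng=random.Random(7))
```

The cap keeps a long outage from producing multi-minute waits, while the wide random range spreads clients out across the whole interval.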

Running Experiments Safely

Pre-Experiment Checklist

Thorough preparation prevents chaos experiments from becoming actual incidents. Review this checklist before every experiment.

## Experiment: Database Replica Failure

### Pre-Checks

- [ ] Steady state metrics captured

- [ ] Rollback procedure documented

- [ ] Team notified

- [ ] Monitoring dashboards open

- [ ] On-call engineer aware

- [ ] Customer support briefed (if needed)

### Abort Conditions

- Error rate > 5%

- Latency p99 > 2s

- Manual abort requested

### Rollback Procedure

1. Stop chaos injection

2. Verify auto-recovery

3. If not recovered in 2 min, restore replica manually

Automated Safety

Automated monitoring and abort logic prevent experiments from causing extended outages.

This experiment runner captures baseline metrics, polls metrics every five seconds while the experiment runs, and aborts automatically if any threshold is exceeded.

class ExperimentRunner
{
    public function run(Experiment $experiment): ExperimentResult
    {
        // Capture baseline
        $baseline = $this->captureMetrics();

        // Start experiment
        $experiment->start();

        // Monitor continuously
        while ($experiment->isRunning()) {
            $current = $this->captureMetrics();

            if ($this->exceedsThresholds($current, $experiment->thresholds())) {
                Log::warning('Aborting experiment: thresholds exceeded');
                $experiment->abort();
                break;
            }

            sleep(5);
        }

        // Capture final state
        $final = $this->captureMetrics();

        return new ExperimentResult($baseline, $final, $experiment->findings());
    }
}

This ensures experiments are self-limiting even if someone is not actively watching.
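The threshold check itself deserves care, because missing telemetry and overlong experiments are also reasons to stop. The Python sketch below shows one way to combine them. The `abort_reasons` function and the metric names are hypothetical; the limits mirror the abort conditions from the checklist above and the five-minute maximum from the blast radius configuration.

```python
from typing import Dict, List


def abort_reasons(current: Dict[str, float], limits: Dict[str, float],
                  elapsed_s: float, max_duration_s: float) -> List[str]:
    """Return every reason to stop the experiment; an empty list means keep going."""
    reasons = []
    for metric, limit in limits.items():
        value = current.get(metric)
        if value is None:
            # Missing telemetry is treated as unsafe: you cannot judge what you cannot see.
            reasons.append(f"{metric}: no data")
        elif value > limit:
            reasons.append(f"{metric}: {value} > {limit}")
    if elapsed_s >= max_duration_s:
        reasons.append(f"duration: {elapsed_s}s >= {max_duration_s}s")
    return reasons


limits = {"error_rate": 0.05, "latency_p99_ms": 2000}
healthy = abort_reasons({"error_rate": 0.01, "latency_p99_ms": 450}, limits, 120, 300)
failing = abort_reasons({"error_rate": 0.08, "latency_p99_ms": 450}, limits, 120, 300)
```

Returning the reasons rather than a bare boolean gives the retrospective a record of exactly which condition ended the experiment.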

GameDays

Structured Chaos Events

GameDays are planned events where teams practice responding to large-scale failures. They build muscle memory for incident response and reveal gaps in procedures.

This GameDay plan outlines a regional failover exercise. Multiple teams participate, with defined success criteria and a clear timeline for the exercise.

## GameDay: Regional Failover

### Scenario

AWS us-east-1 experiences a major outage

### Teams Involved

- Platform Engineering

- Application Development

- Customer Support

- Communications

### Timeline

09:00 - Briefing and setup

09:30 - Inject failure (block us-east-1 traffic)

09:45 - Observe automatic failover

10:00 - Verify customer-facing services

10:30 - Begin recovery

11:00 - Full restoration

11:30 - Retrospective

### Success Criteria

- Failover completes in < 5 minutes

- No data loss

- < 1% error rate during failover

Metrics to Track

Comprehensive metrics capture lets you understand the full impact of experiments. Track not just technical indicators but also business impact.

This metrics class captures technical indicators (availability, latency, and recovery time) alongside business indicators such as affected orders and revenue impact.

class ChaosMetrics
{
    public function capture(): array
    {
        return [
            // Availability
            'uptime_percentage'   => $this->calculateUptime(),
            'successful_requests' => $this->getSuccessCount(),
            'failed_requests'     => $this->getFailureCount(),

            // Latency
            'latency_p50' => $this->getPercentile(50),
            'latency_p95' => $this->getPercentile(95),
            'latency_p99' => $this->getPercentile(99),

            // Recovery
            'time_to_detect'  => $this->getDetectionTime(),
            'time_to_recover' => $this->getRecoveryTime(),

            // Business Impact
            'orders_affected' => $this->getAffectedOrders(),
            'revenue_impact'  => $this->calculateRevenueImpact(),
        ];
    }
}

Business metrics help communicate the value of resilience investments to stakeholders.
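The recovery metrics depend on how you define the interval. As a sketch, assuming both are measured from the moment of fault injection, they can be computed from three timestamps. The `recovery_metrics` function and the example timeline are hypothetical.

```python
from datetime import datetime
from typing import Dict


def recovery_metrics(injected_at: datetime, alerted_at: datetime,
                     recovered_at: datetime) -> Dict[str, float]:
    """Seconds from fault injection to first alert, and from injection to recovery."""
    if not injected_at <= alerted_at <= recovered_at:
        raise ValueError("timestamps must be ordered: injected <= alerted <= recovered")
    return {
        "time_to_detect": (alerted_at - injected_at).total_seconds(),
        "time_to_recover": (recovered_at - injected_at).total_seconds(),
    }


# Example timeline: alert fires 75 seconds after injection, recovery after 4 minutes.
timeline = recovery_metrics(
    injected_at=datetime(2024, 1, 3, 9, 30, 0),
    alerted_at=datetime(2024, 1, 3, 9, 31, 15),
    recovered_at=datetime(2024, 1, 3, 9, 34, 0),
)
```

Whichever definition you choose, keep it fixed across experiments so that the numbers stay comparable over time.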

Conclusion

Chaos engineering transforms system reliability from hope to confidence. Start small with non-critical systems, build automated safety controls, and gradually increase experiment scope. The goal isn't to cause outages but to discover weaknesses before they cause real incidents. Document findings, fix issues, and run experiments again to verify improvements. Regular chaos experiments build systems that handle failure gracefully.