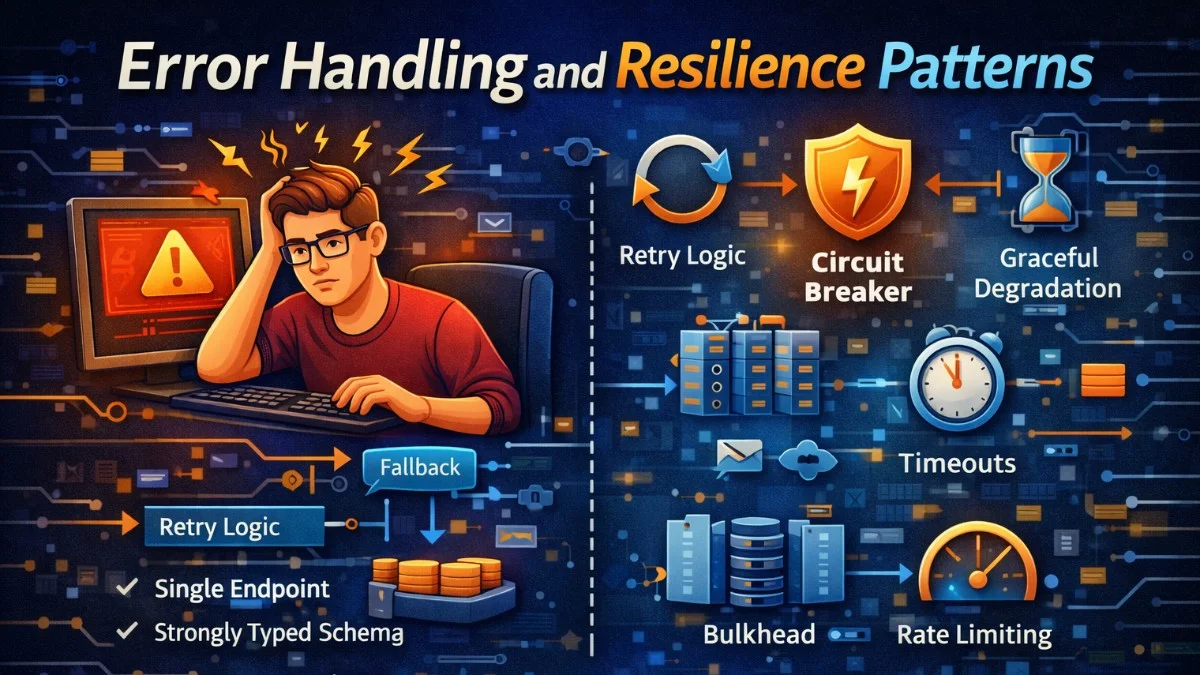

Failures are inevitable in distributed systems. Networks partition, services become unavailable, and databases hit capacity limits. Building resilient applications means expecting failures and handling them gracefully. This guide covers patterns for building applications that degrade gracefully instead of failing catastrophically.

The Cost of Poor Error Handling

When errors aren't handled well:

- One failing service brings down the entire system

- Retry storms overwhelm recovering services

- Users see cryptic error messages

- Debugging becomes nearly impossible

Defensive Programming

Fail Fast

Detect problems early and fail clearly.

The fail-fast principle means validating inputs at the boundary of your function before doing any work. This approach surfaces bugs quickly and produces clear error messages that pinpoint exactly what went wrong. By checking preconditions immediately, you avoid wasting resources on operations that are destined to fail.

public function processOrder(array $data): Order

{

// Validate early

if (!isset($data['customer_id'])) {

throw new InvalidArgumentException('Customer ID is required');

}

$customer = Customer::find($data['customer_id']);

if (!$customer) {

throw new CustomerNotFoundException($data['customer_id']);

}

// Proceed with valid data

return $this->createOrder($customer, $data);

}

Notice how each validation step has a specific exception type. This makes it easy to handle different failure modes appropriately in calling code, whether that means showing a user-friendly message or triggering an alert.
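As a rough illustration of what "handling different failure modes in calling code" can look like, the sketch below maps each exception type to its own response. It is plain PHP rather than Laravel code: `handleOrderRequest`, the status codes, and the stand-in `CustomerNotFoundException` class are assumptions made for the example, not part of the original `processOrder()` method.

```php
<?php
declare(strict_types=1);

// Hypothetical stand-in for the CustomerNotFoundException used above.
final class CustomerNotFoundException extends RuntimeException
{
    public function __construct(int $customerId)
    {
        parent::__construct("Customer {$customerId} not found");
    }
}

/**
 * Maps each failure mode to an HTTP-style status and a user-facing message.
 *
 * @return array{status: int, message: string}
 */
function handleOrderRequest(callable $processOrder, array $data): array
{
    try {
        $processOrder($data);
        return ['status' => 201, 'message' => 'Order created'];
    } catch (InvalidArgumentException $e) {
        // Caller error: the validation message is safe to show
        return ['status' => 422, 'message' => $e->getMessage()];
    } catch (CustomerNotFoundException $e) {
        return ['status' => 404, 'message' => 'Customer not found'];
    }
}

// Simplified processor that mirrors the validation order of processOrder()
$processOrder = function (array $data): void {
    if (!isset($data['customer_id'])) {
        throw new InvalidArgumentException('Customer ID is required');
    }
    if ($data['customer_id'] !== 1) {
        throw new CustomerNotFoundException($data['customer_id']);
    }
};
```

Because each exception type is distinct, the caller never has to inspect message strings to decide how to respond.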

Null Object Pattern

Return usable defaults instead of null.

Instead of scattering null checks throughout your codebase, the Null Object pattern provides a default implementation that behaves sensibly. This eliminates entire categories of null reference errors and makes your code more readable by removing defensive conditionals.

// Instead of

$settings = $user->settings; // Might be null

$theme = $settings?->theme ?? 'light'; // Defensive checks everywhere

// Use a null object

class NullSettings extends Settings

{

public string $theme = 'light';

public string $language = 'en';

public bool $notifications = true;

}

// In User model

public function getSettingsAttribute(): Settings

{

// Read the relation directly; $this->settings would call this accessor again

return $this->getRelationValue('settings') ?? new NullSettings();

}

// Clean usage

$theme = $user->settings->theme; // Always works

The NullSettings class provides sensible defaults for all properties. Any code accessing user settings can trust that the object exists and has valid values, simplifying every consumer of this API.

Guard Clauses

Exit early from invalid states.

Guard clauses flatten your code by handling edge cases first and returning early. This eliminates deep nesting and makes the main logic path obvious. When you read a function with guard clauses, you can quickly skip past the precondition checks to find the core logic.

public function sendNotification(User $user, string $message): void

{

if (!$user->notifications_enabled) {

return;

}

if (!$user->email_verified) {

Log::info('Skipping notification for unverified user', ['user_id' => $user->id]);

return;

}

if (empty($message)) {

throw new InvalidArgumentException('Message cannot be empty');

}

// Main logic here, no nesting

$this->notificationService->send($user, $message);

}

Each guard clause handles one specific condition. The actual notification logic at the bottom is clear and uncluttered because all preconditions have already been verified. You can read straight down to understand what the function does.

Circuit Breaker Pattern

Prevent cascading failures by stopping requests to failing services.

Implementation

The circuit breaker tracks failures and opens the circuit when a threshold is exceeded, preventing further requests to a failing service. After a recovery timeout, it allows a single test request through to check if the service has recovered. This pattern is essential for maintaining stability in microservices architectures where one failing dependency could bring down your entire system.

class CircuitBreaker

{

private const STATE_CLOSED = 'closed';

private const STATE_OPEN = 'open';

private const STATE_HALF_OPEN = 'half_open';

public function __construct(

private string $service,

private int $failureThreshold = 5,

private int $recoveryTimeout = 30

) {}

public function execute(callable $operation): mixed

{

$state = $this->getState();

if ($state === self::STATE_OPEN) {

if ($this->shouldAttemptRecovery()) {

$this->setState(self::STATE_HALF_OPEN);

} else {

throw new CircuitOpenException("Circuit is open for {$this->service}");

}

}

try {

$result = $operation();

$this->recordSuccess();

return $result;

} catch (Exception $e) {

$this->recordFailure();

throw $e;

}

}

private function recordFailure(): void

{

$failures = Cache::increment("circuit:{$this->service}:failures");

if ($failures >= $this->failureThreshold) {

$this->setState(self::STATE_OPEN);

Cache::put("circuit:{$this->service}:opened_at", now()->timestamp, 3600);

}

}

private function recordSuccess(): void

{

if ($this->getState() === self::STATE_HALF_OPEN) {

$this->setState(self::STATE_CLOSED);

}

Cache::forget("circuit:{$this->service}:failures");

}

private function shouldAttemptRecovery(): bool

{

$openedAt = Cache::get("circuit:{$this->service}:opened_at", 0);

return (now()->timestamp - $openedAt) > $this->recoveryTimeout;

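}

private function getState(): string

{

// A missing key means the circuit has never opened

return Cache::get("circuit:{$this->service}:state", self::STATE_CLOSED);

}

private function setState(string $state): void

{

Cache::forever("circuit:{$this->service}:state", $state);

}

}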

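The three states work together: CLOSED allows all requests, OPEN blocks all requests, and HALF_OPEN lets a trial request through to determine whether the circuit should close again. Note that this simple version does not stop several concurrent requests from acting as trials at the same time. Storing the state in a shared cache store such as Redis makes the breaker work across multiple application instances.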
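To see the state transitions without a cache or a framework, here is a minimal in-memory sketch. It is not the cache-backed class above: `InMemoryCircuitBreaker`, its `state()` method, and the injected clock closure are hypothetical names, introduced so the behavior can be exercised deterministically.

```php
<?php
declare(strict_types=1);

final class InMemoryCircuitBreaker
{
    private string $state = 'closed';
    private int $failures = 0;
    private int $openedAt = 0;

    /** @param Closure $clock returns the current Unix timestamp as an int */
    public function __construct(
        private int $failureThreshold,
        private int $recoveryTimeout,
        private Closure $clock,
    ) {}

    public function state(): string
    {
        return $this->state;
    }

    public function execute(callable $operation): mixed
    {
        if ($this->state === 'open') {
            if (($this->clock)() - $this->openedAt <= $this->recoveryTimeout) {
                throw new RuntimeException('Circuit is open');
            }
            $this->state = 'half_open'; // timeout elapsed: allow a trial call
        }

        try {
            $result = $operation();
        } catch (Throwable $e) {
            $this->failures++;
            // A failed trial call, or too many failures, (re)opens the circuit
            if ($this->state === 'half_open' || $this->failures >= $this->failureThreshold) {
                $this->state = 'open';
                $this->openedAt = ($this->clock)();
            }
            throw $e;
        }

        // Any success closes the circuit and resets the failure count
        $this->state = 'closed';
        $this->failures = 0;
        return $result;
    }
}

// Usage: a controllable clock makes the recovery timeout easy to simulate
$now = 1000;
$breaker = new InMemoryCircuitBreaker(2, 30, function () use (&$now): int {
    return $now;
});
```

Advancing `$now` past the recovery timeout is enough to move the breaker from open to half-open on the next call, which is why the clock is injected rather than read from `time()`.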

Usage

Wrap your external service calls with the circuit breaker. When the circuit opens, you can immediately fall back to alternative behavior instead of waiting for timeouts. This keeps your application responsive even when dependencies are struggling.

$circuit = new CircuitBreaker('payment-gateway');

try {

$result = $circuit->execute(function () use ($payment) {

return $this->paymentGateway->charge($payment);

});

} catch (CircuitOpenException $e) {

// Fallback behavior

return $this->queuePaymentForLater($payment);

}

Retry Strategies

Exponential Backoff

When transient failures occur, retrying immediately often fails again. Exponential backoff spaces out retries with increasing delays, giving the failing service time to recover. This is particularly important for network issues, rate limiting, and temporary overload conditions.

class RetryHandler

{

public function execute(

callable $operation,

int $maxAttempts = 3,

int $baseDelay = 100 // milliseconds

): mixed {

$attempts = 0;

while (true) {

try {

return $operation();

} catch (RetryableException $e) {

$attempts++;

if ($attempts >= $maxAttempts) {

throw $e;

}

// Exponential backoff with jitter

$delay = $baseDelay * pow(2, $attempts - 1);

$jitter = random_int(0, intdiv($delay, 2)); // integer bounds, up to 50% extra delay

usleep(($delay + $jitter) * 1000);

}

}

}

}

The jitter (random delay component) prevents thundering herd problems where many clients retry simultaneously after a failure. Without jitter, coordinated retries can overwhelm a recovering service.
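The delay schedule is easier to reason about when it is pulled out into a pure function. The sketch below follows the same formula as `RetryHandler`; the function name `backoffDelayMs`, the `$jitterRatio` parameter, and the `$maxDelayMs` cap are assumptions added for illustration (the original handler has no cap).

```php
<?php
declare(strict_types=1);

/**
 * Delay in milliseconds before retry number $attempt (1-based).
 * A $jitterRatio of 0.5 adds up to 50% extra random delay, as in RetryHandler.
 * $maxDelayMs caps the exponential part so long retry chains stay bounded.
 */
function backoffDelayMs(
    int $attempt,
    int $baseDelayMs = 100,
    float $jitterRatio = 0.5,
    int $maxDelayMs = 10_000
): int {
    $delay = min($maxDelayMs, $baseDelayMs * (2 ** ($attempt - 1)));
    $jitter = random_int(0, (int) floor($delay * $jitterRatio));

    return $delay + $jitter;
}
```

With jitter disabled (`$jitterRatio = 0.0`) and a base of 100 ms, the first three retries wait 100, 200, and 400 ms. With the default ratio, the third retry waits somewhere between 400 and 600 ms, so clients that failed together do not retry together.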

With Circuit Breaker

Combining retry logic with circuit breakers covers both cases: retries handle transient failures, while the circuit breaker prevents cascading failures during extended outages. Because the retry handler runs inside the circuit breaker, the breaker records a single failure only after all retry attempts are exhausted, and it stops new attempts entirely once a service is consistently failing.

public function callExternalApi(array $data): array

{

$circuit = new CircuitBreaker('external-api');

$retry = new RetryHandler();

return $circuit->execute(function () use ($data, $retry) {

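return $retry->execute(function () use ($data) {

try {

return Http::timeout(5)->post('https://api.external.com', $data)->throw()->json();

} catch (ConnectionException $e) {

// RetryHandler only retries RetryableException, so wrap transport failures

// (assumes RetryableException uses the standard Exception constructor)

throw new RetryableException($e->getMessage(), 0, $e);

}

});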

});

}

Timeout Handling

Set Appropriate Timeouts

Always set explicit timeouts for external calls. Without timeouts, a hanging service can exhaust your application's resources as requests pile up waiting indefinitely. Choose timeout values based on expected response times plus a reasonable buffer for network variability.

// HTTP requests

$response = Http::timeout(5) // Total time allowed for the response, in seconds

->connectTimeout(2) // Time allowed to establish the connection, in seconds

->post($url, $data);

// Database queries

DB::statement('SET statement_timeout = 5000'); // PostgreSQL: 5 seconds

// Queue jobs

class ProcessOrder implements ShouldQueue

{

public $timeout = 120; // 2 minutes max

}

Timeout with Fallback

When a timeout occurs, gracefully degrade to cached or default data rather than failing the entire request. Your users would rather see slightly stale recommendations than an error page.

public function getRecommendations(User $user): Collection

{

try {

return Cache::remember("recs:{$user->id}", 300, function () use ($user) {

return collect(

Http::timeout(2)

->get("http://recommendation-service/users/{$user->id}")

->throw() // Turn 4xx/5xx responses into exceptions so they are not cached

->json()

);

});

} catch (ConnectionException | RequestException $e) {

// Timeout or HTTP error on a cache miss: fall back to defaults

return $this->getDefaultRecommendations();

}

}

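The cache layer serves double duty here: it improves performance during normal operation, and it keeps serving cached results for up to five minutes (the 300-second TTL) if the service becomes unavailable. Once an entry expires, the catch block falls back to default recommendations. Consider logging these fallback events to track service health.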
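If slightly stale data is preferable to defaults, one option is to keep the last successful result around and serve it when a refresh fails. The following is a framework-free sketch of that idea; `StaleFallbackCache` and its `fetch()` method are hypothetical, and in a Laravel application the same effect could be approximated by writing a second cache key with a much longer TTL.

```php
<?php
declare(strict_types=1);

/**
 * Returns fresh data when the loader succeeds, the last known good value when
 * it fails, and $default when nothing has ever been loaded for the key.
 */
final class StaleFallbackCache
{
    /** @var array<string, mixed> last successful value per key */
    private array $lastGood = [];

    public function fetch(string $key, callable $loader, mixed $default): mixed
    {
        try {
            $value = $loader();
            $this->lastGood[$key] = $value; // remember for future outages

            return $value;
        } catch (Throwable $e) {
            return array_key_exists($key, $this->lastGood)
                ? $this->lastGood[$key]
                : $default;
        }
    }
}
```

This sketch deliberately omits the short-lived "fresh" tier shown earlier; it only demonstrates the stale tier that takes over when the upstream call throws.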

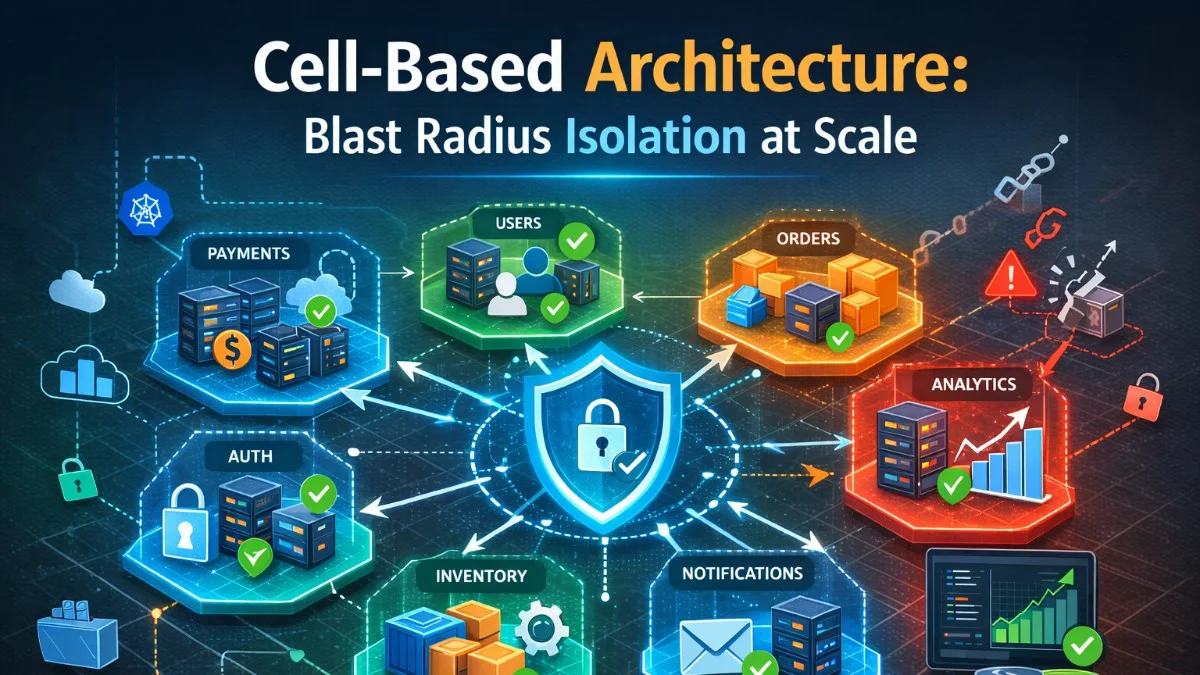

Bulkhead Pattern

Isolate failures to prevent them from spreading.

Resource Pools

Separate database connection pools prevent a runaway query in one part of your application from exhausting connections needed by other parts. This isolation ensures that a problem with report generation, for example, won't prevent web requests from being served.

// Separate connection pools for different workloads

// Note: 'pool' is an illustrative key; pooling depends on your runtime or an external pooler

// config/database.php

'connections' => [

'mysql_web' => [

// For web requests

'pool' => ['min' => 5, 'max' => 20],

],

'mysql_jobs' => [

// For background jobs

'pool' => ['min' => 2, 'max' => 10],

],

'mysql_reports' => [

// For heavy reports

'pool' => ['min' => 1, 'max' => 5],

],

],

Separate Queues

Use dedicated queues for different job priorities. This ensures critical operations like payment processing aren't blocked by a backlog of marketing emails. Each queue can have its own workers and scaling configuration.

// Critical operations

PaymentJob::dispatch($order)->onQueue('payments');

// Non-critical operations

SendMarketingEmailJob::dispatch($user)->onQueue('marketing');

// Heavy operations

GenerateReportJob::dispatch($report)->onQueue('reports');

Graceful Degradation

Feature Fallbacks

Design your application to continue functioning even when auxiliary services are unavailable. Core functionality should never depend on optional features. Think about what the minimum viable experience looks like and build toward that baseline.

public function getProductDetails(int $productId): array

{

$product = Product::findOrFail($productId);

// Try to get reviews from review service

try {

$reviews = $this->reviewService->getForProduct($productId);

} catch (Exception $e) {

Log::warning('Review service unavailable', ['product_id' => $productId]);

$reviews = []; // Show product without reviews

}

// Try to get personalized recommendations

try {

$recommendations = $this->recommendationService->getRelated($productId);

} catch (Exception $e) {

Log::warning('Recommendation service unavailable');

$recommendations = $this->getDefaultRecommendations();

}

return compact('product', 'reviews', 'recommendations');

}

Each external service call is wrapped independently. A failure in the recommendation service doesn't prevent reviews from loading, and neither prevents the core product data from displaying. Users get the best available experience.
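When several optional calls follow the same try/log/default shape, the repetition can be folded into a small helper. This is only a sketch: `withFallback` and its `$onFailure` callback are hypothetical names, and the callback stands in for the `Log::warning` calls used above.

```php
<?php
declare(strict_types=1);

/**
 * Runs $operation and returns its result. On any exception, reports it
 * through $onFailure (if given) and returns $fallback instead.
 */
function withFallback(callable $operation, mixed $fallback, ?callable $onFailure = null): mixed
{
    try {
        return $operation();
    } catch (Exception $e) {
        if ($onFailure !== null) {
            $onFailure($e);
        }

        return $fallback;
    }
}
```

Each optional dependency still fails independently, because every call gets its own `withFallback` invocation and its own default value.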

Read-Only Mode

During database issues or maintenance, serve read-only content rather than showing error pages. This middleware pattern lets you gracefully handle write restrictions while keeping your application available.

class ReadOnlyMiddleware

{

public function handle(Request $request, Closure $next): Response

{

// isMethodSafe() also lets HEAD and OPTIONS through, not only GET

if (config('app.read_only') && !$request->isMethodSafe()) {

return response()->json([

'error' => 'System is in read-only mode for maintenance',

'retry_after' => config('app.read_only_until'),

], 503);

}

return $next($request);

}

}

Error Responses

Consistent Error Format

Standardize your API error responses so clients can handle errors programmatically. Include machine-readable codes alongside human-readable messages. A consistent format makes it easier to build robust client applications.

class ApiExceptionHandler extends ExceptionHandler

{

public function render($request, Throwable $e): Response

{

if ($request->expectsJson()) {

return response()->json([

'error' => [

'code' => $this->getErrorCode($e),

'message' => $this->getUserMessage($e),

'details' => config('app.debug') ? $e->getMessage() : null,

],

'request_id' => $request->header('X-Request-ID'),

], $this->getStatusCode($e));

}

return parent::render($request, $e);

}

private function getUserMessage(Throwable $e): string

{

return match(true) {

$e instanceof ValidationException => 'Invalid input provided',

$e instanceof AuthenticationException => 'Authentication required',

$e instanceof ModelNotFoundException => 'Resource not found',

$e instanceof ThrottleException => 'Too many requests',

default => 'An unexpected error occurred',

};

}

}

The request_id field, taken from the X-Request-ID header, enables log correlation: when a user reports a problem, you can search your logs for that ID to find the related entries. Always include it when available.
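The handler above calls `getErrorCode()` and `getStatusCode()` without showing them. One possible shape for that lookup is a single ordered map from exception class to code and status. The sketch below uses built-in PHP exceptions so it runs on its own; `resolveError`, the code strings, and the map contents are assumptions, not the original implementation.

```php
<?php
declare(strict_types=1);

/**
 * Resolves a machine-readable code and HTTP status for an exception.
 * The map is checked in order, so list specific classes before their parents.
 *
 * @param array<class-string, array{0: string, 1: int}> $map
 * @return array{code: string, status: int}
 */
function resolveError(Throwable $e, array $map): array
{
    foreach ($map as $class => [$code, $status]) {
        if ($e instanceof $class) {
            return ['code' => $code, 'status' => $status];
        }
    }

    // Unknown exceptions get a generic code and never leak internals
    return ['code' => 'INTERNAL_ERROR', 'status' => 500];
}

// Illustrative map using built-in exceptions; a Laravel app would list
// ValidationException, AuthenticationException, and so on instead.
$errorMap = [
    InvalidArgumentException::class => ['INVALID_INPUT', 422],
    OutOfBoundsException::class => ['NOT_FOUND', 404],
];
```

Keeping the code and the status in one place makes it harder for the two to drift apart as new exception types are added.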

Include Actionable Information

Error responses should help users understand what went wrong and how to fix it. Include suggestions and links to documentation when possible. The goal is to make errors self-service whenever feasible.

{

"error": {

"code": "PAYMENT_FAILED",

"message": "Payment could not be processed",

"details": {

"reason": "insufficient_funds",

"suggestion": "Please try a different payment method"

}

},

"links": {

"help": "https://docs.example.com/errors/payment-failed",

"support": "https://example.com/support"

}

}

Logging for Debuggability

Structured Error Logging

Log errors with full context so you can reconstruct what happened without access to the original request. Structured logging makes these entries searchable and analyzable with tools like Elasticsearch or Datadog.

try {

$result = $this->processPayment($order);

} catch (PaymentException $e) {

Log::error('Payment processing failed', [

'order_id' => $order->id,

'customer_id' => $order->customer_id,

'amount' => $order->total,

'payment_method' => $order->payment_method,

'gateway_response' => $e->getGatewayResponse(),

'exception' => [

'class' => get_class($e),

'message' => $e->getMessage(),

'code' => $e->getCode(),

],

]);

throw $e;

}

Including the exception class helps distinguish between different failure modes when reviewing logs, while the gateway response provides the external service's perspective on what failed. This context is invaluable during incident response.

Correlation IDs

Track requests across services with a correlation ID. This single identifier links all log entries for a request, even as it crosses service boundaries. When debugging distributed systems, correlation IDs are essential.

// Track request across services

$correlationId = request()->header('X-Correlation-ID', Str::uuid()->toString());

Log::shareContext(['correlation_id' => $correlationId]);

// Pass to downstream services

Http::withHeaders(['X-Correlation-ID' => $correlationId])

->get('http://other-service/api');

When investigating issues, you can search your logs for a correlation ID to see the complete request lifecycle across all services. This transforms debugging from guesswork into methodical analysis.
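Since the incoming header is client-controlled, it can be worth validating it before writing it into every log line. Here is a small framework-free sketch of that step; `resolveCorrelationId`, the allowed character set, and the length limits are assumptions chosen for the example.

```php
<?php
declare(strict_types=1);

/**
 * Reuses the caller's correlation ID when it looks sane, otherwise creates one.
 *
 * @param array<string, string> $headers request headers keyed by name
 */
function resolveCorrelationId(array $headers): string
{
    foreach ($headers as $name => $value) {
        // Header names are case-insensitive; \A and \z reject embedded newlines
        if (strcasecmp((string) $name, 'X-Correlation-ID') === 0
            && preg_match('/\A[A-Za-z0-9-]{8,64}\z/', (string) $value) === 1) {
            return (string) $value;
        }
    }

    return bin2hex(random_bytes(16)); // 32 hex characters
}
```

Rejecting malformed values and generating a fresh ID keeps log lines well-formed while still giving every request an identifier.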

Testing Resilience

Chaos Engineering

Introduce controlled failures in non-production environments to verify your error handling works as expected. This proactive approach finds weaknesses before your users do.

// Randomly fail in non-production

public function maybeInjectFailure(): void

{

if (app()->environment('staging') && rand(1, 100) <= 5) {

throw new Exception('Chaos monkey strikes!');

}

}
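A hard-coded environment check and failure rate are difficult to test and tune. A slightly more flexible variant, sketched below, takes the rate and the random source as constructor arguments. `FailureInjector` and `maybeFail` are hypothetical names; in a Laravel application the `$enabled` flag might come from something like `app()->environment('staging')`.

```php
<?php
declare(strict_types=1);

final class FailureInjector
{
    /**
     * @param int $failurePercent chance of failure per call, 0 to 100
     * @param ?Closure $roll returns an int from 1 to 100; injectable for tests
     */
    public function __construct(
        private bool $enabled,
        private int $failurePercent,
        private ?Closure $roll = null,
    ) {
        $this->roll ??= static fn (): int => random_int(1, 100);
    }

    /** Throws with the configured probability when injection is enabled. */
    public function maybeFail(string $label): void
    {
        if ($this->enabled && ($this->roll)() <= $this->failurePercent) {
            throw new RuntimeException("Injected failure: {$label}");
        }
    }
}
```

Injecting the random source means a test can force a failure (or rule one out) instead of relying on chance.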

Test Failure Scenarios

Write explicit tests for failure cases. These tests verify your fallback behavior and error responses work correctly. Your test suite should include unhappy paths, not just success cases.

public function test_handles_payment_gateway_server_error()

{

Http::fake([

'payment-gateway.com/*' => Http::response([], 500),

]);

$response = $this->postJson('/api/orders', $this->validOrderData);

$response->assertStatus(503)

->assertJson(['error' => ['code' => 'PAYMENT_UNAVAILABLE']]);

}

Your users will encounter failures, so make sure you have thought through how those failures manifest and that your application handles them gracefully.

Conclusion

Resilient applications expect failures and handle them gracefully. Use circuit breakers to prevent cascading failures, implement retry with backoff for transient errors, and design for graceful degradation when services are unavailable. Good error handling improves user experience and makes debugging easier when things go wrong.