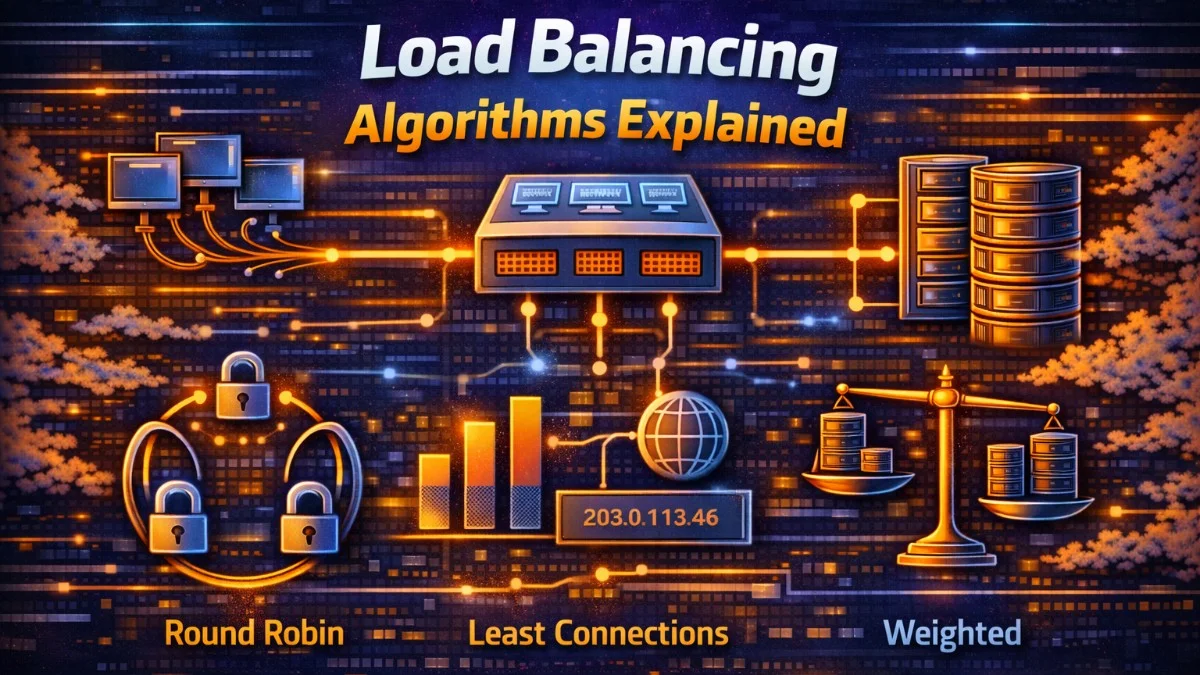

Load balancing distributes traffic across multiple servers to improve availability, reliability, and performance. The algorithm that chooses which server handles each request significantly impacts how well this distribution works. Different algorithms suit different scenarios, and understanding their tradeoffs helps you configure load balancing appropriately.

The ideal algorithm distributes load evenly, responds to server health, minimizes latency, and respects any constraints like session affinity. No single algorithm optimizes all these goals simultaneously, so choosing an algorithm requires understanding your workload's characteristics and priorities.

Round Robin

Round robin is the simplest algorithm: requests go to servers in rotation. Server 1, then server 2, then server 3, then back to server 1. Every server receives the same number of requests over time.

This implementation shows the core logic. The modulo operator ensures you cycle back to the first server after reaching the last.

```python
class RoundRobinBalancer:
    def __init__(self, servers):
        self.servers = servers
        self.current = 0

    def next_server(self):
        server = self.servers[self.current]
        self.current = (self.current + 1) % len(self.servers)
        return server
```

Round robin works well when servers have equal capacity and requests have similar costs. It's simple, predictable, and adds negligible overhead. Many load balancers default to round robin because it handles common cases well.

Round robin fails when those assumptions don't hold. If one server is slower than the others, it receives the same request rate but can't process requests as quickly. Requests queue up, latency increases, and eventually the server becomes overloaded while faster servers sit partially idle. Similarly, if some requests are more expensive than others, unlucky servers might receive a disproportionate share of the work.
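To make the uneven-cost failure concrete, here is a minimal sketch. The server names, the `simulate_round_robin` helper, and the workload (every third request costing ten times as much) are all made up for illustration.

```python
from collections import defaultdict
from itertools import cycle


def simulate_round_robin(servers, request_costs):
    """Assign requests in rotation and return the total cost per server."""
    load = defaultdict(int)
    rotation = cycle(servers)
    for cost in request_costs:
        load[next(rotation)] += cost
    return dict(load)


# Hypothetical workload: every third request is ten times as expensive.
costs = [10, 1, 1] * 100
load = simulate_round_robin(["s1", "s2", "s3"], costs)
print(load)  # {'s1': 1000, 's2': 100, 's3': 100}
```

Each server receives exactly 100 requests, yet `s1` ends up with ten times the work because the expensive requests happen to line up with its turn in the rotation.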

Weighted Round Robin

Weighted round robin extends round robin to handle servers with different capacities. Servers with higher weights receive proportionally more requests. A server with weight 3 receives three times as many requests as a server with weight 1.

The following implementation uses a smooth weighted round robin algorithm. Rather than sending three consecutive requests to a weight-3 server, it interleaves requests for more even distribution.

```python
class WeightedRoundRobinBalancer:
    def __init__(self, servers):
        # servers: [(server, weight), ...]
        self.servers = servers
        self.weights = [w for _, w in servers]
        self.current_weights = [0] * len(servers)

    def next_server(self):
        # Add weights to current weights
        for i in range(len(self.servers)):
            self.current_weights[i] += self.weights[i]

        # Select server with highest current weight
        max_idx = self.current_weights.index(max(self.current_weights))

        # Reduce selected server's current weight
        self.current_weights[max_idx] -= sum(self.weights)

        return self.servers[max_idx][0]
```

The algorithm maintains running totals that ensure requests distribute smoothly according to weights. This prevents bursts of traffic to any single server.
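A short trace shows the interleaving. The sketch below restates the same selection rule as a standalone function (`smooth_wrr_sequence` is a name chosen here, and the weights 5, 1, 1 are an arbitrary example) and prints one full cycle of picks.

```python
def smooth_wrr_sequence(weighted_servers, n):
    """Return the first n picks of smooth weighted round robin."""
    names = [name for name, _ in weighted_servers]
    weights = [weight for _, weight in weighted_servers]
    total = sum(weights)
    current = [0] * len(weights)
    picks = []
    for _ in range(n):
        # Every server gains its weight, the leader is picked,
        # and the leader then pays back the total weight.
        current = [c + w for c, w in zip(current, weights)]
        idx = current.index(max(current))
        current[idx] -= total
        picks.append(names[idx])
    return picks


print(smooth_wrr_sequence([("a", 5), ("b", 1), ("c", 1)], 7))
# ['a', 'a', 'b', 'a', 'c', 'a', 'a']
```

Server `a` still receives five of every seven requests, but `b` and `c` are spread through the cycle instead of waiting behind five consecutive requests to `a`.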

Weights can be set based on server specifications (CPU, memory), observed capacity, or deployment configuration. When auto-scaling adds instances of different sizes, weights ensure appropriate distribution.

The challenge is setting weights correctly. Manual weight configuration doesn't adapt to changing conditions. A server experiencing issues might still have high weight. Some systems dynamically adjust weights based on observed performance.
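As one illustration of deriving static weights from server specifications, the sketch below reduces a table of CPU core counts to the smallest equivalent integer weights. The `weights_from_capacity` helper and the instance sizes are hypothetical, and using core count as the capacity measure is an assumption rather than a recommendation.

```python
from functools import reduce
from math import gcd


def weights_from_capacity(capacity):
    """Scale integer capacities down to the smallest equivalent weights."""
    divisor = reduce(gcd, capacity.values())
    return {server: value // divisor for server, value in capacity.items()}


# Hypothetical instance sizes, measured in CPU cores.
cores = {"small": 2, "medium": 4, "large": 8}
print(weights_from_capacity(cores))  # {'small': 1, 'medium': 2, 'large': 4}
```

Weights computed this way are still static. They share the limitation described above and would need to be recomputed if observed capacity drifts from the specification.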

Least Connections

Least connections routes each request to the server with the fewest active connections. This naturally adapts to server capacity and request cost. A fast server completes requests quickly, reducing its connection count and attracting more requests. A slow server accumulates connections and receives fewer new requests.

This implementation tracks active connections and requires you to call release() when a request completes.

```python
class LeastConnectionsBalancer:
    def __init__(self, servers):
        self.servers = servers
        self.connections = {server: 0 for server in servers}

    def next_server(self):
        # Find server with minimum connections
        server = min(self.servers, key=lambda s: self.connections[s])
        self.connections[server] += 1
        return server

    def release(self, server):
        self.connections[server] -= 1
```

Least connections handles variable request costs better than round robin. Long-running requests don't cause imbalance because each one keeps counting against its server until it completes, which steers new requests elsewhere. The algorithm naturally accounts for server speed differences without explicit configuration.

The drawback is that connection count is an imperfect proxy for load. A server might hold many idle keep-alive connections while actually being lightly loaded. Conversely, a server might have few connections, each consuming significant CPU.
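Because the counts are only accurate if every request is released, including ones that fail, it can help to pair acquisition and release automatically. The sketch below repeats the balancer and adds a hypothetical `connection()` context manager. That method is an illustrative addition, not part of any particular load balancer's API.

```python
from contextlib import contextmanager


class LeastConnectionsBalancer:
    def __init__(self, servers):
        self.servers = servers
        self.connections = {server: 0 for server in servers}

    def next_server(self):
        server = min(self.servers, key=lambda s: self.connections[s])
        self.connections[server] += 1
        return server

    def release(self, server):
        self.connections[server] -= 1

    @contextmanager
    def connection(self):
        """Hypothetical helper: pick a server and always release it."""
        server = self.next_server()
        try:
            yield server
        finally:
            # Runs even if the request handler raises.
            self.release(server)


balancer = LeastConnectionsBalancer(["s1", "s2"])
with balancer.connection() as first:
    with balancer.connection() as second:
        print(first, second)  # s1 s2
print(balancer.connections)  # {'s1': 0, 's2': 0}
```

The `finally` clause keeps a failed request from leaking a connection count, which would otherwise make a server look permanently busier than it is.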

Weighted Least Connections

Weighted least connections combines both approaches. It considers both connection count and server weight, routing to servers that have low connections relative to their capacity. This gives you the adaptability of least connections with explicit capacity hints.

```python
class WeightedLeastConnectionsBalancer:
    def __init__(self, servers):
        # servers: [(server, weight), ...]; weights must be positive
        self.servers = servers
        self.connections = {server: 0 for server, _ in servers}
        self.weights = {server: weight for server, weight in servers}

    def next_server(self):
        # Calculate connections/weight ratio for each server
        def ratio(server):
            return self.connections[server] / self.weights[server]

        server = min([s for s, _ in self.servers], key=ratio)
        self.connections[server] += 1
        return server

    def release(self, server):
        # Call when a request completes, as with plain least connections
        self.connections[server] -= 1
```

The ratio calculation ensures a high-capacity server can handle proportionally more connections before being considered "busy."

This algorithm handles heterogeneous server capacity while still adapting to actual load. It's often the best choice for general-purpose load balancing when your servers have different specifications.
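A small worked example shows how the ratio changes the decision. The server names, connection counts, and weights below are invented for illustration.

```python
def pick_least_connections(connections):
    """Plain least connections: lowest raw count wins."""
    return min(connections, key=lambda s: connections[s])


def pick_weighted_least_connections(connections, weights):
    """Weighted variant: lowest connections-per-weight ratio wins."""
    return min(connections, key=lambda s: connections[s] / weights[s])


connections = {"small": 3, "large": 8}
weights = {"small": 1, "large": 4}

# Ratios: small = 3 / 1 = 3.0, large = 8 / 4 = 2.0
print(pick_least_connections(connections))                    # small
print(pick_weighted_least_connections(connections, weights))  # large
```

Plain least connections would keep sending traffic to the small server because its raw count is lower, even though the large server has more spare capacity relative to its weight.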

IP Hash

IP hash uses the client's IP address to consistently route them to the same server. Hash the IP address and use the result to select a server. The same client always reaches the same server (unless servers change).

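```python
import hashlib


class IPHashBalancer:
    def __init__(self, servers):
        self.servers = servers

    def next_server(self, client_ip):
        # Use a stable digest. Python's built-in hash() is salted per process
        # for strings, so the same IP could map to a different server after a
        # restart or on another balancer instance.
        digest = hashlib.md5(client_ip.encode()).hexdigest()
        hash_value = int(digest, 16)
        index = hash_value % len(self.servers)
        return self.servers[index]
```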

IP hash provides "sticky" sessions without explicit session state. This is useful for applications that benefit from repeated requests hitting the same server, such as those with in-memory caches or stateful connections.

The limitation is that IP addresses don't correspond one-to-one with users. Many users might share an IP (corporate NAT, mobile carriers), causing imbalanced distribution. Others might have changing IPs, defeating the stickiness.
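The earlier caveat, that a client keeps its server only while the server list stays the same, can be quantified. The sketch below uses made-up client addresses and server names to count how many clients change servers when a fifth server is added under modulo hashing. MD5 is used only as a stable, well-distributed hash, not for security.

```python
import hashlib


def stable_hash(key):
    """Deterministic hash that does not vary between processes."""
    return int(hashlib.md5(key.encode()).hexdigest(), 16)


def modulo_pick(key, servers):
    return servers[stable_hash(key) % len(servers)]


# Hypothetical client addresses and server names.
clients = [f"10.0.{i // 256}.{i % 256}" for i in range(2000)]
before = ["s1", "s2", "s3", "s4"]
after = before + ["s5"]

moved = sum(modulo_pick(ip, before) != modulo_pick(ip, after) for ip in clients)
print(f"{moved / len(clients):.0%} of clients changed servers")
```

With a uniform hash, a client keeps its server only when its hash gives the same remainder modulo 4 and modulo 5, which happens for about one in five values. Roughly 80% of clients therefore lose their stickiness after a single server is added.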

Consistent Hashing

Consistent hashing improves on simple modulo hashing by minimizing redistribution when servers change. When a server is removed, only the keys that mapped to it move; when a server is added, only the keys it takes over move. All other mappings remain stable.

This implementation uses virtual nodes to improve distribution across the hash ring.

```python
import hashlib
import bisect


class ConsistentHashBalancer:
    def __init__(self, servers, virtual_nodes=100):
        self.ring = []
        self.server_map = {}

        for server in servers:
            for i in range(virtual_nodes):
                key = f"{server}:{i}"
                hash_value = self._hash(key)
                self.ring.append(hash_value)
                self.server_map[hash_value] = server

        self.ring.sort()

    def _hash(self, key):
        return int(hashlib.md5(key.encode()).hexdigest(), 16)

    def next_server(self, client_key):
        if not self.ring:
            return None

        hash_value = self._hash(client_key)
        idx = bisect.bisect(self.ring, hash_value)

        if idx == len(self.ring):
            idx = 0

        return self.server_map[self.ring[idx]]
```

Virtual nodes improve distribution by mapping each physical server to multiple points on the hash ring. Without virtual nodes, servers might receive unequal portions of the hash space. The bisect module efficiently finds where a key falls in the sorted ring.

Consistent hashing is essential for distributed caches and databases where data is partitioned across servers. Adding a server to reach N servers should move only about 1/N of the data, rather than rehashing nearly everything.
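The stability claim can be checked with a small experiment. The sketch below is a minimal ring with `add_server` and `remove_server` methods. The `HashRing` class, its method names, and the key and server names are all assumptions made for this illustration.

```python
import bisect
import hashlib


class HashRing:
    """Minimal hash ring with hypothetical add/remove methods."""

    def __init__(self, servers=(), virtual_nodes=100):
        self.virtual_nodes = virtual_nodes
        self.ring = []        # sorted virtual node hashes
        self.server_map = {}  # virtual node hash -> server
        for server in servers:
            self.add_server(server)

    def _hash(self, key):
        return int(hashlib.md5(key.encode()).hexdigest(), 16)

    def add_server(self, server):
        for i in range(self.virtual_nodes):
            h = self._hash(f"{server}:{i}")
            bisect.insort(self.ring, h)
            self.server_map[h] = server

    def remove_server(self, server):
        self.ring = [h for h in self.ring if self.server_map[h] != server]
        self.server_map = {
            h: s for h, s in self.server_map.items() if s != server
        }

    def lookup(self, key):
        if not self.ring:
            return None
        # Wrap around to the first node when the key hashes past the last one.
        idx = bisect.bisect(self.ring, self._hash(key)) % len(self.ring)
        return self.server_map[self.ring[idx]]


keys = [f"user-{i}" for i in range(2000)]
ring = HashRing(["s1", "s2", "s3", "s4"])
before = {k: ring.lookup(k) for k in keys}

ring.add_server("s5")
after = {k: ring.lookup(k) for k in keys}

moved = [k for k in keys if before[k] != after[k]]
print(f"{len(moved) / len(keys):.0%} of keys moved")
print(all(after[k] == "s5" for k in moved))  # True
```

Every key that moves lands on the new server, and the moved fraction should come out near one fifth. Modulo hashing would remap most keys in the same scenario.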

Least Response Time

Least response time routes requests to the server responding fastest. It measures actual latency rather than proxy metrics like connection count. This directly optimizes for user-perceived performance.

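```python
class LeastResponseTimeBalancer:
    def __init__(self, servers):
        self.servers = servers
        # None means "no measurement yet"
        self.response_times = {server: None for server in servers}
        self.alpha = 0.1  # Smoothing factor

    def next_server(self):
        # Unmeasured servers sort first so each one gets an initial sample
        def estimate(server):
            measured = self.response_times[server]
            return 0.0 if measured is None else measured

        return min(self.servers, key=estimate)

    def record_response(self, server, response_time):
        current = self.response_times[server]
        if current is None:
            # Seed with the first sample so the average is not biased toward 0
            self.response_times[server] = response_time
        else:
            # Exponential moving average
            self.response_times[server] = (
                self.alpha * response_time + (1 - self.alpha) * current
            )
```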

The exponential moving average smooths out individual request variations while still adapting to changing performance. A lower alpha gives more weight to historical data; a higher alpha responds faster to changes.
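To see the effect of alpha numerically, the sketch below applies the same update rule to a made-up series in which a server's latency jumps from 100 ms to 200 ms. Seeding the average with the first sample is an assumption of this sketch, and `ema_series` is a name chosen here.

```python
def ema_series(samples, alpha):
    """Return the running exponential moving average of samples."""
    estimate = samples[0]  # assumption: seed with the first sample
    history = [estimate]
    for sample in samples[1:]:
        estimate = alpha * sample + (1 - alpha) * estimate
        history.append(estimate)
    return history


# Hypothetical latencies in milliseconds: a step from 100 to 200.
samples = [100.0] * 3 + [200.0] * 5

slow = ema_series(samples, alpha=0.1)
fast = ema_series(samples, alpha=0.5)
print(round(slow[-1], 1), round(fast[-1], 1))  # 141.0 196.9
```

Five requests after the slowdown, the alpha 0.1 estimate has covered less than half of the gap, while the alpha 0.5 estimate has almost caught up. The price of the higher alpha is that a single outlier request also moves the estimate much further.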

This algorithm directly optimizes for what users care about: latency. It naturally adapts to server performance, network conditions, and request costs.

The challenge is measuring response time accurately across all servers. A server receiving no traffic has no recent response time measurement. Some implementations send health check requests specifically to measure latency, even to servers not receiving user traffic.

Choosing an Algorithm

For homogeneous servers with uniform requests, round robin is simple and effective. Most situations start here.

For heterogeneous servers, weighted round robin or weighted least connections account for capacity differences.

For variable request costs, least connections adapts to actual load rather than request count.

For session affinity, IP hash or consistent hashing routes related requests together.

For latency optimization, least response time directly minimizes what users experience.

Many load balancers combine algorithms with health checking. Unhealthy servers are removed from rotation regardless of algorithm. This prevents routing to failed servers while the chosen algorithm optimizes distribution among healthy ones.
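One way to picture the combination is a selection algorithm that simply skips servers marked unhealthy. The sketch below layers this on round robin. The class name and the `mark_down` and `mark_up` methods are hypothetical, and how health is actually detected (probes, timeouts, error rates) is left out.

```python
class HealthAwareRoundRobin:
    """Round robin over servers currently marked healthy (hypothetical API)."""

    def __init__(self, servers):
        self.servers = list(servers)
        self.healthy = set(self.servers)
        self.current = 0

    def mark_down(self, server):
        self.healthy.discard(server)

    def mark_up(self, server):
        if server in self.servers:
            self.healthy.add(server)

    def next_server(self):
        # Try each server at most once per call, skipping unhealthy ones.
        for _ in range(len(self.servers)):
            server = self.servers[self.current]
            self.current = (self.current + 1) % len(self.servers)
            if server in self.healthy:
                return server
        return None  # no healthy server available


balancer = HealthAwareRoundRobin(["s1", "s2", "s3"])
balancer.mark_down("s2")
print([balancer.next_server() for _ in range(4)])  # ['s1', 's3', 's1', 's3']
```

The same filtering step could sit in front of any of the other algorithms: restrict the candidate set to healthy servers first, then apply the selection rule.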

Conclusion

Load balancing algorithms distribute traffic across servers with different tradeoffs. Round robin is simple but assumes uniformity. Least connections adapts to actual load. Consistent hashing provides stability across server changes. Least response time optimizes for latency.

The right algorithm depends on your workload characteristics: server heterogeneity, request variability, session requirements, and performance priorities. Many load balancers allow configuring algorithms per service, matching each workload to an appropriate strategy.