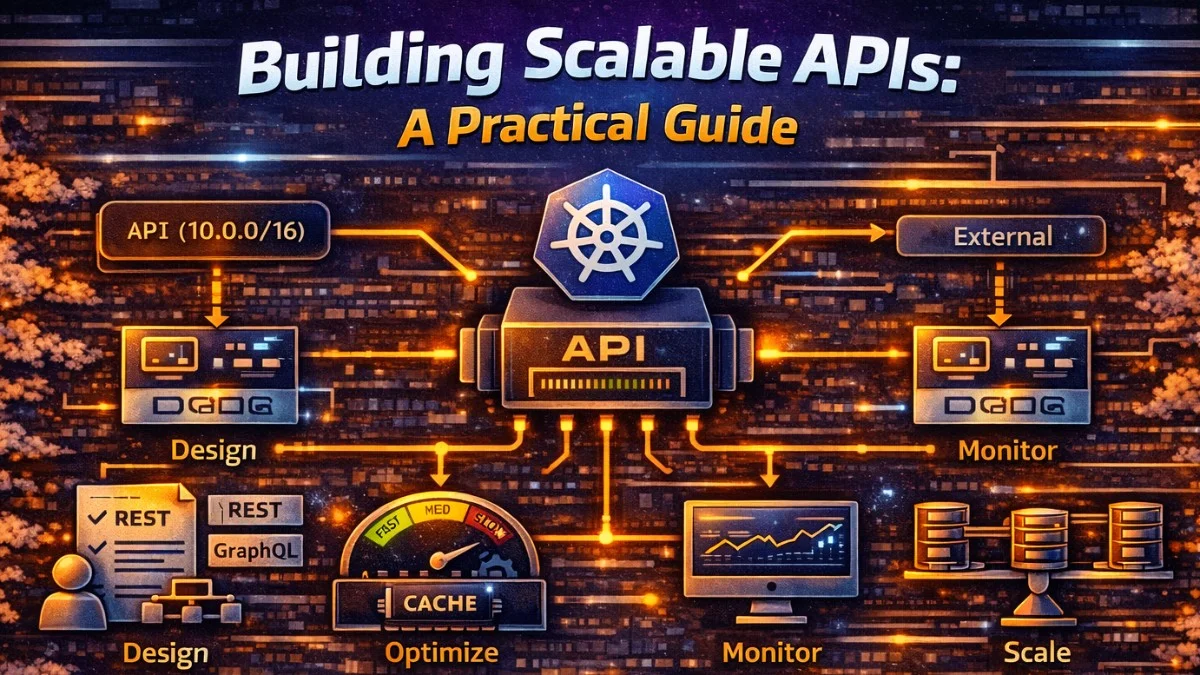

Building an API that works is straightforward. Building one that continues to work as your user base grows from hundreds to millions requires deliberate design decisions from the start. This guide covers practical techniques for creating APIs that scale gracefully.

Why Scalability Matters Early

It's tempting to think scalability is a problem for later: after you've found product-market fit, after you have paying customers, after the investors come through. But architectural decisions made early become increasingly expensive to change.

An API designed for scale doesn't have to be over-engineered. It simply follows patterns that avoid common bottlenecks. The cost of implementing these patterns upfront is minimal compared to rewriting critical systems under pressure when your application starts failing.

RESTful Design Principles

REST isn't just about using HTTP verbs correctly, though that matters. Scalable REST APIs follow consistent patterns that make them predictable and cacheable.

Resource naming should be intuitive and hierarchical. When designing your endpoint structure, think about how clients will discover and navigate your resources. The following pattern demonstrates clean, predictable URLs that follow REST conventions. You'll notice that each resource is a noun, and the HTTP method communicates the action being performed:

GET /projects # List projects

GET /projects/123 # Get specific project

GET /projects/123/tasks # Get tasks for a project

POST /projects/123/tasks # Create a task in a project

This consistency means clients can predict your API structure without consulting documentation for every endpoint.

Use nouns for resources, not verbs. The HTTP method provides the verb. Avoid patterns like /getProjects or /createTask; these ignore what makes REST effective.

Keep your URL structure shallow. Deep nesting like /clients/1/projects/2/tasks/3/comments/4 becomes unwieldy. Beyond two levels, consider top-level resources with filters. This approach gives you the same access pattern while keeping URLs manageable:

GET /comments?task_id=3

You can always add relationship filters without creating deeply nested URLs that become impossible to maintain.

Versioning Strategies

Your API will change. How you handle those changes determines whether existing clients break.

URL versioning is the most explicit approach. You'll see the version in every request, which simplifies debugging when clients report issues:

/api/v1/projects

/api/v2/projects

The downside is that clients must update their URLs to adopt a new version.

Header versioning keeps URLs clean. This approach is more RESTful in principle, placing version information in the Accept header where metadata belongs:

Accept: application/vnd.api+json; version=2

The trade-off is that header versioning is harder to test casually: you can't just paste a URL into a browser.

Whichever you choose, maintain backward compatibility within a version. Adding fields is fine. Removing or renaming fields requires a new version.
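As a rough sketch of how a server might support either scheme, the helper below checks the URL path first and falls back to the Accept header. The function name resolveApiVersion and the default of version 1 are assumptions made for illustration; they do not come from any framework.

```php
<?php

/**
 * Resolve the requested API version from the URL path (/api/v2/...) or,
 * failing that, from a "version=N" parameter in the Accept header.
 * Hypothetical helper; the default version is an assumption.
 */
function resolveApiVersion(string $path, ?string $acceptHeader = null, int $default = 1): int
{
    // URL versioning: /api/v2/projects
    if (preg_match('#^/api/v(\d+)(/|$)#', $path, $m) === 1) {
        return (int) $m[1];
    }

    // Header versioning: Accept: application/vnd.api+json; version=2
    if ($acceptHeader !== null && preg_match('/;\s*version=(\d+)/i', $acceptHeader, $m) === 1) {
        return (int) $m[1];
    }

    return $default;
}

echo resolveApiVersion('/api/v2/projects'), "\n";
echo resolveApiVersion('/projects', 'application/vnd.api+json; version=2'), "\n";
```

Resolving the version in one place keeps the rest of the request handling independent of which versioning scheme you picked.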

Pagination Done Right

Returning unlimited results is a scalability time bomb. Even if your database handles it today, it won't when you have 100,000 records.

Cursor-based pagination scales better than offset pagination. The cursor encodes the position in your dataset, typically using a base64-encoded identifier or timestamp. Here's what a cursor-paginated response looks like. You'll want to include both the cursor for the next page and a flag indicating whether more data exists:

    {
      "data": [...],
      "meta": {
        "next_cursor": "eyJpZCI6MTAwfQ==",
        "has_more": true
      }
    }

Clients use the next_cursor value to request the next page, and the has_more flag tells them when they've reached the end.

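Offset pagination (?page=50) requires the database to read and discard every skipped row, so it gets slower as the offset increases. Cursor pagination seeks straight to the position with an indexed lookup (for example, WHERE id > 100), so performance stays consistent regardless of position, provided the cursor column is indexed.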
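The cursor mechanics can be sketched in plain PHP. This is a minimal illustration, assuming the cursor wraps the last seen id in base64-encoded JSON and using an in-memory array in place of an indexed table. The names encodeCursor, decodeCursor, and paginate are hypothetical.

```php
<?php

/** Encode the last seen ID as an opaque cursor. */
function encodeCursor(int $lastId): string
{
    return base64_encode(json_encode(['id' => $lastId]));
}

/** Decode a cursor back to an ID; a missing or invalid cursor means "start from the beginning". */
function decodeCursor(?string $cursor): int
{
    if ($cursor === null) {
        return 0;
    }
    $decoded = json_decode((string) base64_decode($cursor, true), true);

    return is_array($decoded) && isset($decoded['id']) ? (int) $decoded['id'] : 0;
}

/**
 * Return one page of rows whose id is greater than the cursor.
 * $rows stands in for a table sorted by id; in SQL this would be roughly
 * WHERE id > :last_id ORDER BY id LIMIT :limit + 1
 */
function paginate(array $rows, ?string $cursor, int $limit): array
{
    $limit = max(1, $limit);
    $lastId = decodeCursor($cursor);
    $matching = array_values(array_filter($rows, fn (array $r): bool => $r['id'] > $lastId));

    // Fetch one extra row to learn whether another page exists.
    $page = array_slice($matching, 0, $limit + 1);
    $hasMore = count($page) > $limit;
    $page = array_slice($page, 0, $limit);

    return [
        'data' => $page,
        'meta' => [
            'next_cursor' => $hasMore ? encodeCursor($page[count($page) - 1]['id']) : null,
            'has_more' => $hasMore,
        ],
    ];
}
```

Requesting one row more than the page size is a common way to compute has_more without a separate COUNT query.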

Provide sensible defaults and maximum limits. Clients shouldn't need to specify pagination parameters for typical use cases, but you should protect your server from expensive queries. Here's how you can implement flexible limits with built-in safeguards:

GET /projects # Returns 25 items (default)

GET /projects?limit=50 # Returns 50 items

GET /projects?limit=1000 # Returns 100 items (enforced maximum)

Notice how the last example silently caps at 100 rather than returning an error. This prevents accidental denial-of-service from clients requesting too much data.
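One way to implement the default and the cap is a small clamping helper. The sketch below assumes the raw limit arrives as a query-string value. The constant and function names are illustrative, and the fallback for non-numeric input is a design choice rather than a rule.

```php
<?php

const DEFAULT_LIMIT = 25;
const MAX_LIMIT = 100;

/** Turn the raw ?limit= query value into a safe page size. */
function resolveLimit(?string $raw): int
{
    // Missing or non-numeric values fall back to the default.
    if ($raw === null || preg_match('/^\d+$/', $raw) !== 1) {
        return DEFAULT_LIMIT;
    }

    // Clamp into [1, MAX_LIMIT] instead of rejecting the request.
    return max(1, min((int) $raw, MAX_LIMIT));
}

echo resolveLimit(null), "\n";   // default
echo resolveLimit('1000'), "\n"; // capped at the maximum
```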

Filtering and Sorting

Allow clients to request only what they need. This reduces bandwidth, speeds responses, and lowers database load.

Field selection (sparse fieldsets) lets clients specify which fields to return. This is particularly valuable for mobile clients or bandwidth-constrained environments where every byte matters:

GET /projects?fields=id,name,status
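Server-side, field selection can be as small as an array intersection. The sketch below assumes a whitelist of fields that clients are allowed to request. The function name selectFields and the sample record are hypothetical.

```php
<?php

/** Keep only the requested fields, ignoring any name that is not whitelisted. */
function selectFields(array $record, ?string $fieldsParam, array $allowed): array
{
    // No fields parameter: return the full record.
    if ($fieldsParam === null || $fieldsParam === '') {
        return $record;
    }

    $requested = array_intersect(explode(',', $fieldsParam), $allowed);

    return array_intersect_key($record, array_flip($requested));
}

$project = ['id' => 1, 'name' => 'Site', 'status' => 'active', 'budget' => 5000];
print_r(selectFields($project, 'id,name,status', ['id', 'name', 'status']));
```

Pushing the same field list into the database query (selecting only those columns) saves more than trimming the response after the fact.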

Filtering narrows the result set. Support the fields your clients commonly filter on. You can start simple and add more filter options as client needs emerge:

GET /projects?status=active&client_id=123

For complex filters, consider a structured query parameter. This approach provides consistency and makes it clear which parameters are filters versus other query options like pagination or field selection:

GET /projects?filter[status]=active&filter[created_after]=2024-01-01

The bracket notation groups related parameters together, making your API more self-documenting.
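PHP already expands bracket notation into nested arrays when it parses a query string, so grouped filters are cheap to read. A minimal sketch, assuming a whitelist of filterable fields (parseFilters is a hypothetical helper):

```php
<?php

/** Extract whitelisted filters from a raw query string that uses filter[...] notation. */
function parseFilters(string $queryString, array $allowed): array
{
    // parse_str turns filter[status]=active into ['filter' => ['status' => 'active']].
    parse_str($queryString, $params);
    $filters = is_array($params['filter'] ?? null) ? $params['filter'] : [];

    // Drop anything the API does not explicitly support filtering on.
    return array_intersect_key($filters, array_flip($allowed));
}

$filters = parseFilters(
    'filter[status]=active&filter[created_after]=2024-01-01&limit=50',
    ['status', 'created_after']
);
print_r($filters);
```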

Sorting should be explicit and consistent. The convention of prefixing with a minus sign for descending order is widely understood among API consumers:

GET /projects?sort=-created_at,name # Descending created_at, then ascending name
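Parsing the sort parameter might look like the sketch below. It assumes PHP 8 (for str_starts_with) and a whitelist of sortable columns, so clients cannot sort on unindexed or private fields. The name parseSort is illustrative.

```php
<?php

/**
 * Parse "-created_at,name" into [column => direction] pairs.
 * Columns outside the whitelist are dropped.
 */
function parseSort(string $sortParam, array $allowed): array
{
    $order = [];
    foreach (explode(',', $sortParam) as $term) {
        $term = trim($term);
        $descending = str_starts_with($term, '-');
        $column = ltrim($term, '-');

        if (in_array($column, $allowed, true)) {
            $order[$column] = $descending ? 'desc' : 'asc';
        }
    }

    return $order;
}

print_r(parseSort('-created_at,name', ['created_at', 'name']));
```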

Caching Strategies

Effective caching is often the single biggest factor in API scalability. A request that never hits your server costs you nothing to serve.

HTTP caching headers tell clients and intermediaries what can be cached. These two headers work together: Cache-Control sets the rules, and ETag provides a fingerprint for validation. Here's an example of headers you might return for a project resource:

Cache-Control: public, max-age=3600

ETag: "abc123"

The public directive allows CDNs and proxies to cache the response, while max-age=3600 specifies the response is valid for one hour.

Use ETags for conditional requests. Clients send If-None-Match: "abc123", and you return 304 Not Modified if nothing changed. This validates cache freshness without transferring data.
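The conditional-request flow can be sketched without a framework. The example below assumes the ETag is a hash of the response body and compares validators exactly, ignoring weak validators (the W/ prefix) for brevity. The function names are hypothetical.

```php
<?php

/** Derive an ETag from the response body (any stable hash works). */
function makeEtag(string $body): string
{
    return '"' . md5($body) . '"';
}

/**
 * Build the status, headers, and body for a cacheable GET response.
 * $ifNoneMatch is the raw If-None-Match request header, if the client sent one.
 */
function conditionalResponse(string $body, ?string $ifNoneMatch): array
{
    $etag = makeEtag($body);
    $headers = ['Cache-Control' => 'public, max-age=3600', 'ETag' => $etag];

    // The header may list several validators, or "*" to match anything.
    $candidates = $ifNoneMatch === null ? [] : array_map('trim', explode(',', $ifNoneMatch));
    if (in_array($etag, $candidates, true) || in_array('*', $candidates, true)) {
        return ['status' => 304, 'headers' => $headers, 'body' => ''];
    }

    return ['status' => 200, 'headers' => $headers, 'body' => $body];
}
```

Hashing the body still costs a full render on the server. Deriving the ETag from a version number or updated_at timestamp avoids that work when such a field is available.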

Application-level caching stores computed results. In Laravel, the Cache::remember pattern elegantly handles the cache-miss scenario by only executing the closure when needed. This technique is perfect for expensive database queries or API calls:

    // Cache each client's project list for one hour (3600 seconds).
    $projects = Cache::remember("client:{$clientId}:projects", 3600, function () use ($clientId) {
        return Project::where('client_id', $clientId)->get();
    });

The cache key includes the client ID to ensure each client's data is cached separately.

Invalidate caches deliberately when data changes. Cache invalidation is genuinely hard; prefer short TTLs over complex invalidation logic when possible.
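To make the TTL-versus-invalidation trade-off concrete, here is a minimal in-memory sketch of the remember pattern with explicit expiry and a forget method. It is an illustration only, not Laravel's implementation. The class name TtlCache and the injectable $now parameter are assumptions added to keep the example testable.

```php
<?php

/** Minimal in-memory cache with per-entry expiry (requires PHP 8). */
final class TtlCache
{
    /** @var array<string, array{value: mixed, expiresAt: int}> */
    private array $entries = [];

    /** Return the cached value, or compute and store it when missing or expired. */
    public function remember(string $key, int $ttlSeconds, callable $compute, ?int $now = null): mixed
    {
        $now ??= time();
        $entry = $this->entries[$key] ?? null;

        if ($entry !== null && $entry['expiresAt'] > $now) {
            return $entry['value']; // cache hit
        }

        $value = $compute(); // cache miss: run the expensive work once
        $this->entries[$key] = ['value' => $value, 'expiresAt' => $now + $ttlSeconds];

        return $value;
    }

    /** Explicit invalidation, for example after a write to the underlying data. */
    public function forget(string $key): void
    {
        unset($this->entries[$key]);
    }
}
```

With a short TTL, stale data corrects itself when the entry expires. The forget call is only needed where clients must see a write immediately.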

Rate Limiting

Rate limiting protects your API from abuse and ensures fair resource allocation.

Implement tiered limits based on authentication:

- Anonymous: 60 requests/hour

- Authenticated: 1,000 requests/hour

- Premium: 10,000 requests/hour

Return rate limit information in headers. These headers let well-behaved clients implement backoff strategies before hitting limits. You should include these headers on every response, not just when limits are exceeded:

X-RateLimit-Limit: 1000

X-RateLimit-Remaining: 847

X-RateLimit-Reset: 1640995200

The Reset value is a Unix timestamp indicating when the rate limit window resets.

When limits are exceeded, return 429 Too Many Requests with a Retry-After header.
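The headers and the 429 response can be produced by a small fixed-window counter. The sketch below keeps its state in memory, so it is only illustrative: a real deployment would likely need shared storage such as Redis. The class name FixedWindowLimiter and the choice of a fixed window (rather than a sliding window or token bucket) are assumptions.

```php
<?php

/** Fixed-window rate limiter kept in memory (requires PHP 8). */
final class FixedWindowLimiter
{
    /** @var array<string, array{count: int, resetAt: int}> */
    private array $windows = [];

    public function __construct(private int $limit, private int $windowSeconds)
    {
    }

    /** Record one request for $clientKey and return the status plus headers to send. */
    public function hit(string $clientKey, int $now): array
    {
        $window = $this->windows[$clientKey] ?? null;
        if ($window === null || $now >= $window['resetAt']) {
            // Start a fresh window once the previous one has expired.
            $window = ['count' => 0, 'resetAt' => $now + $this->windowSeconds];
        }

        $allowed = $window['count'] < $this->limit;
        if ($allowed) {
            $window['count']++;
        }
        $this->windows[$clientKey] = $window;

        $headers = [
            'X-RateLimit-Limit' => (string) $this->limit,
            'X-RateLimit-Remaining' => (string) ($this->limit - $window['count']),
            'X-RateLimit-Reset' => (string) $window['resetAt'],
        ];
        if (!$allowed) {
            // Seconds until the window resets.
            $headers['Retry-After'] = (string) ($window['resetAt'] - $now);
        }

        return ['status' => $allowed ? 200 : 429, 'headers' => $headers];
    }
}
```

Tiered limits then come down to constructing the limiter with a different limit per plan, for example new FixedWindowLimiter(1000, 3600) for authenticated clients.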

Database Query Optimization

The database is usually the bottleneck. Efficient queries are essential for scalability.

Always eager load relationships to avoid N+1 queries. The first example below triggers a separate query for each project's client; the second fetches everything in just two queries. This difference becomes dramatic at scale:

    // Bad: N+1 queries
    $projects = Project::all();
    foreach ($projects as $project) {
        echo $project->client->name; // Query per project
    }

    // Good: 2 queries total
    $projects = Project::with('client')->get();

With 100 projects, the bad approach executes 101 queries while the good approach executes just 2. You can imagine how this scales with thousands of projects.
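The query arithmetic can be checked with a stand-in data source that counts calls. This is a framework-free illustration, not how Eloquent works internally. FakeDb and its sample data are invented for the example.

```php
<?php

/** Stand-in for a database that counts how many queries it receives. */
final class FakeDb
{
    public int $queries = 0;

    private array $clients = [1 => 'Acme', 2 => 'Globex'];
    private array $projects = [
        ['id' => 10, 'client_id' => 1],
        ['id' => 11, 'client_id' => 2],
        ['id' => 12, 'client_id' => 1],
    ];

    public function allProjects(): array
    {
        $this->queries++;
        return $this->projects;
    }

    public function clientById(int $id): string
    {
        $this->queries++;
        return $this->clients[$id];
    }

    /** One query for many IDs, like WHERE id IN (...). */
    public function clientsByIds(array $ids): array
    {
        $this->queries++;
        return array_intersect_key($this->clients, array_flip($ids));
    }
}

// N+1: one query for the projects, then one more per project.
$db = new FakeDb();
foreach ($db->allProjects() as $project) {
    $db->clientById($project['client_id']);
}
$lazyQueries = $db->queries;

// Eager: one query for the projects, one for all of their clients.
$db = new FakeDb();
$projects = $db->allProjects();
$clients = $db->clientsByIds(array_unique(array_column($projects, 'client_id')));
$eagerQueries = $db->queries;

echo "lazy: {$lazyQueries}, eager: {$eagerQueries}\n"; // lazy: 4, eager: 2
```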

Index columns used in WHERE, ORDER BY, and JOIN clauses. Review slow query logs regularly to identify missing indexes.

For read-heavy endpoints, consider read replicas. Direct read queries to replicas while writes go to the primary database.

Monitoring and Observability

You can't optimize what you don't measure.

Log request timing, database query counts, and external service latency. Aggregate this data to identify trends before they become problems.
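A lightweight starting point for request timing is to wrap each handler and emit a structured log line. The sketch below assumes JSON lines written with error_log. The wrapper name withTiming and the log field names are illustrative.

```php
<?php

/** Run a request handler and return its result together with timing metadata. */
function withTiming(string $route, callable $handler): array
{
    $start = hrtime(true); // monotonic clock, in nanoseconds
    $result = $handler();
    $elapsedMs = (hrtime(true) - $start) / 1_000_000;

    // One JSON object per line is easy to aggregate later.
    $log = ['route' => $route, 'duration_ms' => round($elapsedMs, 3)];
    error_log(json_encode($log));

    return ['result' => $result, 'log' => $log];
}

$out = withTiming('GET /projects', fn (): string => 'ok');
```

The same wrapper is a natural place to add a database query count or external-call latency once those numbers are available.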

Set up alerts for anomalies:

- Response times exceeding thresholds

- Error rates above baseline

- Queue depths growing unexpectedly

Distributed tracing helps debug performance issues across services. Tools like Jaeger or AWS X-Ray show where time is spent in complex request flows.

Real-World Scaling Example

Consider an API serving project management data. The initial implementation returns all projects for a client:

GET /projects?client_id=123

This works fine with 50 projects. At 5,000 projects, response times approach 2 seconds.

The scaling journey:

- Add pagination (immediate improvement)

- Add database indexes on client_id and status

- Implement response caching with 5-minute TTL

- Add field selection to reduce payload size

- Move to cursor-based pagination for deep pages

Each step provides measurable improvement. Combined, they support 100x the original load without architectural changes.

Conclusion

Scalable APIs aren't built with exotic technology; they're built with disciplined application of proven patterns. Consistent resource design, intelligent caching, efficient database queries, and proper pagination handle most scaling challenges.

Start with these fundamentals. Monitor your actual usage patterns. Optimize based on real data, not assumptions. The best time to think about scale is at the beginning, but the specifics of how you scale should be driven by what you learn as your API grows.